Smart Alex

Alex was aptly named because she’s, like, super smart. She likes teaching people, and her hobby is posing people questions so that she can explain the answers to them. Alex appears at the end of each chapter of Discovering Statistics Using R and RStudio (2nd edition) to pose you some questions and give you tasks to help you to practice your data analysis skills. This page contains her answers to those questions.

This document contains abridged sections from Discovering Statistics Using R and RStudio by Andy Field so there are some copyright considerations. You can use this material for teaching and non-profit activities but please do not meddle with it or claim it as your own work. See the full license terms at the bottom of the page.

General tips

When attempting these tasks, remember to use a setup code chunk at the start of your Quarto document (below the YAML) and load any packages you need using library(). Most tasks are likely to use the tidyverse and easystats packages, so as a bare minimum your setup code chunk should contain library(tidyverse) and library(easystats).

Many tasks will require you to load data. You can do this in one of two ways. The easiest is to grab the data directly from the discovr package using the general code:

my_tib <- discovr::name_of_data

in which you replace my_tib with the name you wish to give the data once loaded and name_of_data is the data you wish to load. In RStudio if you type discovr:: it will list the datasets available. For example, to load the data called students into a tibble called students_tib we’d execute:

students_tib <- discovr::students

Alternatively, download the CSV file from https://www.discovr.rocks/csv/name_of_file replacing name_of_file with the name of the csv file. For example, to download students.csv use this url: https://www.discovr.rocks/csv/students.csv

Set up an RStudio project (exactly as described in Chapter 4), place the csv file in your data folder, and read it in with code that uses here to locate the file within the project and read_csv() to read it into R. In that code, replace my_tib with the name you wish to give the data once loaded and name_of_file.csv with the name of the file you wish to read in. For example, to load students.csv you would use students.csv as the file name.

Chapter 1

Task 1.1

What are (broadly speaking) the five stages of the research process?

- Generate a research question: through an initial observation (hopefully backed up by some data).

- Generate a theory to explain your initial observation.

- Generate hypotheses: break your theory down into a set of testable predictions.

- Collect data to test the theory: decide on what variables you need to measure to test your predictions and how best to measure or manipulate those variables.

- Analyse the data: look at the data visually and by fitting a statistical model to see if it supports your predictions (and therefore your theory). At this point you should return to your theory and revise it if necessary.

Task 1.2

What is the fundamental difference between experimental and correlational research?

In a word, causality. In experimental research we manipulate a variable (predictor, independent variable) to see what effect it has on another variable (outcome, dependent variable). This manipulation, if done properly, allows us to compare situations where the causal factor is present to situations where it is absent. Therefore, if there are differences between these situations, we can attribute cause to the variable that we manipulated. In correlational research, we measure things that naturally occur and so we cannot attribute cause but instead look at natural covariation between variables.

Task 1.3

What is the level of measurement of the following variables?

- The number of downloads of different bands’ songs on iTunes.

- This is a discrete ratio measure. It is discrete because you can download only whole songs, and it is ratio because it has a true and meaningful zero (no downloads at all).

- The names of the bands downloaded.

- This is a nominal variable. Bands can be identified by their name, but the names have no meaningful order. The fact that Norwegian black metal band 1349 called themselves 1349 does not make them better than British boy-band has-beens 911; the fact that 911 were a bunch of talentless idiots does, though.

- Their positions in the download chart.

- This is an ordinal variable. We know that the band at number 1 sold more than the band at number 2 or 3 (and so on) but we don’t know how many more downloads they had. So, this variable tells us the order of magnitude of downloads, but doesn’t tell us how many downloads there actually were.

- The money earned by the bands from the downloads.

- This variable is continuous and ratio. It is continuous because money (pounds, dollars, euros or whatever) can be broken down into very small amounts (you can earn fractions of euros even though there may not be an actual coin to represent these fractions). It is ratio because earning nothing is a true and meaningful zero.

- The weight of drugs bought by the band with their royalties.

- This variable is continuous and ratio. If the drummer buys 100 g of cocaine and the singer buys 1 kg, then the singer has 10 times as much.

- The type of drugs bought by the band with their royalties.

- This variable is categorical and nominal: the name of the drug tells us something meaningful (crack, cannabis, amphetamine, etc.) but has no meaningful order.

- The phone numbers that the bands obtained because of their fame.

- This variable is categorical and nominal too: the phone numbers have no meaningful order; they might as well be letters. A bigger phone number does not mean that it was given by a better person.

- The gender of the people giving the bands their phone numbers.

- This variable is categorical: the people dishing out their phone numbers could fall into one of several categories based on how they self-identify when asked about their gender (their gender identity could be fluid). Taking a very simplistic view of gender, the variable might contain categories of male, female, and non-binary.

- The instruments played by the band members.

- This variable is categorical and nominal too: the instruments have no meaningful order but their names tell us something useful (guitar, bass, drums, etc.).

- The time they had spent learning to play their instruments.

- This is a continuous and ratio variable. The amount of time could be split into infinitely small divisions (nanoseconds even) and there is a meaningful true zero (no time spent learning your instrument means that, like 911, you can’t play at all).

Task 1.4

Say I own 857 CDs. My friend has written a computer program that uses a webcam to scan my shelves in my house where I keep my CDs and measure how many I have. His program says that I have 863 CDs. Define measurement error. What is the measurement error in my friend’s CD counting device?

Measurement error is the difference between the true value of something and the numbers used to represent that value. In this trivial example, the measurement error is 6 CDs. In this example we know the true value of what we’re measuring; usually we don’t have this information, so we have to estimate this error rather than knowing its actual value.
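As a quick check of the arithmetic, here is the error written out, taking the program’s count minus the true count (that sign convention is an assumption; the answer above reports only the size of the error):

\[ \begin{aligned} \text{measurement error} &= \text{measured value} - \text{true value} \\ &= 863 - 857 \\ &= 6 \text{ CDs} \end{aligned} \]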

Task 1.5

Sketch the shape of a normal distribution, a positively skewed distribution and a negatively skewed distribution.

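[Figure: three sketched distributions, one per panel: Normal, Positive skew, Negative skew.]

A normal distribution is a symmetrical bell shape. A positively skewed distribution has its scores clustered at the lower end with the tail pointing towards the higher values. A negatively skewed distribution has its scores clustered at the higher end with the tail pointing towards the lower values.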

Task 1.6

In 2011 I got married and we went to Disney Florida for our honeymoon. We bought some bride and groom Mickey Mouse hats and wore them around the parks. The staff at Disney are really nice and upon seeing our hats would say ‘congratulations’ to us. We counted how many times people said congratulations over 7 days of the honeymoon: 5, 13, 7, 14, 11, 9, 17. Calculate the mean, median, sum of squares, variance and standard deviation of these data.

First compute the mean:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{5+13+7+14+11+9+17}{7} \\ &= \frac{76}{7} \\ &= 10.86 \end{aligned} \]

To calculate the median, first let’s arrange the scores in ascending order: 5, 7, 9, 11, 13, 14, 17. The median will be the (n + 1)/2th score. There are 7 scores, so this will be the 8/2 = 4th. The 4th score in our ordered list is 11.

To calculate the sum of squares, first subtract the mean from each score, then square this difference, and finally add up these squared values:

| Score | Error (score - mean) | Error squared |
|---|---|---|
| 5 | -5.86 | 34.34 |
| 13 | 2.14 | 4.58 |
| 7 | -3.86 | 14.90 |
| 14 | 3.14 | 9.86 |
| 11 | 0.14 | 0.02 |
| 9 | -1.86 | 3.46 |
| 17 | 6.14 | 37.70 |
| Total | | 104.86 |

So, the sum of squared errors is (Table 1):

\[ \begin{aligned} \text{SS} &= 34.34 + 4.58 + 14.90 + 9.86 + 0.02 + 3.46 + 37.70 \\ &= 104.86 \\ \end{aligned} \]

The variance is the sum of squared errors divided by the degrees of freedom:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{104.86}{6} \\ &= 17.48 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{17.48} \\ &= 4.18 \end{aligned} \]
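If you want to check the hand calculations, the same steps can be sketched in base R. This is only a minimal sketch: the object names (congrats, mean_x and so on) are made up for illustration, and picking the (n + 1)/2th ordered score for the median works here only because the number of scores is odd.

```r
# Number of times people said 'congratulations' on each of the 7 days
congrats <- c(5, 13, 7, 14, 11, 9, 17)

n        <- length(congrats)
mean_x   <- sum(congrats) / n              # sum of scores divided by n
median_x <- sort(congrats)[(n + 1) / 2]    # (n + 1)/2th ordered score (n is odd)
errors   <- congrats - mean_x              # score minus mean
ss       <- sum(errors^2)                  # sum of squared errors
variance <- ss / (n - 1)                   # SS divided by the degrees of freedom
std_dev  <- sqrt(variance)                 # square root of the variance

round(c(mean = mean_x, median = median_x, ss = ss,
        variance = variance, sd = std_dev), 2)
```

R carries full precision through every step, whereas the table rounds each error to two decimal places, so tiny differences in the final decimal place are possible in general.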

Task 1.7

In this chapter we used an example of the time taken for 21 heavy smokers to fall off a treadmill at the fastest setting (18, 16, 18, 24, 23, 22, 22, 23, 26, 29, 32, 34, 34, 36, 36, 43, 42, 49, 46, 46, 57). Calculate the sums of squares, variance and standard deviation of these data.

To calculate the sum of squares, subtract the mean from each value, then square this difference. Finally, add up these squared values (the values in the final column). The sum of squared errors is a massive 2685.24 (Table 2).

| Score | Mean | Difference | Difference squared |
|---|---|---|---|
| 18 | 32.19 | -14.19 | 201.356 |
| 16 | 32.19 | -16.19 | 262.116 |
| 18 | 32.19 | -14.19 | 201.356 |
| 24 | 32.19 | -8.19 | 67.076 |
| 23 | 32.19 | -9.19 | 84.456 |
| 22 | 32.19 | -10.19 | 103.836 |
| 22 | 32.19 | -10.19 | 103.836 |
| 23 | 32.19 | -9.19 | 84.456 |
| 26 | 32.19 | -6.19 | 38.316 |
| 29 | 32.19 | -3.19 | 10.176 |
| 32 | 32.19 | -0.19 | 0.036 |
| 34 | 32.19 | 1.81 | 3.276 |
| 34 | 32.19 | 1.81 | 3.276 |
| 36 | 32.19 | 3.81 | 14.516 |
| 36 | 32.19 | 3.81 | 14.516 |
| 43 | 32.19 | 10.81 | 116.856 |
| 42 | 32.19 | 9.81 | 96.236 |
| 49 | 32.19 | 16.81 | 282.576 |
| 46 | 32.19 | 13.81 | 190.716 |
| 46 | 32.19 | 13.81 | 190.716 |
| 57 | 32.19 | 24.81 | 615.536 |
| Total | | | 2685.236 |

The variance is the sum of squared errors divided by the degrees of freedom (\(N-1\)). There were 21 scores and so the degrees of freedom were 20. The variance is, therefore:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{2685.24}{20} \\ &= 134.26 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{134.26} \\ &= 11.59 \end{aligned} \]
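With 21 scores the table is tedious to build by hand, so here is a shortcut sketched in base R. It uses the built-in var() and sd() functions, which divide by N − 1 just as the equations above do, and then recovers the sum of squares by multiplying the variance by the degrees of freedom. The object names are assumptions chosen for illustration.

```r
# Time (seconds) for 21 heavy smokers to fall off the treadmill
treadmill <- c(18, 16, 18, 24, 23, 22, 22, 23, 26, 29, 32,
               34, 34, 36, 36, 43, 42, 49, 46, 46, 57)

variance <- var(treadmill)                       # SS / (N - 1)
std_dev  <- sd(treadmill)                        # square root of the variance
ss       <- variance * (length(treadmill) - 1)   # recover SS from the variance

round(c(ss = ss, variance = variance, sd = std_dev), 2)
```

Working backwards from the variance like this is a handy way to confirm the total in the final column of the table.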

Task 1.8

Sports scientists sometimes talk of a ‘red zone’, which is a period during which players in a team are more likely to pick up injuries because they are fatigued. When a player hits the red zone it is a good idea to rest them for a game or two. At a prominent London football club that I support, they measured how many consecutive games the 11 first team players could manage before hitting the red zone: 10, 16, 8, 9, 6, 8, 9, 11, 12, 19, 5. Calculate the mean, standard deviation, median, range and interquartile range.

First we need to compute the mean:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{10+16+8+9+6+8+9+11+12+19+5}{11} \\ &= \frac{113}{11} \\ &= 10.27 \end{aligned} \]

Then the standard deviation, which we do as follows:

| Score | Error (score - mean) | Error squared |
|---|---|---|
| 10 | -0.27 | 0.07 |
| 16 | 5.73 | 32.83 |
| 8 | -2.27 | 5.15 |
| 9 | -1.27 | 1.61 |
| 6 | -4.27 | 18.23 |
| 8 | -2.27 | 5.15 |
| 9 | -1.27 | 1.61 |
| 11 | 0.73 | 0.53 |
| 12 | 1.73 | 2.99 |
| 19 | 8.73 | 76.21 |
| 5 | -5.27 | 27.77 |
| Total | | 172.15 |

So, the sum of squared errors is (Table 3):

\[ \begin{aligned} \text{SS} &= 0.07 + 32.83 + 5.15 + 1.61 + 18.23 + 5.15 + 1.61 + 0.53 + 2.99 + 76.21 + 27.77 \\ &= 172.15 \\ \end{aligned} \]

The variance is the sum of squared errors divided by the degrees of freedom:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{172.15}{10} \\ &= 17.22 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{17.22} \\ &= 4.15 \end{aligned} \]

- To calculate the median, range and interquartile range, first let’s arrange the scores in ascending order: 5, 6, 8, 8, 9, 9, 10, 11, 12, 16, 19.

- The median: The median will be the (\(n + 1\))/2th score. There are 11 scores, so this will be the 12/2 = 6th. The 6th score in our ordered list is 9 games. Therefore, the median number of games is 9.

- The lower quartile: This is the median of the lower half of scores. If we split the data at 9 (the 6th score), there are 5 scores below this value. The median of 5 scores is the (5 + 1)/2 = 3rd score. The 3rd score is 8, so the lower quartile is 8 games.

- The upper quartile: This is the median of the upper half of scores. If we split the data at 9 again (not including this score), there are 5 scores above this value. The median of 5 scores is the (5 + 1)/2 = 3rd score above the median. The 3rd score above the median is 12, so the upper quartile is 12 games.

- The range: This is the highest score (19) minus the lowest (5), i.e. 14 games.

- The interquartile range: This is the difference between the upper and lower quartile: 12−8 = 4 games.
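The quartile method in this answer (take the median of each half, leaving the overall median out of both halves) can be sketched in base R as below. The helper half_quartiles() is hypothetical, written only for this illustration. Be aware that R’s built-in quantile() and IQR() functions interpolate between scores by default, so they can return slightly different quartiles from the hand method.

```r
# Consecutive games before each of the 11 players hit the red zone
games <- c(10, 16, 8, 9, 6, 8, 9, 11, 12, 19, 5)

# Hypothetical helper: quartiles as the medians of the lower and upper halves,
# excluding the overall median from both halves when n is odd
half_quartiles <- function(x) {
  x    <- sort(x)
  n    <- length(x)
  half <- floor(n / 2)
  c(lower = median(x[seq_len(half)]),
    upper = median(x[(n - half + 1):n]))
}

q <- half_quartiles(games)

median(games)                     # the (n + 1)/2th ordered score
diff(range(games))                # highest score minus lowest score
unname(q["upper"] - q["lower"])   # interquartile range
```

If your software reports a different interquartile range from the one you worked out by hand, the quartile definition is the first thing to check.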

Task 1.9

Celebrities always seem to be getting divorced. The (approximate) length of some celebrity marriages in days are: 240 (J-Lo and Cris Judd), 144 (Charlie Sheen and Donna Peele), 143 (Pamela Anderson and Kid Rock), 72 (Kim Kardashian, if you can call her a celebrity), 30 (Drew Barrymore and Jeremy Thomas), 26 (Axl Rose and Erin Everly), 2 (Britney Spears and Jason Alexander), 150 (Drew Barrymore again, but this time with Tom Green), 14 (Eddie Murphy and Tracy Edmonds), 150 (Renee Zellweger and Kenny Chesney), 1657 (Jennifer Aniston and Brad Pitt). Compute the mean, median, standard deviation, range and interquartile range for these lengths of celebrity marriages.

First we need to compute the mean:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{240+144+143+72+30+26+2+150+14+150+1657}{11} \\ &= \frac{2628}{11} \\ &= 238.91 \end{aligned} \]

Then the standard deviation, which we do as follows:

| Score | Error (score - mean) | Error squared |
|---|---|---|
| 240 | 1.09 | 1.19 |
| 144 | -94.91 | 9007.91 |
| 143 | -95.91 | 9198.73 |
| 72 | -166.91 | 27858.95 |
| 30 | -208.91 | 43643.39 |
| 26 | -212.91 | 45330.67 |
| 2 | -236.91 | 56126.35 |
| 150 | -88.91 | 7904.99 |
| 14 | -224.91 | 50584.51 |
| 150 | -88.91 | 7904.99 |
| 1657 | 1418.09 | 2010979.25 |
| Total | | 2268541 |

So, the sum of squared errors is the sum of the final column (Table 4). The variance is the sum of squared errors divided by the degrees of freedom:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{2268541}{10} \\ &= 226854.1 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{226854.1} \\ &= 476.29 \end{aligned} \]

- To calculate the median, range and interquartile range, first let’s arrange the scores in ascending order: 2, 14, 26, 30, 72, 143, 144, 150, 150, 240, 1657.

- The median: The median will be the (n + 1)/2th score. There are 11 scores, so this will be the 12/2 = 6th. The 6th score in our ordered list is 143. The median length of these celebrity marriages is therefore 143 days.

- The lower quartile: This is the median of the lower half of scores. If we split the data at 143 (the 6th score), there are 5 scores below this value. The median of 5 scores is the (5 + 1)/2 = 3rd score. The 3rd score is 26, so the lower quartile is 26 days.

- The upper quartile: This is the median of the upper half of scores. If we split the data at 143 again (not including this score), there are 5 scores above this value. The median of 5 scores is the (5 + 1)/2 = 3rd score above the median. The 3rd score above the median is 150, so the upper quartile is 150 days.

- The range: This is the highest score (1657) minus the lowest (2), i.e. 1655 days.

- The interquartile range: This is the difference between the upper and lower quartile: 150−26 = 124 days.

Task 1.10

Repeat Task 9 but excluding Jennifer Aniston and Brad Pitt’s marriage. How does this affect the mean, median, range, interquartile range, and standard deviation? What do the differences in values between Tasks 9 and 10 tell us about the influence of unusual scores on these measures?

First let’s compute the new mean:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{240+144+143+72+30+26+2+150+14+150}{10} \\ &= \frac{971}{10} \\ &= 97.1 \end{aligned} \]

The mean length of celebrity marriages is now 97.1 days compared to 238.91 days when Jennifer Aniston and Brad Pitt’s marriage was included. This demonstrates that the mean is greatly influenced by extreme scores.

Let’s now calculate the standard deviation excluding Jennifer Aniston and Brad Pitt’s marriage (Table 5):

| Score | Error (score - mean) | Error squared |
|---|---|---|
| 240 | 142.9 | 20420.41 |
| 144 | 46.9 | 2199.61 |
| 143 | 45.9 | 2106.81 |
| 72 | -25.1 | 630.01 |
| 30 | -67.1 | 4502.41 |
| 26 | -71.1 | 5055.21 |
| 2 | -95.1 | 9044.01 |
| 150 | 52.9 | 2798.41 |
| 14 | -83.1 | 6905.61 |
| 150 | 52.9 | 2798.41 |
| Total | | 56460.9 |

So, the sum of squared errors is:

\[ \begin{aligned} \text{SS} &= 20420.41 + 2199.61 + 2106.81 + 630.01 + 4502.41 + 5055.21 + 9044.01 + 2798.41 + 6905.61 + 2798.41 \\ &= 56460.90 \\ \end{aligned} \]

The variance is the sum of squared errors divided by the degrees of freedom:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{56460.90}{9} \\ &= 6273.43 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{6273.43} \\ &= 79.21 \end{aligned} \]

From these calculations we can see that the variance and standard deviation, like the mean, are both greatly influenced by extreme scores. When Jennifer Aniston and Brad Pitt’s marriage was included in the calculations (see Smart Alex Task 9), the variance and standard deviation were much larger, i.e. 226854.09 and 476.29 respectively.

- To calculate the median, range and interquartile range, first, let’s again arrange the scores in ascending order but this time excluding Jennifer Aniston and Brad Pitt’s marriage: 2, 14, 26, 30, 72, 143, 144, 150, 150, 240.

- The median: The median will be the (n + 1)/2th score. There are now 10 scores, so this will be the 11/2 = 5.5th. Therefore, we take the average of the 5th score and the 6th score. The 5th score is 72, and the 6th is 143; the median is therefore (72 + 143)/2 = 107.5 days.

- The lower quartile: This is the median of the lower half of scores. If we split the data at 107.5 (this score is not in the data set), there are 5 scores below this value. The median of 5 scores is the (5 + 1)/2 = 3rd score. The 3rd score is 26; the lower quartile is therefore 26 days.

- The upper quartile: This is the median of the upper half of scores. If we split the data at 107.5 (this score is not actually present in the data set), there are 5 scores above this value. The median of 5 scores is the (5 + 1)/2 = 3rd score above the median. The 3rd score above the median is 150; the upper quartile is therefore 150 days.

- The range: This is the highest score (240) minus the lowest (2), i.e. 238 days. You’ll notice that without the extreme score the range drops dramatically from 1655 to 238, which is roughly a seventh of the size.

- The interquartile range: This is the difference between the upper and lower quartile: 150 − 26 = 124 days of marriage. This is the same as the value we got when Jennifer Aniston and Brad Pitt’s marriage was included. This demonstrates the advantage of the interquartile range over the range, i.e. it isn’t affected by extreme scores at either end of the distribution.
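To see the influence of the extreme score at a glance, the two sets of summaries can be put side by side. The sketch below is in base R; half_iqr() and summarise_scores() are hypothetical helpers written for this illustration, and half_iqr() uses the same median-of-each-half definition of the quartiles as the hand calculations (R’s own IQR() interpolates and can differ slightly).

```r
# Lengths (days) of the 11 celebrity marriages from Task 9
marriages  <- c(240, 144, 143, 72, 30, 26, 2, 150, 14, 150, 1657)
no_outlier <- marriages[marriages != 1657]   # drop the 1657-day marriage

# Hypothetical helper: IQR from the medians of the lower and upper halves
half_iqr <- function(x) {
  x    <- sort(x)
  n    <- length(x)
  half <- floor(n / 2)
  median(x[(n - half + 1):n]) - median(x[seq_len(half)])
}

# Hypothetical helper: the five summaries asked for in Tasks 9 and 10
summarise_scores <- function(x) {
  c(mean = mean(x), median = median(x), sd = sd(x),
    range = diff(range(x)), iqr = half_iqr(x))
}

round(rbind(with_outlier    = summarise_scores(marriages),
            without_outlier = summarise_scores(no_outlier)), 2)
```

Reading down the columns, the mean, standard deviation and range change a lot when the extreme score is removed, the median changes less, and the interquartile range does not change at all.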

Chapter 2

Task 2.1

Why do we use samples?

We are usually interested in populations, but because we cannot collect data from every human being (or whatever) in the population, we collect data from a small subset of the population (known as a sample) and use these data to infer things about the population as a whole.

Task 2.2

What is the mean and how do we tell if it’s representative of our data?

The mean is a simple statistical model of the centre of a distribution of scores: a hypothetical estimate of the ‘typical’ score. We use the variance, or standard deviation, to tell us whether it is representative of our data. The standard deviation is a measure of how much error there is associated with the mean: a small standard deviation indicates that the mean is a good representation of our data.

Task 2.3

What’s the difference between the standard deviation and the standard error?

The standard deviation tells us how much observations in our sample differ from the mean value within our sample. The standard error tells us not about how the sample mean represents the sample itself, but how well the sample mean represents the population mean. The standard error is the standard deviation of the sampling distribution of a statistic. For a given statistic (e.g. the mean) it tells us how much variability there is in this statistic across samples from the same population. Large values, therefore, indicate that a statistic from a given sample may not be an accurate reflection of the population from which the sample came.

Task 2.4

In Chapter 1 we used an example of the time in seconds taken for 21 heavy smokers to fall off a treadmill at the fastest setting (18, 16, 18, 24, 23, 22, 22, 23, 26, 29, 32, 34, 34, 36, 36, 43, 42, 49, 46, 46, 57). Calculate standard error and 95% confidence interval for these data.

If you did the tasks in Chapter 1, you’ll know that the mean is 32.19 seconds:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{16+(2\times18)+(2\times22)+(2\times23)+24+26+29+32+(2\times34)+(2\times36)+42+43+(2\times46)+49+57}{21} \\ &= \frac{676}{21} \\ &= 32.19 \end{aligned} \]

We also worked out that the sum of squared errors was 2685.24; the variance was 2685.24/20 = 134.26; the standard deviation is the square root of the variance, so was \(\sqrt{134.26}\) = 11.59. The standard error will be:

\[ SE = \frac{s}{\sqrt{N}} = \frac{11.59}{\sqrt{21}} = 2.53 \]

The sample is small, so to calculate the confidence interval we need to find the appropriate value of t. First we need to calculate the degrees of freedom, \(N - 1\). With 21 data points, the degrees of freedom are 20. For a 95% confidence interval we can look up the value in the column labelled ‘Two-Tailed Test’, ‘0.05’ in the table of critical values of the t-distribution (Appendix). The corresponding value is 2.09. The confidence interval is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(2.09 \times SE) \\ &= 32.19 - (2.09 \times 2.53) \\ &= 26.90 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(2.09 \times SE) \\ &= 32.19 + (2.09 \times 2.53) \\ &= 37.48 \end{aligned} \]
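The same interval can be sketched in base R, using qt() to get the critical value of t instead of looking it up in the Appendix. The object names are assumptions for illustration. Because R keeps full precision rather than rounding the standard deviation, standard error and t to two decimal places, expect the boundaries to differ from the hand calculation in the second decimal place.

```r
# Time (seconds) for 21 heavy smokers to fall off the treadmill
treadmill <- c(18, 16, 18, 24, 23, 22, 22, 23, 26, 29, 32,
               34, 34, 36, 36, 43, 42, 49, 46, 46, 57)

n      <- length(treadmill)
se     <- sd(treadmill) / sqrt(n)     # standard error of the mean
t_crit <- qt(0.975, df = n - 1)       # two-tailed 95% critical value, df = 20
ci     <- mean(treadmill) + c(-1, 1) * t_crit * se

round(c(se = se, t = t_crit, lower = ci[1], upper = ci[2]), 2)
```

Using 0.975 in qt() leaves 2.5% in each tail, which is what the ‘Two-Tailed Test’, ‘0.05’ column of the table represents.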

Task 2.5

What do the sum of squares, variance and standard deviation represent? How do they differ?

All of these measures tell us something about how well the mean fits the observed sample data. Large values (relative to the scale of measurement) suggest the mean is a poor fit of the observed scores, and small values suggest a good fit. They are also, therefore, measures of dispersion, with large values indicating a spread-out distribution of scores and small values showing a more tightly packed distribution. These measures all represent the same thing, but differ in how they express it. The sum of squared errors is a ‘total’ and is, therefore, affected by the number of data points. The variance is the ‘average’ variability but in units squared. The standard deviation is the average variation but converted back to the original units of measurement. As such, the size of the standard deviation can be compared to the mean (because they are in the same units of measurement).
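In symbols, using the same notation as the worked answers in Chapter 1, each measure is built from the one before it. The numbers on the right are the treadmill values from Task 1.7, included only as a reminder of how the scale changes at each step:

\[ \begin{aligned} \text{SS} &= \sum_{i=1}^{N} (x_i - \overline{X})^2 & &= 2685.24 \\ s^2 &= \frac{\text{SS}}{N - 1} & &= 134.26 \\ s &= \sqrt{s^2} & &= 11.59 \end{aligned} \]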

Task 2.6

What is a test statistic and what does it tell us?

A test statistic is a statistic for which we know how frequently different values occur. The observed value of such a statistic is typically used to test hypotheses, or to establish whether a model is a reasonable representation of what’s happening in the population.

Task 2.7

What are Type I and Type II errors?

A Type I error occurs when we believe that there is a genuine effect in our population, when in fact there isn’t. A Type II error occurs when we believe that there is no effect in the population when, in reality, there is.

Task 2.8

What is statistical power?

Power is the probability that a test will detect an effect of a particular size, assuming that the effect genuinely exists in the population (a value of 0.8 is a good level to aim for).
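To get a feel for what power depends on, here is a minimal sketch using power.t.test() from R’s stats package. The numbers are borrowed from Task 2.9 (means differing by 2, standard deviation of 3), and the choice of an independent two-group, two-tailed t-test with alpha = 0.05 is an assumption made purely for illustration.

```r
# Power to detect a difference of 2 (sd = 3) with 10 or 100 people per group
power_small <- power.t.test(n = 10,  delta = 2, sd = 3, sig.level = 0.05)$power
power_large <- power.t.test(n = 100, delta = 2, sd = 3, sig.level = 0.05)$power

# Leave n out and supply the target power to get the sample size per group
n_needed <- power.t.test(delta = 2, sd = 3, sig.level = 0.05, power = 0.8)$n

c(power_small = power_small, power_large = power_large, n_needed = n_needed)
```

The effect is identical in both scenarios; only the sample size changes, and power changes with it.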

Task 2.9

Figure 2.21 (in the book) shows two experiments that looked at the effect of singing versus conversation on how much time a woman would spend with a man. In both experiments the means were 10 (singing) and 12 (conversation), the standard deviations in all groups were 3, but the group sizes were 10 per group in the first experiment and 100 per group in the second. Compute the values of the confidence intervals displayed in the Figure.

Experiment 1

In both groups, because they have a standard deviation of 3 and a sample size of 10, the standard error will be:

\[ SE = \frac{s}{\sqrt{N}} = \frac{3}{\sqrt{10}} = 0.95 \]

The sample is small, so to calculate the confidence interval we need to find the appropriate value of t. First we need to calculate the degrees of freedom, \(N - 1\). With 10 data points, the degrees of freedom are 9. For a 95% confidence interval we can look up the value in the column labelled ‘Two-Tailed Test’, ‘0.05’ in the table of critical values of the t-distribution (Appendix). The corresponding value is 2.26. The confidence interval for the singing group is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(2.26 \times SE) \\ &= 10 - (2.26 \times 0.95) \\ &= 7.85 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(2.26 \times SE) \\ &= 10 + (2.26 \times 0.95) \\ &= 12.15 \end{aligned} \]

For the conversation group:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(2.26 \times SE) \\ &= 12 - (2.26 \times 0.95) \\ &= 9.85 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(2.26 \times SE) \\ &= 12 + (2.26 \times 0.95) \\ &= 14.15 \end{aligned} \]

Experiment 2

In both groups, because they have a standard deviation of 3 and a sample size of 100, the standard error will be:

\[ SE = \frac{s}{\sqrt{N}} = \frac{3}{\sqrt{100}} = 0.3 \]

The sample is large, so to calculate the confidence interval we need to find the appropriate value of z. For a 95% confidence interval we should look up the value of 0.025 in the column labelled Smaller Portion in the table of the standard normal distribution (Appendix). The corresponding value is 1.96. The confidence interval for the singing group is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(1.96 \times SE) \\ &= 10 - (1.96 \times 0.3) \\ &= 9.41 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(1.96 \times SE) \\ &= 10 + (1.96 \times 0.3) \\ &= 10.59 \end{aligned} \]

For the conversation group:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(1.96 \times SE) \\ &= 12 - (1.96 \times 0.3) \\ &= 11.41 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(1.96 \times SE) \\ &= 12 + (1.96 \times 0.3) \\ &= 12.59 \end{aligned} \]
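Because both experiments give only summary statistics, the four intervals can be computed with one small function. The sketch below is in base R; ci_from_summary() and its use_t argument are hypothetical, written to mirror the choice made in this answer of t for the small samples and z for the large ones.

```r
# Hypothetical helper: confidence interval for a mean from summary statistics.
# use_t = TRUE uses the t-distribution (df = n - 1), otherwise the normal.
ci_from_summary <- function(mean, sd, n, use_t, level = 0.95) {
  se   <- sd / sqrt(n)                   # standard error of the mean
  p    <- 1 - (1 - level) / 2            # 0.975 for a 95% interval
  crit <- if (use_t) qt(p, df = n - 1) else qnorm(p)
  c(lower = mean - crit * se, upper = mean + crit * se)
}

# Experiment 1: 10 per group, so use t
exp1_singing      <- ci_from_summary(10, 3, 10, use_t = TRUE)
exp1_conversation <- ci_from_summary(12, 3, 10, use_t = TRUE)

# Experiment 2: 100 per group, so use z
exp2_singing      <- ci_from_summary(10, 3, 100, use_t = FALSE)
exp2_conversation <- ci_from_summary(12, 3, 100, use_t = FALSE)

round(rbind(exp1_singing, exp1_conversation,
            exp2_singing, exp2_conversation), 2)
```

The means and standard deviations are the same in both experiments, so the narrower intervals in Experiment 2 come entirely from the larger sample size shrinking the standard error.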

Task 2.10

Figure 2.22 shows a similar study to above, but the means were 10 (singing) and 10.01 (conversation), the standard deviations in both groups were 3, and each group contained 1 million people. Compute the values of the confidence intervals displayed in the figure.

In both groups, because they have a standard deviation of 3 and a sample size of 1,000,000, the standard error will be:

\[ SE = \frac{s}{\sqrt{N}} = \frac{3}{\sqrt{1000000}} = 0.003 \]

The sample is large, so to calculate the confidence interval we need to find the appropriate value of z. For a 95% confidence interval we should look up the value of 0.025 in the column labelled Smaller Portion in the table of the standard normal distribution (Appendix). The corresponding value is 1.96. The confidence interval for the singing group is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(1.96 \times SE) \\ &= 10 - (1.96 \times 0.003) \\ &= 9.99412 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(1.96 \times SE) \\ &= 10 + (1.96 \times 0.003) \\ &= 10.00588 \end{aligned} \]

For the conversation group:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(1.96 \times SE) \\ &= 10.01 - (1.96 \times 0.003) \\ &= 10.00412 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(1.96 \times SE) \\ &= 10.01 + (1.96 \times 0.003) \\ &= 10.01588 \end{aligned} \]

Note: these values will look slightly different from those in the plot because the exact means were 10.00147 and 10.01006, but we rounded off to 10 and 10.01 to make life a bit easier. If you use these exact values you’d get, for the singing group:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(1.96 \times SE) \\ &= 10.00147 - (1.96 \times 0.003) \\ &= 9.99559 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(1.96 \times SE) \\ &= 10.00147 + (1.96 \times 0.003) \\ &= 10.00735 \end{aligned} \]

For the conversation group:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(1.96 \times SE) \\ &= 10.01006 - (1.96 \times 0.003) \\ &= 10.00418 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(1.96 \times SE) \\ &= 10.01006 + (1.96 \times 0.003) \\ &= 10.01594 \end{aligned} \]

Task 2.11

In Chapter 1 (Task 8) we looked at an example of how many games it took a sportsperson before they hit the ‘red zone’. Calculate the standard error and confidence interval for those data.

We worked out in Chapter 1 that the mean was 10.27, the standard deviation 4.15, and there were 11 sportspeople in the sample. The standard error will be:

\[ SE = \frac{s}{\sqrt{N}} = \frac{4.15}{\sqrt{11}} = 1.25 \]

The sample is small, so to calculate the confidence interval we need to find the appropriate value of t. First we need to calculate the degrees of freedom, \(N - 1\). With 11 data points, the degrees of freedom are 10. For a 95% confidence interval we can look up the value in the column labelled ‘Two-Tailed Test’, ‘0.05’ in the table of critical values of the t-distribution (Appendix). The corresponding value is 2.23. The confidence interval is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(2.23 \times SE) \\ &= 10.27 - (2.23 \times 1.25) \\ &= 7.48 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(2.23 \times SE) \\ &= 10.27 + (2.23 \times 1.25) \\ &= 13.06 \end{aligned} \]

Task 2.12

At a rival club to the one I support, they similarly measured the number of consecutive games it took their players before they reached the red zone. The data are: 6, 17, 7, 3, 8, 9, 4, 13, 11, 14, 7. Calculate the mean, standard deviation, and confidence interval for these data.

First we need to compute the mean:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{6+17+7+3+8+9+4+13+11+14+7}{11} \\ &= \frac{99}{11} \\ &= 9.00 \end{aligned} \]

Then the standard deviation, which we do as follows:

| Score | Error (score - mean) | Error squared |
|---|---|---|
| 6 | -3 | 9 |
| 17 | 8 | 64 |
| 7 | -2 | 4 |
| 3 | -6 | 36 |
| 8 | -1 | 1 |
| 9 | 0 | 0 |
| 4 | -5 | 25 |
| 13 | 4 | 16 |
| 11 | 2 | 4 |
| 14 | 5 | 25 |
| 7 | -2 | 4 |
| Total | — | 188 |

The sum of squared errors is (Table 6):

\[ \begin{aligned} \text{SS} &= 9 + 64 + 4 + 36 + 1 + 0 + 25 + 16 + 4 + 25 + 4 \\ &= 188 \\ \end{aligned} \]

The variance is the sum of squared errors divided by the degrees of freedom:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{188}{10} \\ &= 18.8 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{18.8} \\ &= 4.34 \end{aligned} \]

There were 11 sportspeople in the sample, so the standard error will be: \[ SE = \frac{s}{\sqrt{N}} = \frac{4.34}{\sqrt{11}} = 1.31\]

The sample is small, so to calculate the confidence interval we need to find the appropriate value of t. First we need to calculate the degrees of freedom, \(N - 1\). With 11 data points, the degrees of freedom are 10. For a 95% confidence interval we can look up the value in the column labelled ‘Two-Tailed Test’, ‘0.05’ in the table of critical values of the t-distribution (Appendix). The corresponding value is 2.23. The confidence interval is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(2.23 \times SE) \\ &= 9 - (2.23 \times 1.31) \\ &= 6.08 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(2.23 \times SE) \\ &= 9 + (2.23 \times 1.31) \\ &= 11.92 \end{aligned} \]
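The steps in this task can also be sketched in R. The code below is an illustration with object names of my choosing, not the book's own solution. It builds the sum of squares by hand so that each step matches the working above. R carries full precision for the standard error and critical value, so expect the limits to differ from the hand-calculated ones by about 0.01.

```r
games <- c(6, 17, 7, 3, 8, 9, 4, 13, 11, 14, 7)

n     <- length(games)
x_bar <- mean(games)              # mean
ss    <- sum((games - x_bar)^2)   # sum of squared errors
s     <- sqrt(ss / (n - 1))       # standard deviation (same value as sd(games))
se    <- s / sqrt(n)              # standard error

t_crit <- qt(0.975, df = n - 1)   # critical t with 10 degrees of freedom
ci <- c(lower = x_bar - t_crit * se, upper = x_bar + t_crit * se)

round(c(mean = x_bar, ss = ss, sd = s, se = se), 2)
round(ci, 2)
```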

Task 2.13

In Chapter 1 (Task 9) we looked at the length in days of 11 celebrity marriages. Here are the approximate lengths in months of nine marriages, one being mine and the others being those of some of my friends and family. In all but two cases the lengths are calculated up to the day I’m writing this, which is 20 June 2023, but the 3- and 111-month durations are marriages that have ended – neither of these is mine, in case you’re wondering: 3, 144, 267, 182, 159, 152, 693, 50, and 111. Calculate the mean, standard deviation and confidence interval for these data.

First we need to compute the mean:

\[ \begin{aligned} \overline{X} &= \frac{\sum_{i=1}^{n} x_i}{n} \\ &= \frac{3 + 144 + 267 + 182 + 159 + 152 + 693 + 50 + 111}{9} \\ &= \frac{1761}{9} \\ &= 195.67 \end{aligned} \]

Compute the standard deviation as follows:

| Score | Error (score - mean) | Error squared |
|---|---|---|
| 3 | -192.67 | 37121.73 |
| 144 | -51.67 | 2669.79 |
| 267 | 71.33 | 5087.97 |
| 182 | -13.67 | 186.87 |
| 159 | -36.67 | 1344.69 |
| 152 | -43.67 | 1907.07 |
| 693 | 497.33 | 247337.13 |
| 50 | -145.67 | 21219.75 |
| 111 | -84.67 | 7169.01 |
| Total | — | 324044 |

The sum of squared errors is (Table 7):

\[ \begin{aligned} \text{SS} &= 37121.73 + 2669.79 + 5087.97 + 186.87 + 1344.69 + 1907.07 + 247337.13 + 21219.75 + 7169.01 \\ &= 324044 \\ \end{aligned} \]

The variance is the sum of squared errors divided by the degrees of freedom:

\[ \begin{aligned} s^2 &= \frac{SS}{N - 1} \\ &= \frac{324044}{8} \\ &= 40505.5 \end{aligned} \]

The standard deviation is the square root of the variance:

\[ \begin{aligned} s &= \sqrt{s^2} \\ &= \sqrt{40505.5} \\ &= 201.2598 \end{aligned} \]

The standard error is:

\[ \begin{aligned} SE &= \frac{s}{\sqrt{N}} \\ &= \frac{201.2598}{\sqrt{9}} \\ &= 67.0866 \end{aligned} \]

The sample is small, so to calculate the confidence interval we need to find the appropriate value of t. First we need to calculate the degrees of freedom, \(N − 1\). With 9 data points, the degrees of freedom are 8. For a 95% confidence interval we can look up the value in the column labelled ‘Two-Tailed Test’, ‘0.05’ in the table of critical values of the t-distribution (Appendix). The corresponding value is 2.31. The confidence interval is, therefore, given by:

\[ \begin{aligned} \text{95% CI}_\text{lower boundary} &= \overline{X}-(2.31 \times SE) \\ &= 195.67 - (2.31 \times 67.0866) \\ &= 40.70 \\ \text{95% CI}_\text{upper boundary} &= \overline{X}+(2.31 \times SE) \\ &= 195.67 + (2.31 \times 67.0866) \\ &= 350.64 \end{aligned} \]
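As a quick cross-check, base R can produce the same quantities directly. This is a sketch using t.test(), which is my choice of shortcut rather than the method used above; the object names are also my own. It uses the unrounded critical value of t, so its interval should come out slightly narrower than the one calculated by hand with 2.31.

```r
marriages <- c(3, 144, 267, 182, 159, 152, 693, 50, 111)

mean(marriages)   # mean length in months
sd(marriages)     # standard deviation

# The conf.int element of a one-sample t-test holds the 95% interval for the mean
marriage_ci <- t.test(marriages, conf.level = 0.95)$conf.int
marriage_ci
```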

Chapter 3

Task 3.1

What is an effect size and how is it measured?

An effect size is an objective and standardized measure of the magnitude of an observed effect. Measures include Cohen’s d, the odds ratio and Pearson’s correlation coefficient, r. Cohen’s d, for example, is the difference between two means divided by either the standard deviation of the control group, or by a pooled standard deviation.

Task 3.2

In Chapter 1 (Task 8) we looked at an example of how many games it took a sportsperson before they hit the ‘red zone’, then in Chapter 2 we looked at data from a rival club. Compute and interpret Cohen’s \(\hat{d}\) for the difference in the mean number of games it took players to become fatigued in the two teams mentioned in those tasks.

Cohen’s d is defined as:

\[ \hat{d} = \frac{\bar{X_1}-\bar{X_2}}{s} \]

There isn’t an obvious control group, so let’s use a pooled estimate of the standard deviation:

\[ \begin{aligned} s_p &= \sqrt{\frac{(N_1-1) s_1^2+(N_2-1) s_2^2}{N_1+N_2-2}} \\ &= \sqrt{\frac{(11-1)4.15^2+(11-1)4.34^2}{11+11-2}} \\ &= \sqrt{\frac{360.23}{20}} \\ &= 4.24 \end{aligned} \]

Therefore, Cohen’s \(\hat{d}\) is:

\[ \hat{d} = \frac{10.27-9}{4.24} = 0.30 \]

Therefore, the second team fatigued in fewer matches than the first team by about 1/3 standard deviation. By the benchmarks that we probably shouldn’t use, this is a small to medium effect, but I guess if you’re managing a top-flight sports team, fatiguing 1/3 of a standard deviation faster than one of your opponents could make quite a substantial difference to your performance and team rotation over the season.
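The calculation above can be wrapped in a couple of small R functions. This is a sketch only: pooled_sd() and cohens_d() are hypothetical helpers I've written for illustration, not functions from a package. Fed the rounded standard deviations (4.15 and 4.34), the pooled value can come out a fraction different from the 4.24 above, but the effect size still rounds to 0.30.

```r
# Pooled standard deviation from two groups' sample sizes and standard deviations
pooled_sd <- function(n1, s1, n2, s2) {
  sqrt(((n1 - 1) * s1^2 + (n2 - 1) * s2^2) / (n1 + n2 - 2))
}

# Cohen's d-hat: difference between means divided by the pooled standard deviation
cohens_d <- function(m1, m2, n1, s1, n2, s2) {
  (m1 - m2) / pooled_sd(n1, s1, n2, s2)
}

d_games <- cohens_d(m1 = 10.27, m2 = 9, n1 = 11, s1 = 4.15, n2 = 11, s2 = 4.34)
round(d_games, 2)
```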

Task 3.3

Calculate and interpret Cohen’s \(\hat{d}\) for the difference in the mean duration of the celebrity marriages in Chapter 1 (Task 9) and me and my friend’s marriages (Chapter 2, Task 13).

Cohen’s \(\hat{d}\) is defined as:

\[ \hat{d} = \frac{\bar{X_1}-\bar{X_2}}{s} \]

There isn’t an obvious control group, so let’s use a pooled estimate of the standard deviation:

\[ \begin{aligned} s_p &= \sqrt{\frac{(N_1-1) s_1^2+(N_2-1) s_2^2}{N_1+N_2-2}} \\ &= \sqrt{\frac{(11-1)476.29^2+(9-1)8275.91^2}{11+9-2}} \\ &= \sqrt{\frac{550194093}{18}} \\ &= 5528.68 \end{aligned} \]

Therefore, Cohen’s d is: \[\hat{d} = \frac{5057-238.91}{5528.68} = 0.87\] Therefore, my friend’s marriages are 0.87 standard deviations longer than the sample of celebrities. By the benchmarks that we probably shouldn’t use, this is a large effect.

Task 3.4

What are the problems with null hypothesis significance testing?

- We can’t conclude that an effect is important because the p-value from which we determine significance is affected by sample size. Therefore, the word ‘significant’ is meaningless when referring to a p-value.

- The null hypothesis is never true. If the p-value is greater than .05 then we can decide to reject the alternative hypothesis, but this is not the same thing as the null hypothesis being true: a non-significant result tells us only that the effect is not big enough to be found, but it doesn’t tell us that the effect is zero.

- A significant result does not tell us that the null hypothesis is false (see text for details).

- It encourages all or nothing thinking: if p < 0.05 then an effect is significant, but if p > 0.05 it is not. So, a p = 0.0499 is significant but a p = 0.0501 is not, even though these ps differ by only 0.0002.

Task 3.5

What is the difference between a confidence interval and a credible interval?

A 95% confidence interval is set so that before the data are collected there is a long-run probability of 0.95 (or 95%) that the interval will contain the true value of the parameter. This means that in 100 random samples, the intervals will contain the true value in 95 of them but won’t in 5. Once the data are collected, your sample is either one of the 95% that produces an interval containing the true value, or one of the 5% that does not. In other words, having collected the data, the probability of the interval containing the true value of the parameter is either 0 (it does not contain it) or 1 (it does contain it), but you do not know which. A credible interval is different in that it reflects the plausible probability that the interval contains the true value. For example, a 95% credible interval has a plausible 0.95 probability of containing the true value.
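The long-run idea behind a confidence interval can be illustrated with a small simulation. This is a sketch under assumptions of my choosing (a normal population with a mean of 10 and standard deviation of 2, samples of 30 scores, and 2000 repetitions); none of these values come from the book. Each simulated sample either produces an interval that contains the true mean or one that doesn't, and the proportion that do should be close to 0.95.

```r
set.seed(42)         # arbitrary seed so the run is reproducible
true_mean <- 10      # hypothetical population mean
n_samples <- 2000    # number of simulated samples
n         <- 30      # scores per sample

# For each sample, record whether its 95% interval contains the true mean
covered <- replicate(n_samples, {
  x  <- rnorm(n, mean = true_mean, sd = 2)
  ci <- t.test(x)$conf.int
  ci[1] <= true_mean && true_mean <= ci[2]
})

mean(covered)        # proportion of intervals containing the true mean
```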

Task 3.6

What is a meta-analysis?

Meta-analysis is where effect sizes from different studies testing the same hypothesis are combined to get a better estimate of the size of the effect in the population.

Task 3.7

Describe what you understand by the term Bayes factor.

The Bayes factor is the ratio of the probability of the data given the alternative hypothesis to that of the data given the null hypothesis. A Bayes factor less than 1 supports the null hypothesis (it suggests the data are more likely given the null hypothesis than the alternative hypothesis); conversely, a Bayes factor greater than 1 suggests that the observed data are more likely given the alternative hypothesis than the null. Values between 1 and 3 are considered evidence for the alternative hypothesis that is ‘barely worth mentioning’, values between 3 and 10 are considered to indicate evidence for the alternative hypothesis that ‘has substance’, and values greater than 10 are strong evidence for the alternative hypothesis.

Task 3.8

Various studies have shown that students who use laptops in class often do worse on their modules (Payne-Carter et al., 2016). Table 3.3 (reproduced in Table 8) shows some fabricated data that mimics what has been found. What is the odds ratio for passing the exam if the student uses a laptop in class compared to if they don’t?

| | Laptop | No Laptop | Sum |
|---|---|---|---|
| Pass | 24 | 49 | 73 |
| Fail | 16 | 11 | 27 |
| Sum | 40 | 60 | 100 |

First we compute the odds of passing when a laptop is used in class:

\[ \begin{aligned} \text{Odds}_{\text{pass when laptop is used}} &= \frac{\text{Number of laptop users passing exam}}{\text{Number of laptop users failing exam}} \\ &= \frac{24}{16} \\ &= 1.5 \end{aligned} \]

Next we compute the odds of passing when a laptop is not used in class:

\[ \begin{aligned} \text{Odds}_{\text{pass when laptop is not used}} &= \frac{\text{Number of students without laptops passing exam}}{\text{Number of students without laptops failing exam}} \\ &= \frac{49}{11} \\ &= 4.45 \end{aligned} \]

The odds ratio is the ratio of the two odds that we have just computed:

\[ \begin{aligned} \text{Odds Ratio} &= \frac{\text{Odds}_{\text{pass when laptop is used}}}{\text{Odds}_{\text{pass when laptop is not used}}} \\ &= \frac{1.5}{4.45} \\ &= 0.34 \end{aligned} \]

The odds of passing when using a laptop are 0.34 times those when a laptop is not used. If we take the reciprocal of this, we could say that the odds of passing when not using a laptop are 2.97 times those when a laptop is used.
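The same odds and odds ratio can be computed from the table of counts in R. The following is a minimal sketch; the matrix and its dimension names are my own labels for the cells in Table 8, not objects from the discovr package.

```r
# 2 x 2 table of counts: rows are exam outcome, columns are laptop use
laptop_tab <- matrix(
  c(24, 49,
    16, 11),
  nrow = 2, byrow = TRUE,
  dimnames = list(outcome = c("pass", "fail"), group = c("laptop", "no_laptop"))
)

# Odds of passing within each group
odds_laptop    <- laptop_tab["pass", "laptop"] / laptop_tab["fail", "laptop"]
odds_no_laptop <- laptop_tab["pass", "no_laptop"] / laptop_tab["fail", "no_laptop"]

# Odds ratio and its reciprocal
odds_ratio <- odds_laptop / odds_no_laptop
round(odds_ratio, 2)
round(1 / odds_ratio, 2)
```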

Task 3.9

From the data in Table 3.1 (reproduced in Table 8) what is the conditional probability that someone used a laptop given that they passed the exam, p(laptop|pass)? What is the conditional probability that someone didn’t use a laptop in class given they passed the exam, p(no laptop|pass)?

The conditional probability that someone used a laptop given they passed the exam is 0.33, or a 33% chance:

\[ p(\text{laptop|pass})=\frac{p(\text{laptop ∩ pass})}{p(\text{pass})}=\frac{{24}/{100}}{{73}/{100}}=\frac{0.24}{0.73}=0.33 \]

The conditional probability that someone didn’t use a laptop in class given they passed the exam is 0.67 or a 67% chance.

\[ p(\text{no laptop|pass})=\frac{p(\text{no laptop ∩ pass})}{p(\text{pass})}=\frac{{49}/{100}}{{73}/{100}}=\frac{0.49}{0.73}=0.67 \]

Task 3.10

Using the data in Table 3.1 (reproduced in Table 8), what are the posterior odds of someone using a laptop in class (compared to not using one) given that they passed the exam?

The posterior odds are the ratio of the posterior probability for one hypothesis to another. In this example it would be the ratio of the probability that a student used a laptop given that they passed (which we have already calculated above to be 0.33) to the probability that they did not use a laptop in class given that they passed (which we have already calculated above to be 0.67). The value turns out to be 0.49, which means that the probability that someone used a laptop in class if they passed the exam is about half of the probability that someone didn’t use a laptop in class given that they passed the exam.

\[ \text{posterior odds}= \frac{p(\text{hypothesis 1|data})}{p(\text{hypothesis 2|data})} = \frac{p(\text{laptop|pass})}{p(\text{no laptop| pass})} = \frac{0.33}{0.67} = 0.49 \]
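The conditional probabilities from Task 3.9 and the posterior odds can be reproduced in a few lines of R. This is an illustrative sketch with object names of my choosing, working directly from the counts in Table 8. Using unrounded probabilities, the posterior odds reduce to 24/49, which agrees with the 0.49 above.

```r
n_total          <- 100   # all students
n_pass           <- 73    # students who passed
n_laptop_pass    <- 24    # laptop users who passed
n_no_laptop_pass <- 49    # non-users who passed

p_pass <- n_pass / n_total

# Conditional probabilities given that the student passed
p_laptop_given_pass    <- (n_laptop_pass / n_total) / p_pass
p_no_laptop_given_pass <- (n_no_laptop_pass / n_total) / p_pass

# Posterior odds of laptop use relative to no laptop use, given a pass
posterior_odds <- p_laptop_given_pass / p_no_laptop_given_pass

round(c(p_laptop_given_pass, p_no_laptop_given_pass, posterior_odds), 2)
```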

Chapter 4

Task 4.1

Smart Alex’s first task for this chapter is to set up an RStudio project (you should have done this already if you’ve been following the chapter) and within the main project folder create folders called ‘data’, and ‘quarto’. Then create a Quarto document and follow the self-tests in the chapter to create a document containing some code and text.

This is a general video on workflow (note that I name the quarto folder as r_docs in this tutorial, but otherwise it follows the book chapter):

You can download a complete version of this file (chapter_4_self_tests.qmd) and its rendered output (chapter_4_self_tests.html) from www.discovr.rocks/repository/chapter_4_self_tests.zip.

Task 4.2

Render the document in Task 1, then locate the rendered file within your project folder.

A general tutorial on rendering is here:

You can download a complete version of this file (chapter_4_self_tests.qmd) and its rendered output (chapter_4_self_tests.html) from www.discovr.rocks/repository/chapter_4_self_tests.zip.

Task 4.3

The data below show the score (out of 20) for 20 different students, some of whom are male and some female, and some of whom were taught using positive reinforcement (being nice) and others who were taught using punishment (electric shock). Enter these data into a tibble. (Hint: the data below are in messy format, you need to enter them in tidy format.)

We have three variables here: sex (was the person male or female), the method of teaching they underwent and their mark on an assignment. Therefore, the tidy format is to arrange the data in three columns. There are several ways to do this, here’s one of them:

The data can be found in discovr::teaching (this version has an extra variable with the participant id).

Task 4.4

Thinking back to Labcoat Leni’s Real Research 4.1, Oxoby also measured the minimum acceptable offer; these MAOs (in dollars) are below (again, they are approximations based on the graphs in the paper). Enter these data into a tibble.

- Bon Scott group: 2, 3, 3, 3, 3, 4, 4, 4, 4, 4, 4, 4, 4, 5, 5, 5, 5, 5

- Brian Johnson group: 0, 1, 2, 2, 3, 3, 3, 3, 3, 4, 4, 4, 4, 4, 4, 4, 4, 1

The full data can be found in discovr::acdc
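One way to enter the MAOs listed above is sketched below. The tibble name and the column names (singer and mao) are my own illustrative choices and may not match the variable names in discovr::acdc. There are 18 scores in each group, so rep() is used to build the grouping variable.

```r
library(tibble)

# Tidy format: one row per participant, one column per variable
acdc_mao_tib <- tibble(
  singer = c(rep("Bon Scott", 18), rep("Brian Johnson", 18)) |> factor(),
  mao = c(
    2, 3, 3, 3, 3, 4, 4, 4, 4, 4, 4, 4, 4, 5, 5, 5, 5, 5,   # Bon Scott group
    0, 1, 2, 2, 3, 3, 3, 3, 3, 4, 4, 4, 4, 4, 4, 4, 4, 1    # Brian Johnson group
  )
)

acdc_mao_tib
```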

Task 4.5

According to some highly unscientific research done by a UK department store chain and reported in Marie Claire magazine, shopping is good for you. They found that the average woman spends 150 minutes and walks 2.6 miles when she shops, burning off around 385 calories. In contrast, men spend only about 50 minutes shopping, covering 1.5 miles. This was based on strapping a pedometer on a mere 10 participants. Although I don’t have the actual data, some simulated data based on these means are below. Enter these data into a tibble (again, you need to think about making the data tidy format).

We have three variables here: sex (was the person male or female), the distance they walked and the time they spent shopping. Therefore, we arrange the data in three columns:

The data can be found in discovr::shopping.

Task 4.6

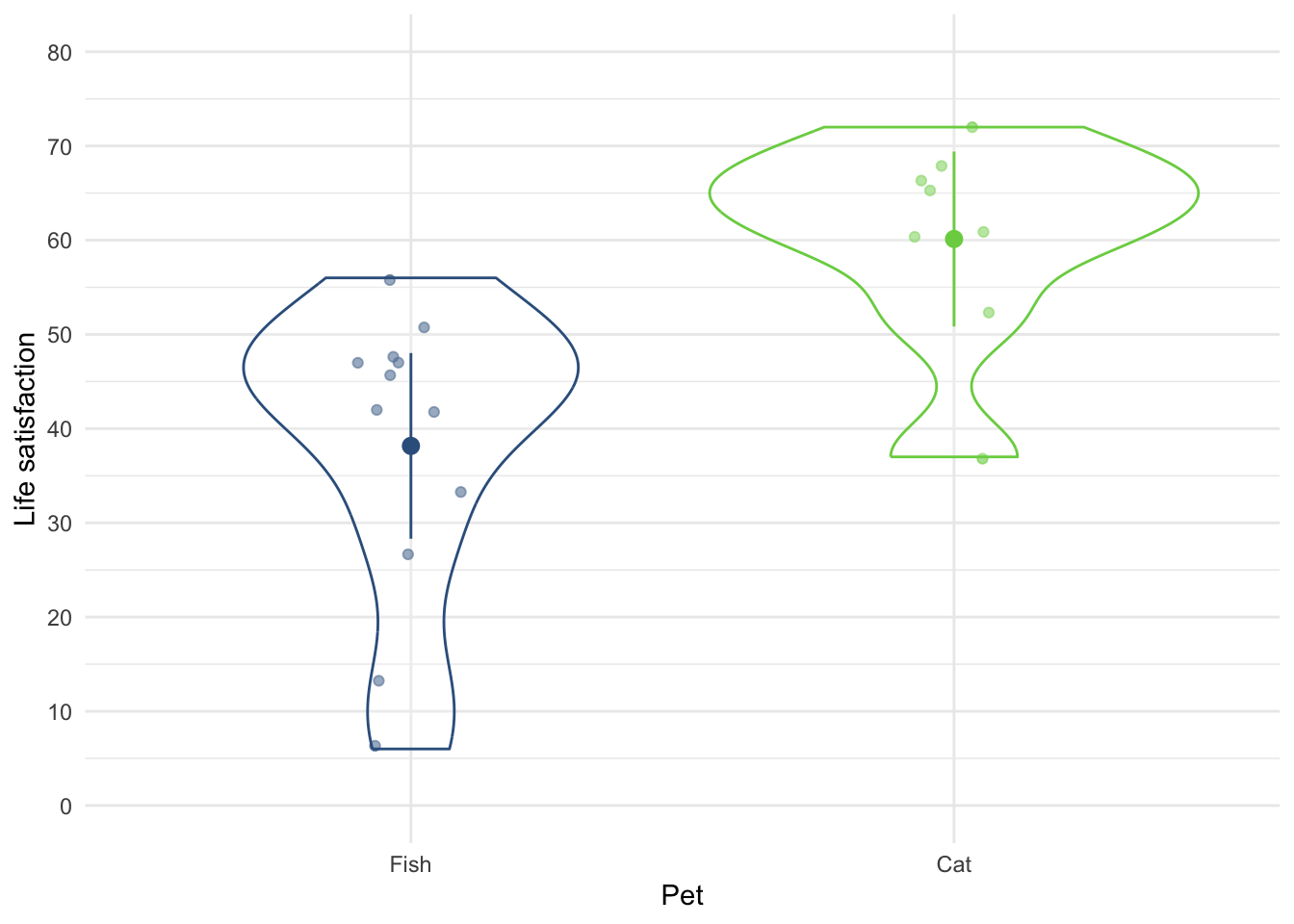

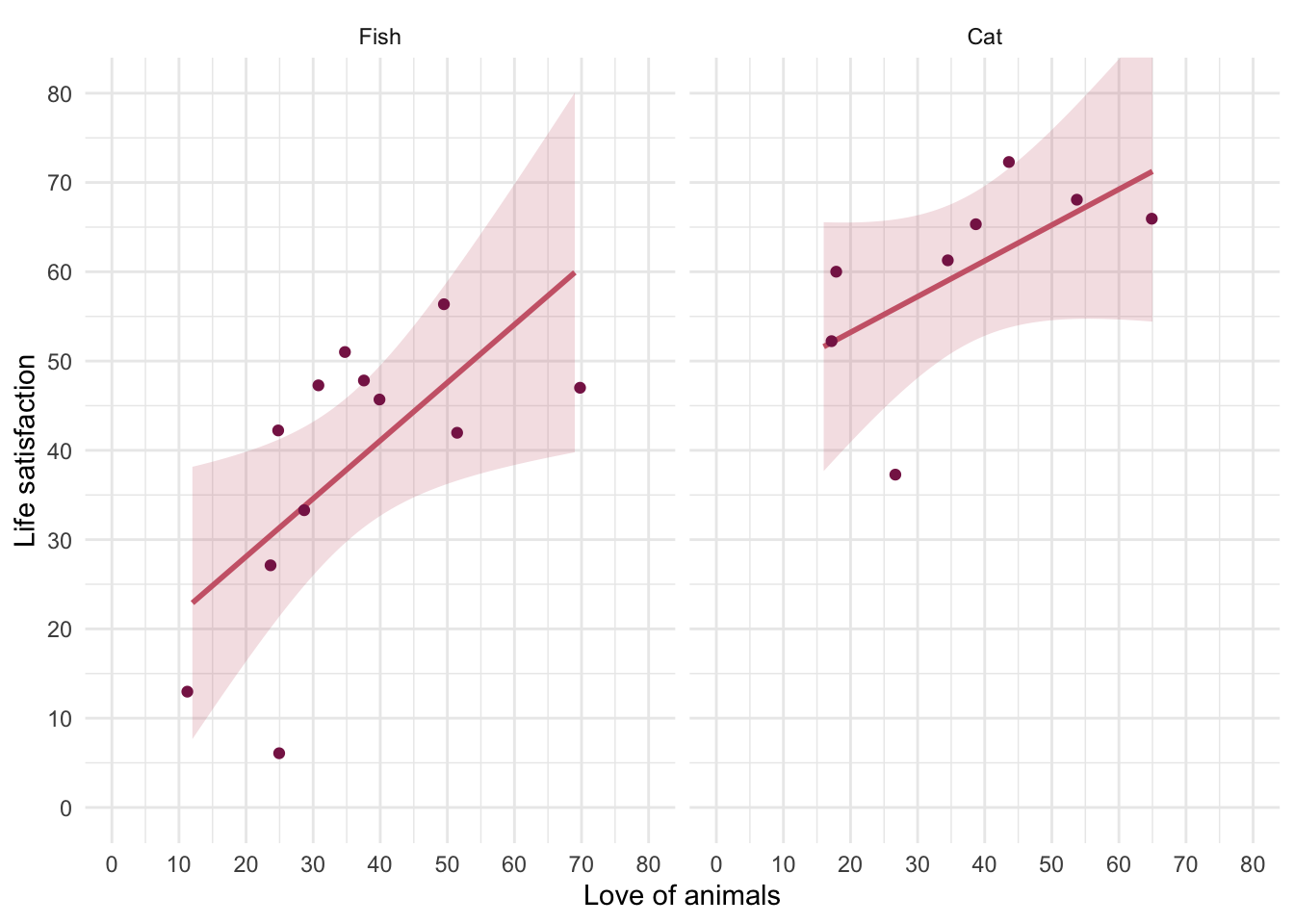

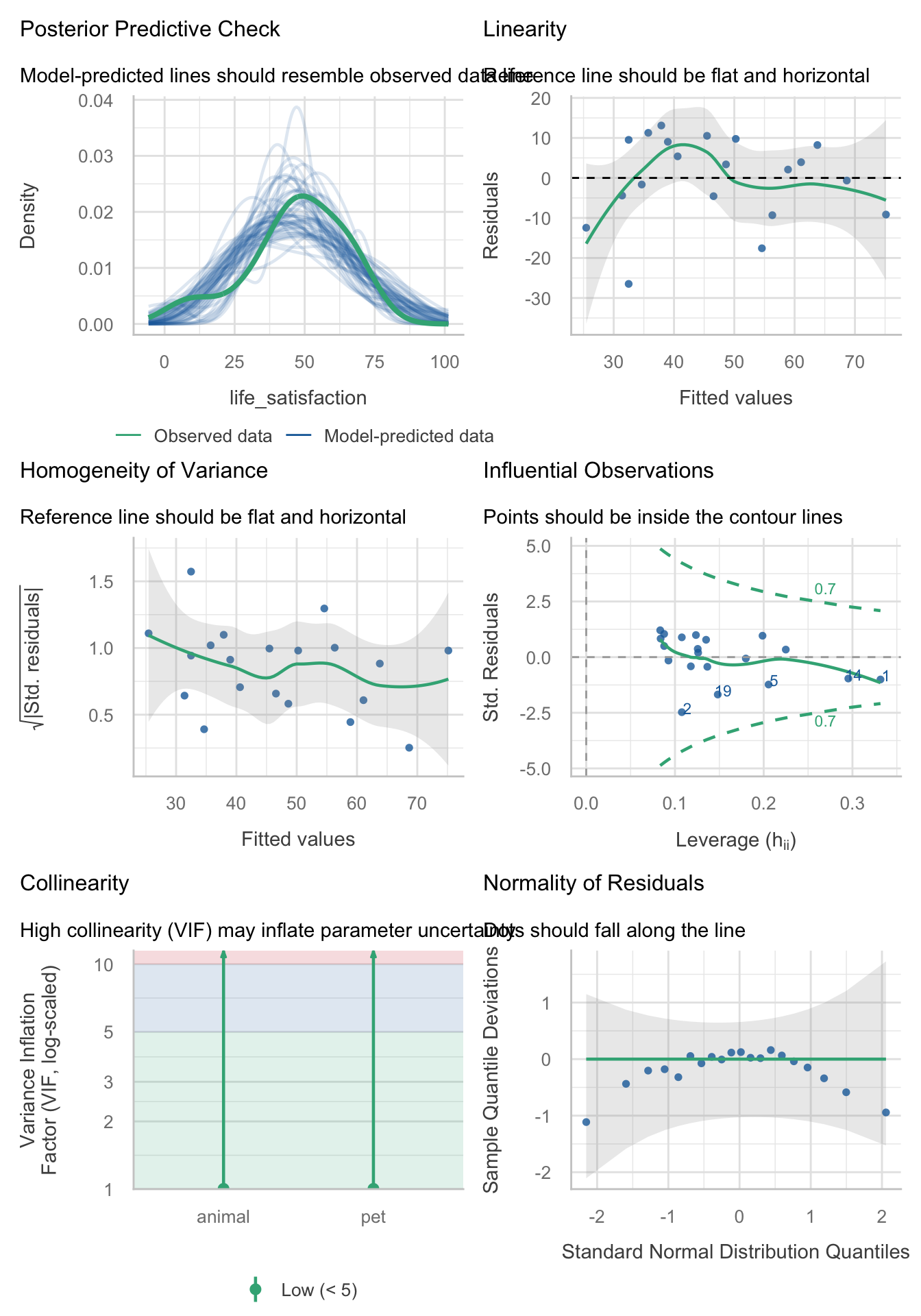

I wondered whether a fish or cat made a better pet. I found some people who had either fish or cats as pets and measured their life satisfaction and how much they like animals. Enter these data into a tibble.

We have three variables here: pet (did the person have a fish or a cat as a pet?), their love of animals (animal) and their life_satisfaction score. The tidy format is to arrange the data in three columns. There are several ways to do this, here’s one of them:

The full data can be found in discovr::pets.

Task 4.7

One of my favourite activities, especially when trying to do brain-melting things like writing statistics books, is drinking tea. I am English, after all. Fortunately, tea improves your cognitive function – well, it does in old Chinese people at any rate (Feng et al., 2010). I may not be Chinese and I’m not that old, but I nevertheless enjoy the idea that tea might help me think. Here are some data based on Feng et al.’s study that measured the number of cups of tea drunk per day and cognitive functioning (out of 80) in 15 people. Enter these data into a tibble.

We have two variables here: the number of cups of tea a person drinks per day (tea) and their cognitive functioning out of 80 (cog_fun). The tidy format is to arrange the data in two columns. There are several ways to do this, here’s one of them:

The data (with an additional variable containing the participant id) can be found in discovr::tea_15.

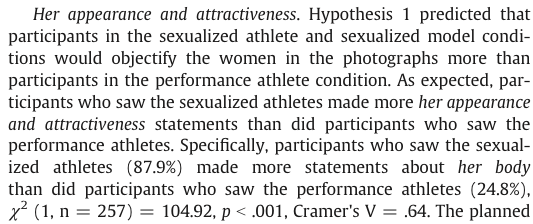

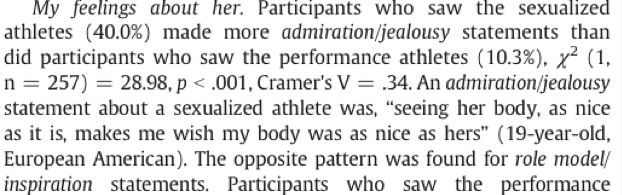

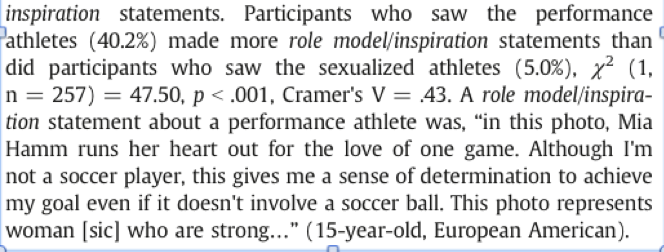

Task 4.8

Statistics and maths anxiety are common and affect people’s performance on maths and stats assignments; women in particular can lack confidence in mathematics (Field, 2010). Zhang et al. (2013) did an intriguing study in which students completed a maths test in which some put their own name on the test booklet, whereas others were given a booklet that already had either a male or female name on. Participants in the latter two conditions were told that they would use this other person’s name for the purpose of the test. Women who completed the test using a different name performed significantly better than those who completed the test using their own name. (There were no such significant effects for men.) The data below are a random subsample of Zhang et al.’s data. Enter them into a tibble.

The design of this study is such that different people were put in one of three different conditions (female fake name, male fake name, own name), but the groups are not equal. In the female fake name group there were 10 females but only 7 males, in the fake male name there were 10 females and 9 males, and in the own name condition 7 females and 9 males. With a bit of adding we can see that there were 27 females in total, and 25 males. We’re going to have to use a lot of rep() statements and keep our wits about us!

We have three variables: whether the participant was male or female (sex), which booklet condition they were in (name_type) and their test score (accuracy). I’ve also included a participant id, just so that your data matches the file zhang_2013_subsample.csv that I provide for you. We can enter the data as follows (there are other ways too …):

zhang_tib <- tibble(.rows = 52) |>

mutate(

id = c(171, 35, 57, 36, 53, 176, 76, 184, 64, 166, 14, 100, 30, 49, 157, 14, 68, 71, 4, 40, 66, 27, 61, 27, 36, 33, 120, 113, 95, 99, 78, 32, 43, 183, 103, 31, 86, 54, 5, 20, 13, 59, 58, 188, 187, 15, 50, 9, 45, 60, 73, 189) |> as_factor(),

sex = c(rep("Female", 27), rep("Male", 25)) |> as_factor(),

name_type = c(rep("Female fake name", 10), rep("Male fake name", 10), rep("Own name", 7), rep("Female fake name", 7), rep("Male fake name", 9), rep("Own name", 9)) |> as_factor(),

accuracy = c(33, 22, 46, 53, 14, 27, 64, 62, 75, 50, 69, 60, 82, 78, 38, 63, 46, 27, 61, 29, 75, 33, 83, 42, 10, 44, 27, 53, 47, 87, 41, 62, 67, 57, 31, 63, 34, 40, 22, 17, 60, 47, 57, 70, 57, 33, 83, 86, 65, 64, 37, 80)

)

The data are in discovr::zhang_sample.

Task 4.9

What is a factor variable?

A variable in which numbers are used to represent group or category membership. An example would be a variable in which a score of 1 represents a person being female, and a 0 represents them being male.
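In R this idea can be sketched with factor(). The codes below are hypothetical (0 for male, 1 for female, matching the example above) and the object names are my own. Note that R stores its own integer codes starting at 1, so the numbers behind the labels need not be the ones you typed in.

```r
# Hypothetical codes: 0 = male, 1 = female
sex_codes <- c(1, 0, 0, 1, 1)

# Attach category labels to the numeric codes
sex <- factor(sex_codes, levels = c(0, 1), labels = c("Male", "Female"))

levels(sex)       # the category labels
as.integer(sex)   # the integer codes R stores internally
table(sex)        # number of cases in each category
```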

Task 4.10

What is the difference between messy and tidy format data?

Tidy format data are arranged such that scores on an outcome variable appear in a single column and rows represent a combination of the attributes of those scores (for example, the entity from which the scores came, when the score was recorded etc.). In tidy format data, scores from a single entity can appear over multiple rows where each row represents a combination of the attributes of the score (e.g., levels of an independent variable or time point at which the score was recorded etc.) In contrast, messy format data are arranged such that scores from a single entity appear in a single row and levels of independent or predictor variables are arranged over different columns. As such, in designs with multiple measurements of an outcome variable within a case the outcome variable scores will be contained in multiple columns each representing a level of an independent variable, or a timepoint at which the score was observed. Columns can also represent attributes of the score or entity that are fixed over the duration of data collection (e.g., participant sex, employment status etc.).
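To make the distinction concrete, here is a small sketch that converts messy data to tidy data using pivot_longer() from the tidyr package. The data are made up for illustration (three people measured at two time points), and the names are my own.

```r
library(tibble)
library(tidyr)

# Hypothetical messy data: one row per person, one column per time point
messy_tib <- tibble(
  id     = c(1, 2, 3),
  time_1 = c(10, 12, 9),
  time_2 = c(14, 15, 11)
)

# Tidy version: one row per person-by-time combination, scores in a single column
tidy_tib <- messy_tib |>
  pivot_longer(
    cols = c(time_1, time_2),
    names_to = "time",
    values_to = "score"
  )

tidy_tib
```

In the tidy version each person appears on two rows, and the time point has become an attribute of the score rather than a column heading.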

Chapter 5

Task 5.1

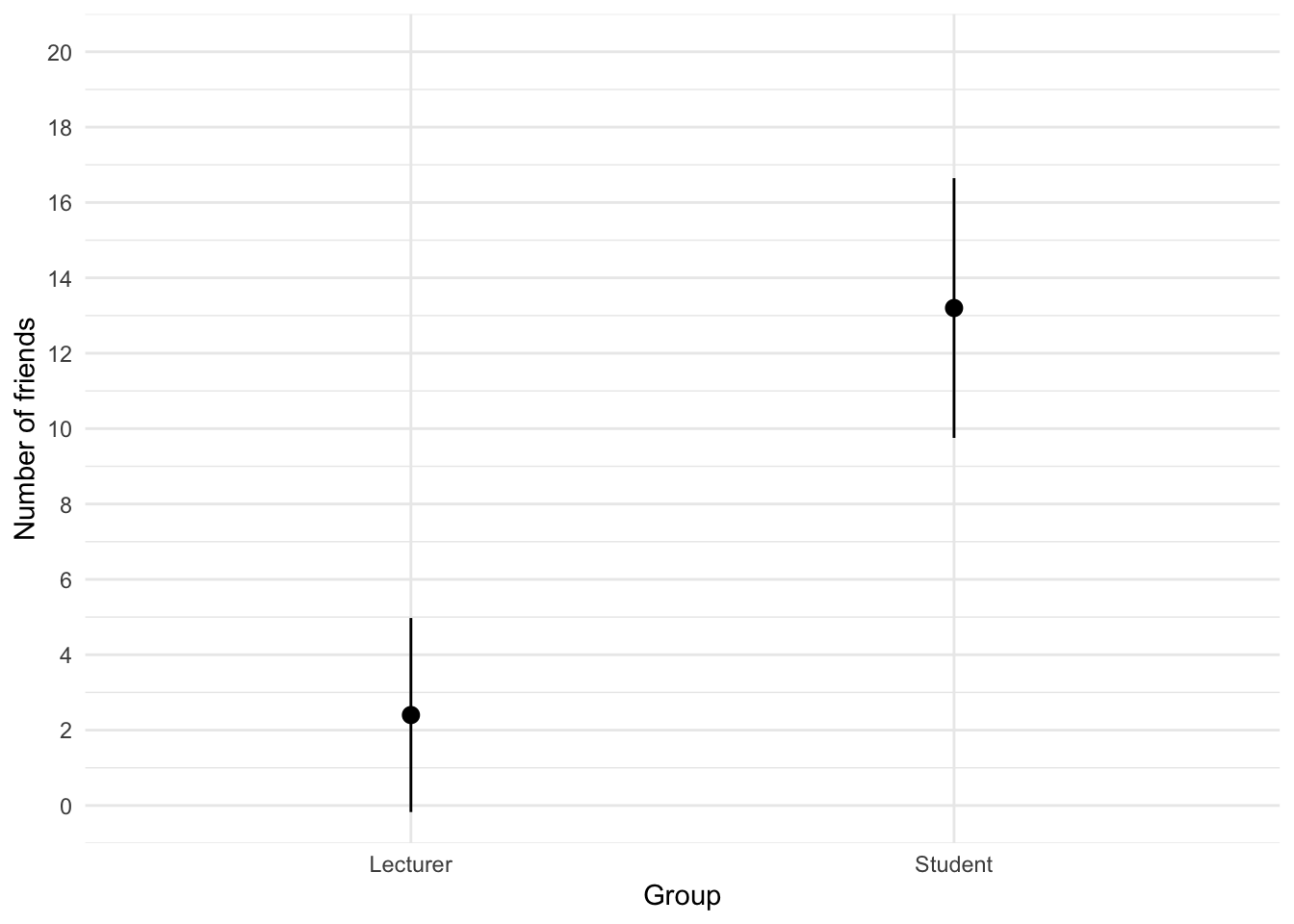

The file students.csv contains some fictional data about students and lecturers including their number of friends, income (per annum), alcohol consumption per week (in units) and neuroticism (neurotic). Plot and interpret an error bar chart showing the mean number of friends for students and lecturers.

First, load the data (see Tip 2) using

student_tib <- discovr::students

To find out more about the data execute ?students. The code for the plot (Figure 1) is:

ggplot(student_tib, aes(group, friends)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange") +

coord_cartesian(ylim = c(0, 20)) +

scale_y_continuous(breaks = seq(0, 20, 2)) +

labs(x = "Group", y = "Number of friends") +

theme_minimal()

We can conclude that, on average, students had more friends than lecturers.

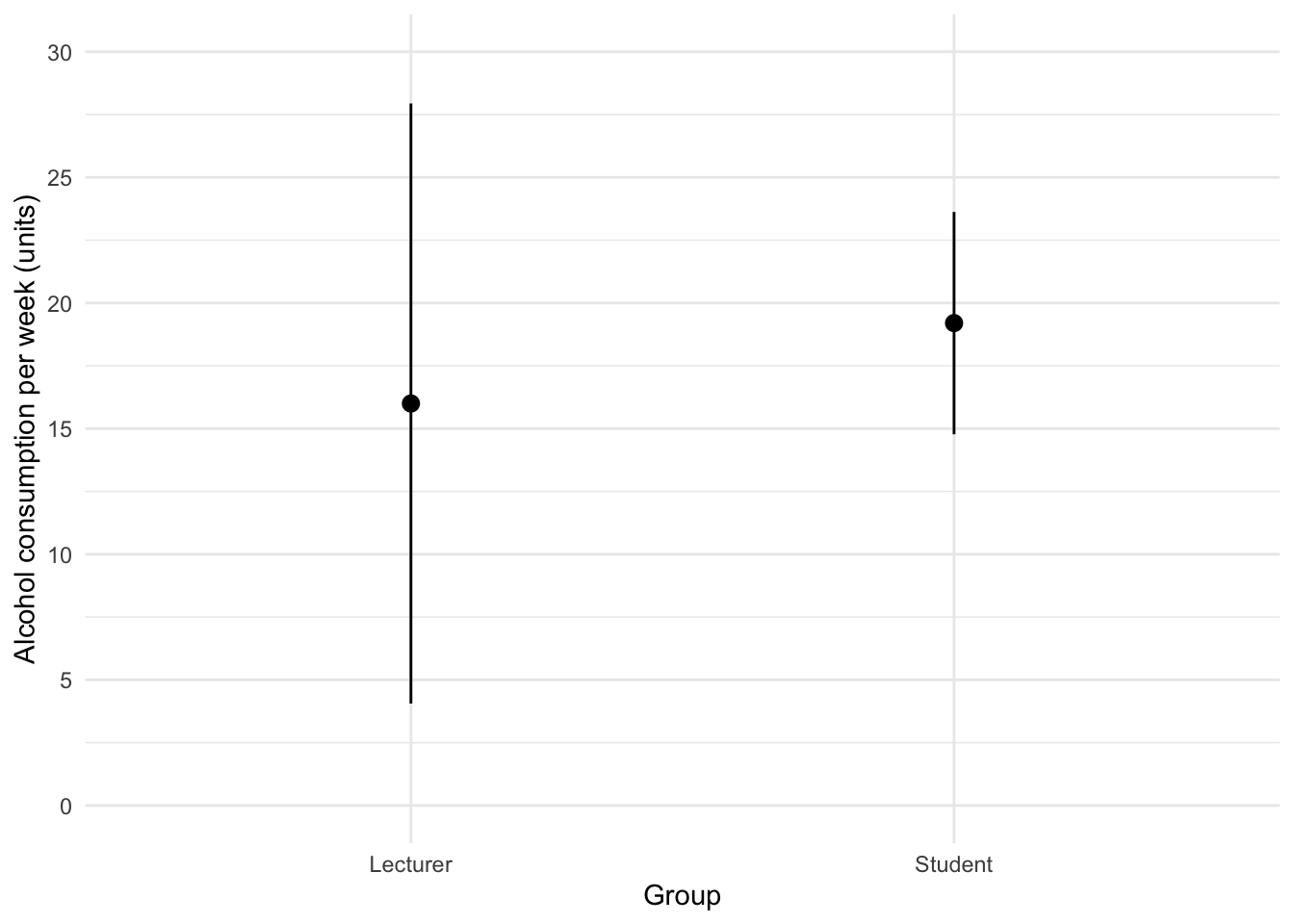

Task 5.2

Using the same data, plot and interpret an error bar chart showing the mean alcohol consumption for students and lecturers.

The code for the plot in Figure 2 is:

ggplot(student_tib, aes(group, alcohol)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange") +

coord_cartesian(ylim = c(0, 30)) +

scale_y_continuous(breaks = seq(0, 30, 5)) +

labs(x = "Group", y = "Alcohol consumption per week (units)") +

theme_minimal()

We can conclude that, on average, students and lecturers drank similar amounts, but the error bars tell us that the mean is a better representation of the population for students than for lecturers (there is more variability in lecturers’ drinking habits compared to students’).

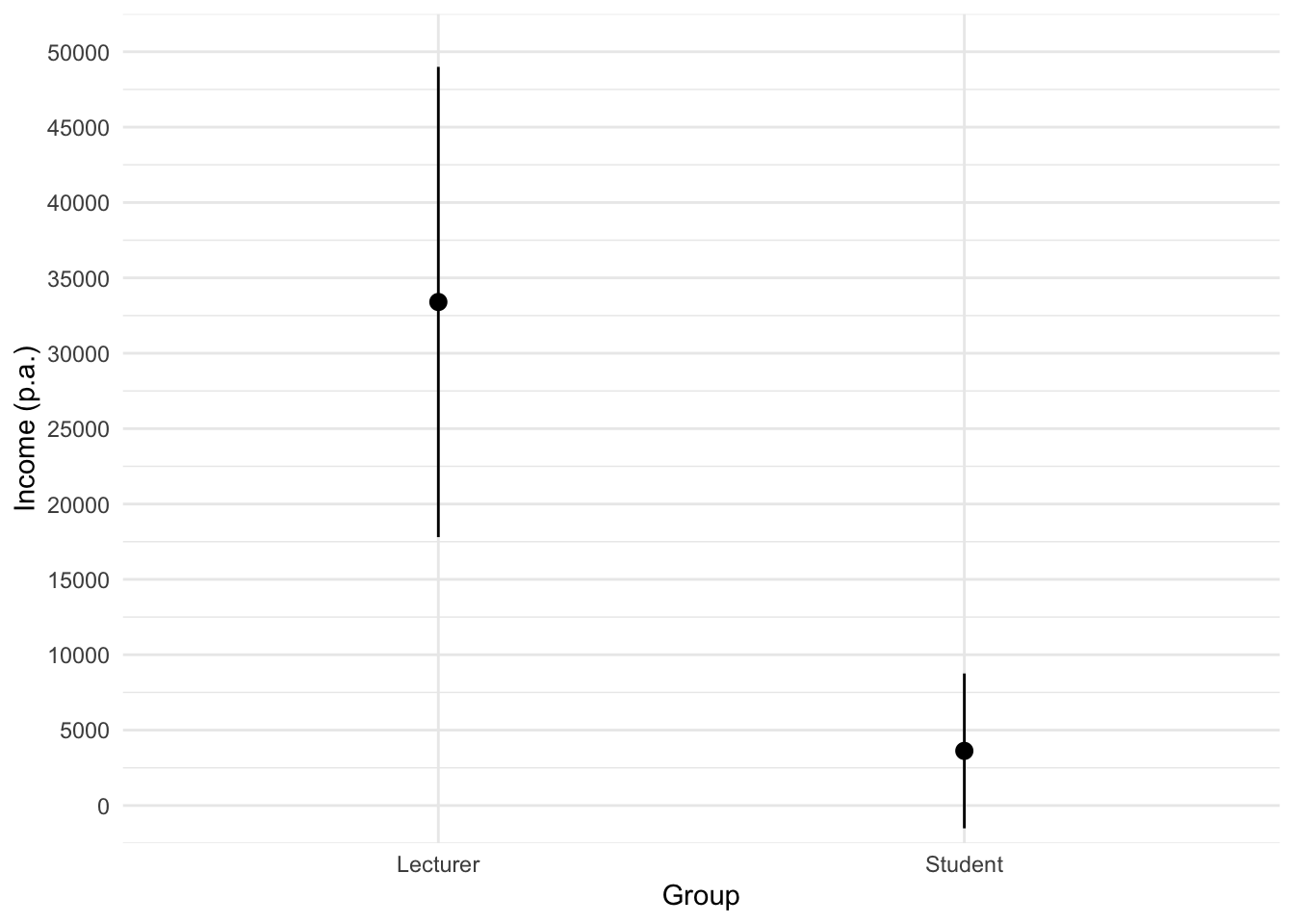

Task 5.3

Using the same data, plot and interpret an error line chart showing the mean income for students and lecturers.

The code for the plot in Figure 3 is:

ggplot(student_tib, aes(group, income)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange") +

coord_cartesian(ylim = c(0, 50000)) +

scale_y_continuous(breaks = seq(0, 50000, 5000)) +

labs(x = "Group", y = "Income (p.a.)") +

theme_minimal()

We can conclude that, on average, students earn less than lecturers, but the error bars tell us that the mean is a better representation of the population for students than for lecturers (there is more variability in lecturers’ income compared to students’).

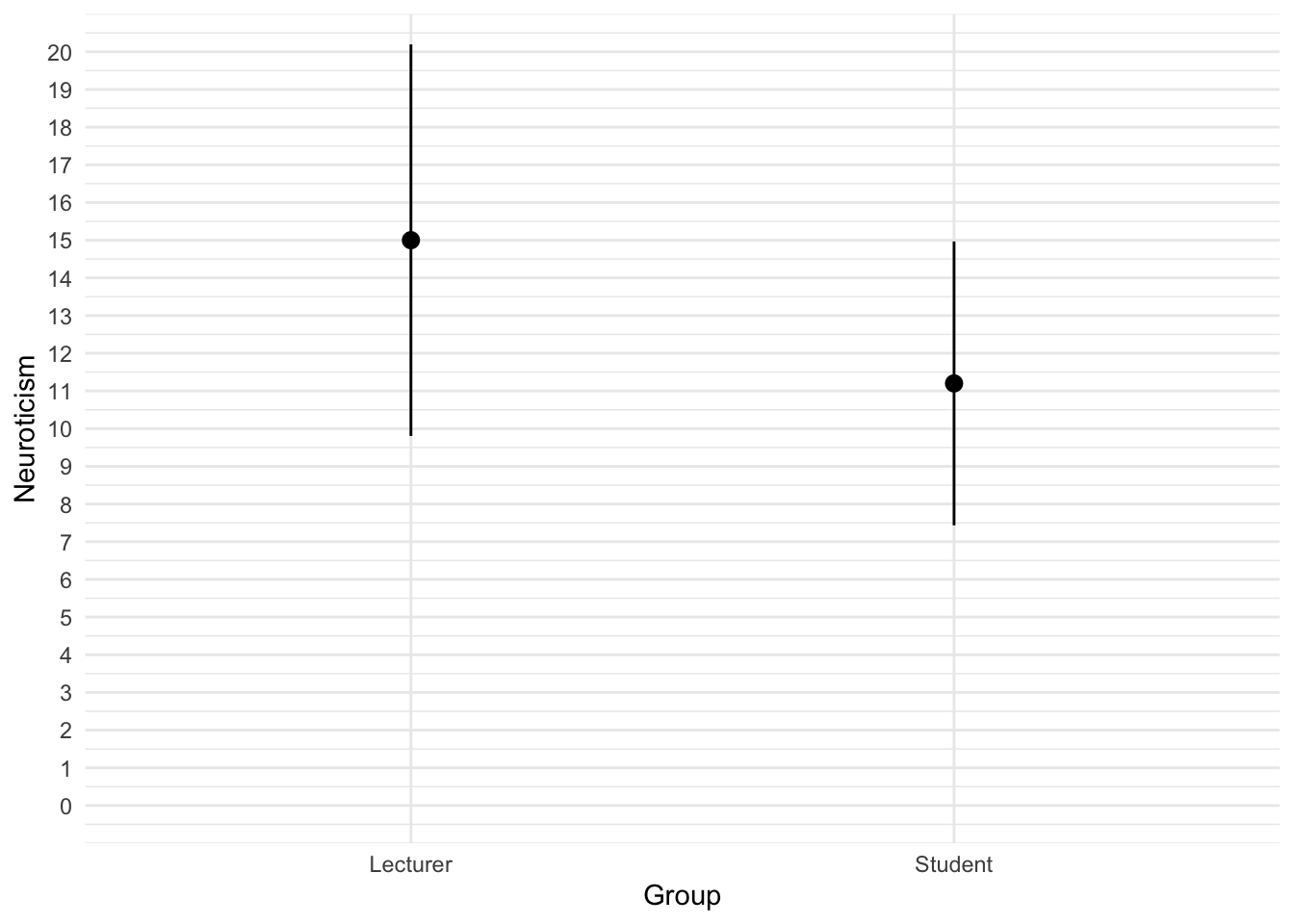

Task 5.4

Using the same data, plot and interpret an error line chart showing the mean neuroticism for students and lecturers.

The code for the plot in Figure 4 is:

ggplot(student_tib, aes(group, neurotic)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange") +

coord_cartesian(ylim = c(0, 20)) +

scale_y_continuous(breaks = seq(0, 20, 1)) +

labs(x = "Group", y = "Neuroticism") +

theme_minimal()

We can conclude that, on average, students are slightly less neurotic than lecturers.

Task 5.5

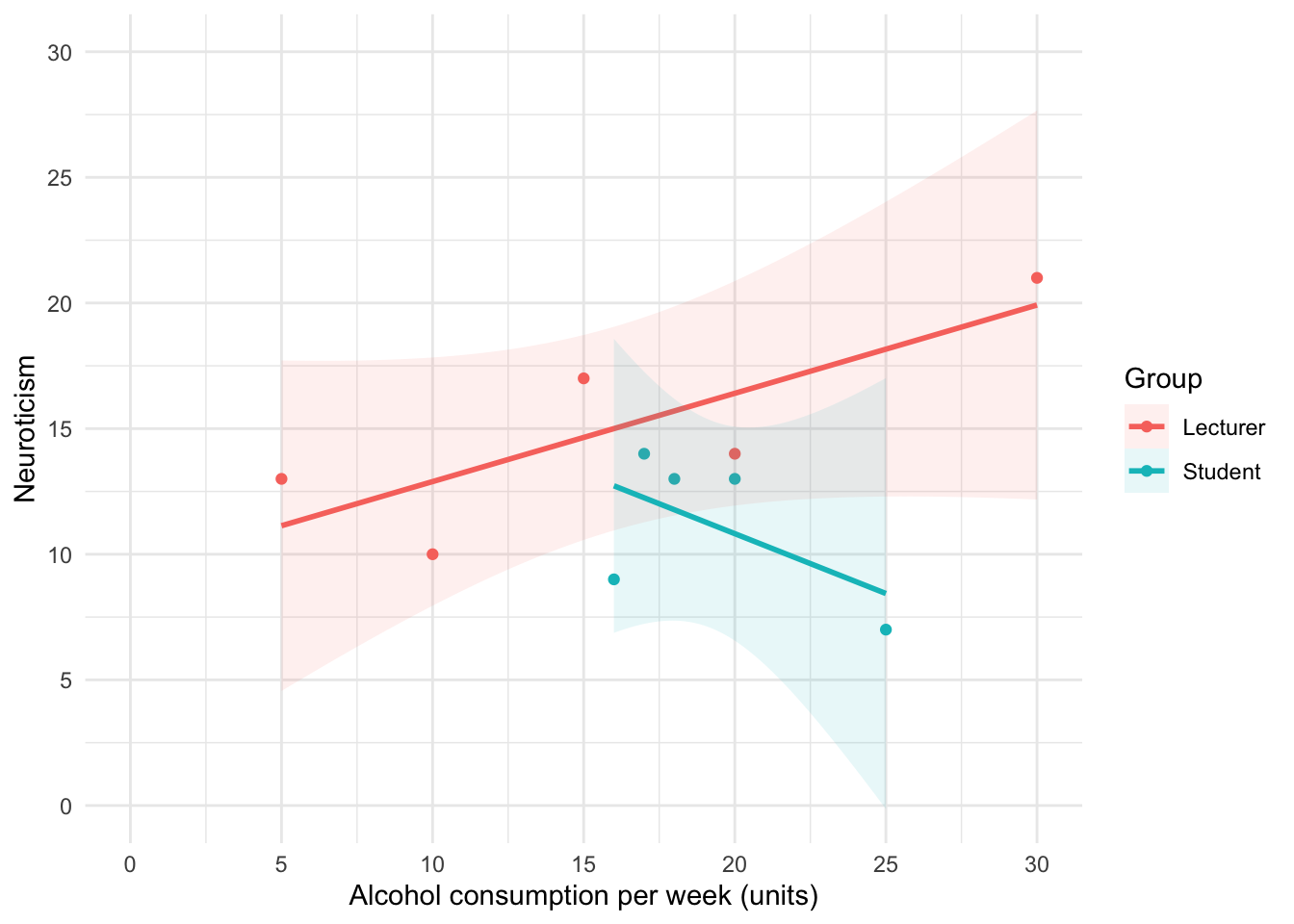

Using the same data, plot and interpret a scatterplot with regression lines of alcohol consumption and neuroticism grouped by lecturer/student.

The code for the plot in Figure 5 is:

ggplot(student_tib, aes(alcohol, neurotic, colour = group)) +

geom_point() +

geom_smooth(method = "lm", aes(fill = group), alpha = 0.1) +

coord_cartesian(xlim = c(0, 30), ylim = c(0, 30)) +

scale_x_continuous(breaks = seq(0, 30, 5)) +

scale_y_continuous(breaks = seq(0, 30, 5)) +

labs(x = "Alcohol consumption per week (units)", y = "Neuroticism", colour = "Group", fill = "Group") +

theme_minimal()

We can conclude that for lecturers, as neuroticism increases so does alcohol consumption (a positive relationship), but for students the opposite is true, as neuroticism increases alcohol consumption decreases.

Task 5.6

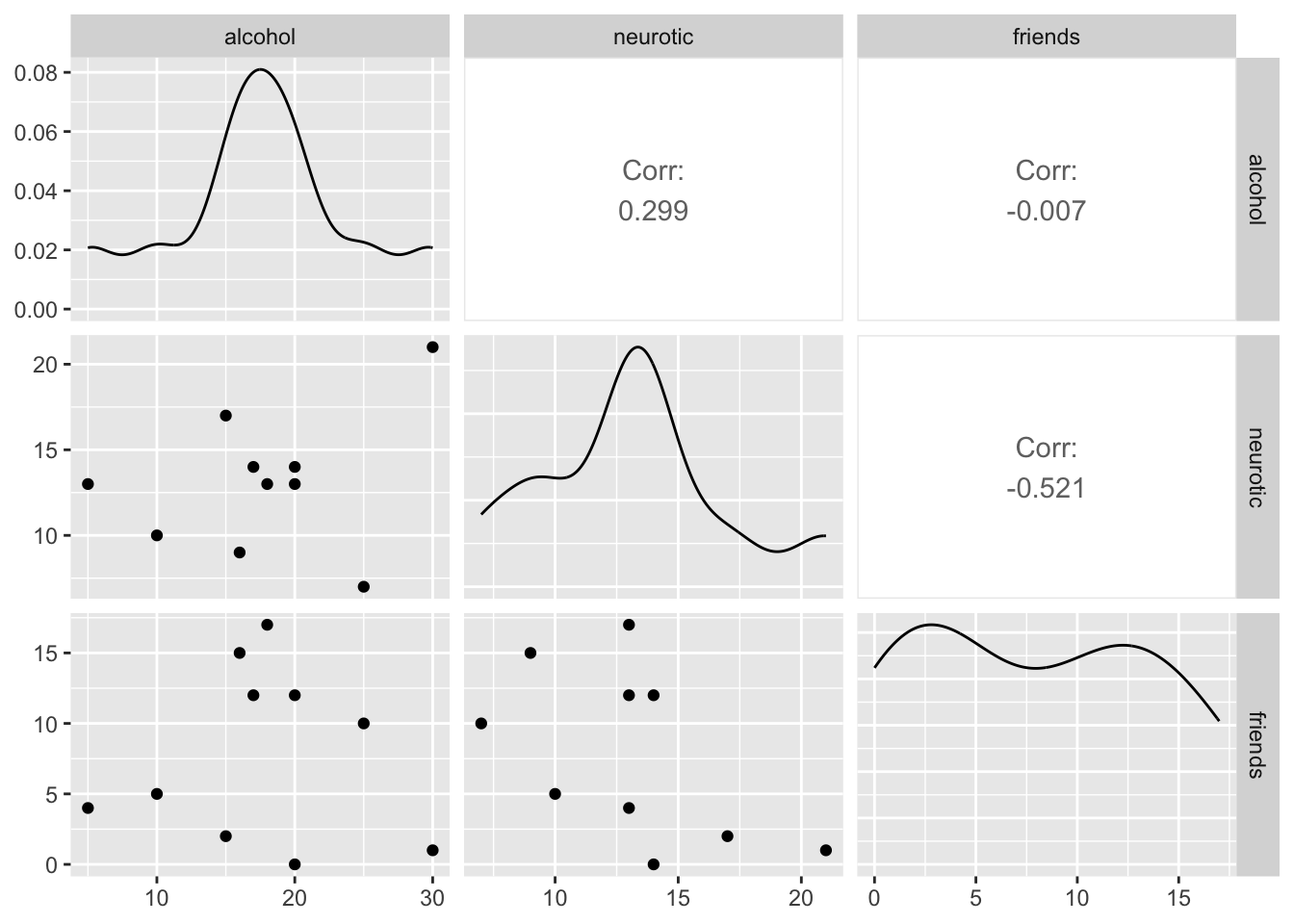

Using the same data, plot and interpret a scatterplot matrix with regression lines of alcohol consumption, neuroticism and number of friends.

The code for the plot in Figure 6 is:

We can conclude that there is no relationship (flat line) between the number of friends and alcohol consumption; there was a negative relationship between how neurotic a person was and their number of friends; and there was a slight positive relationship between how neurotic a person was and how much alcohol they drank.
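A scatterplot matrix like the one interpreted above could be drawn along the following lines. This is a hedged sketch, not necessarily the code used to produce the figure: it assumes the GGally package is installed and uses its ggpairs() function, and it assumes the "smooth" option fits a straight line in the lower panels (the default in the versions of GGally I know, but worth checking against yours).

```r
library(GGally)

student_tib <- discovr::students

# Scatterplot matrix of the three variables with a fitted line in each lower panel
scatter_matrix <- ggpairs(
  student_tib,
  columns = c("alcohol", "neurotic", "friends"),
  lower = list(continuous = "smooth")
)

scatter_matrix
```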

Task 5.7

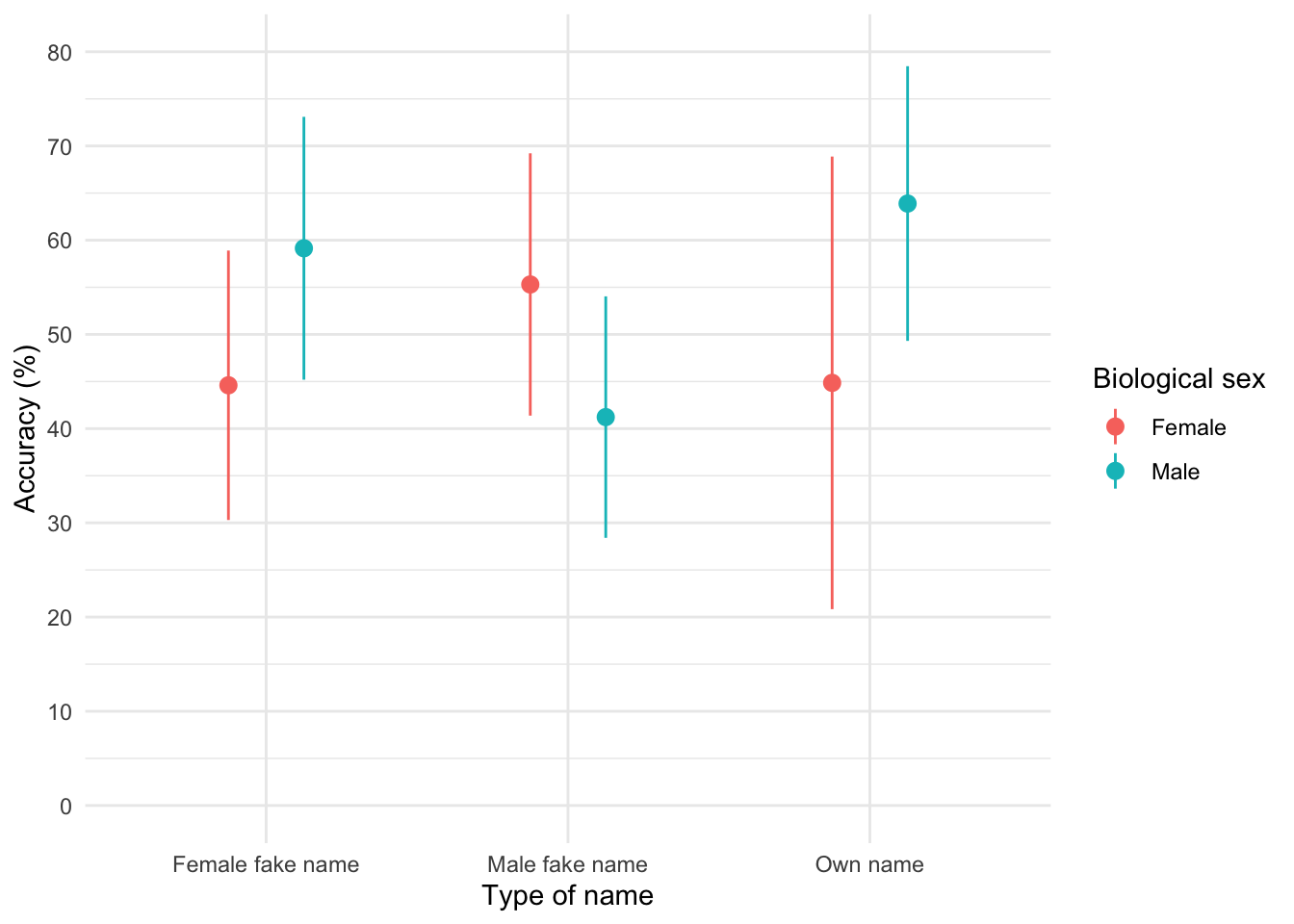

Using the zhang_2013_subsample.csv data from Chapter 1 (see Smart Alex’s task) plot a clustered error bar chart of the mean test accuracy as a function of the type of name participants completed the test under (x-axis) and whether they were male or female (different coloured bars).

First, let’s load the data (see Tip 2)

zhang_tib <- discovr::zhang_sample

To find out more about the data execute ?zhang_sample.

To get the plot in Figure 7 execute:

ggplot(zhang_tib, aes(name_type, accuracy, colour = sex)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.5)) +

coord_cartesian(ylim = c(0, 80)) +

scale_y_continuous(breaks = seq(0, 80, 10)) +

labs(x = "Type of name", y = "Accuracy (%)", colour = "Biological sex") +

theme_minimal()

The plot shows that, on average, males did better on the test than females when using their own name (the control) but also when using a fake female name. However, for participants who did the test under a fake male name, females did better than males.

Task 5.8

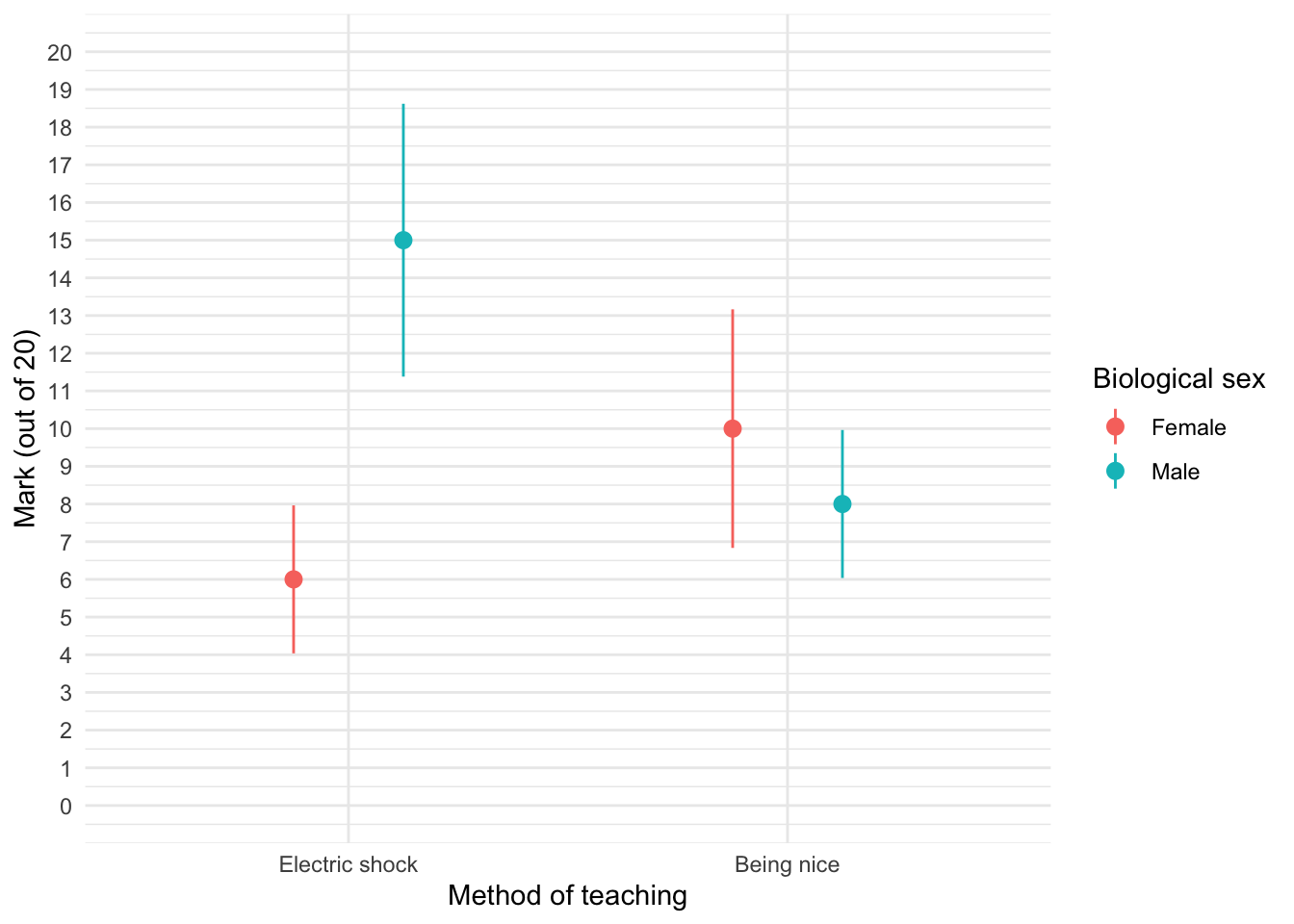

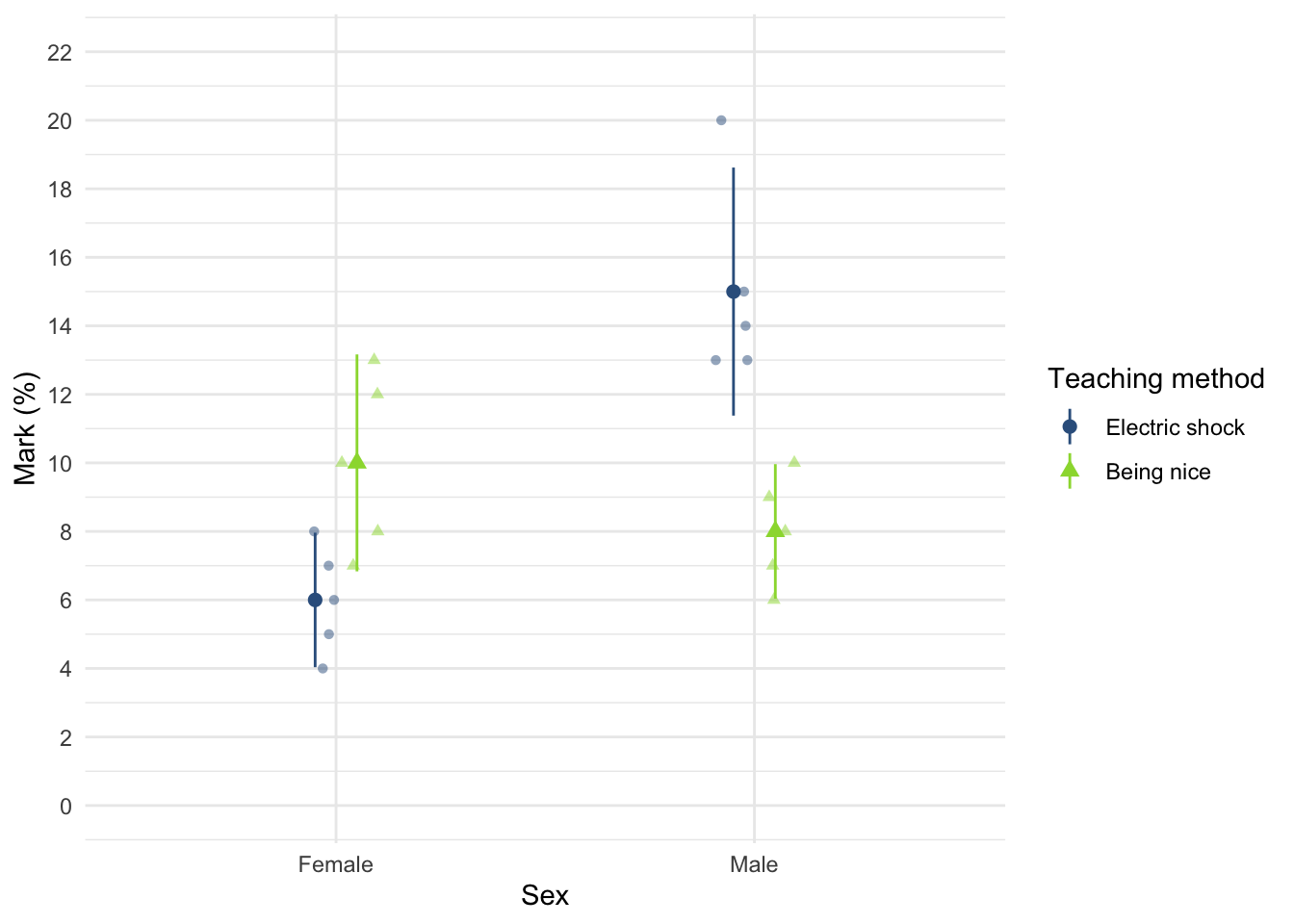

Using the teaching.csv data from Chapter 4 (Task 3), plot a clustered error line chart of the mean score when electric shocks were used compared to being nice, and plot males and females as different coloured lines.

First, let’s load the data (see Tip 2):

teaching_tib <- discovr::teaching

To find out more about the data execute ?teaching. To get the plot in Figure 8 execute:

ggplot(teaching_tib, aes(method, mark, colour = sex)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.5)) +

coord_cartesian(ylim = c(0, 20)) +

scale_y_continuous(breaks = seq(0, 20, 1)) +

labs(x = "Method of teaching", y = "Mark (out of 20)", colour = "Biological sex") +

theme_minimal()

We can see that when the being nice method of teaching is used, males and females have comparable scores on their coursework, with females scoring slightly higher than males on average (although their scores are also more variable than the males’ scores, as indicated by the longer error bar). However, when an electric shock is used, males score higher than females but there is more variability in the males’ scores than the females’ for this method (as seen by the longer error bar for males than for females). Additionally, the graph shows that females score higher when the being nice method is used compared to when an electric shock is used, but the opposite is true for males. This suggests that there may be an interaction between sex and the method of teaching.

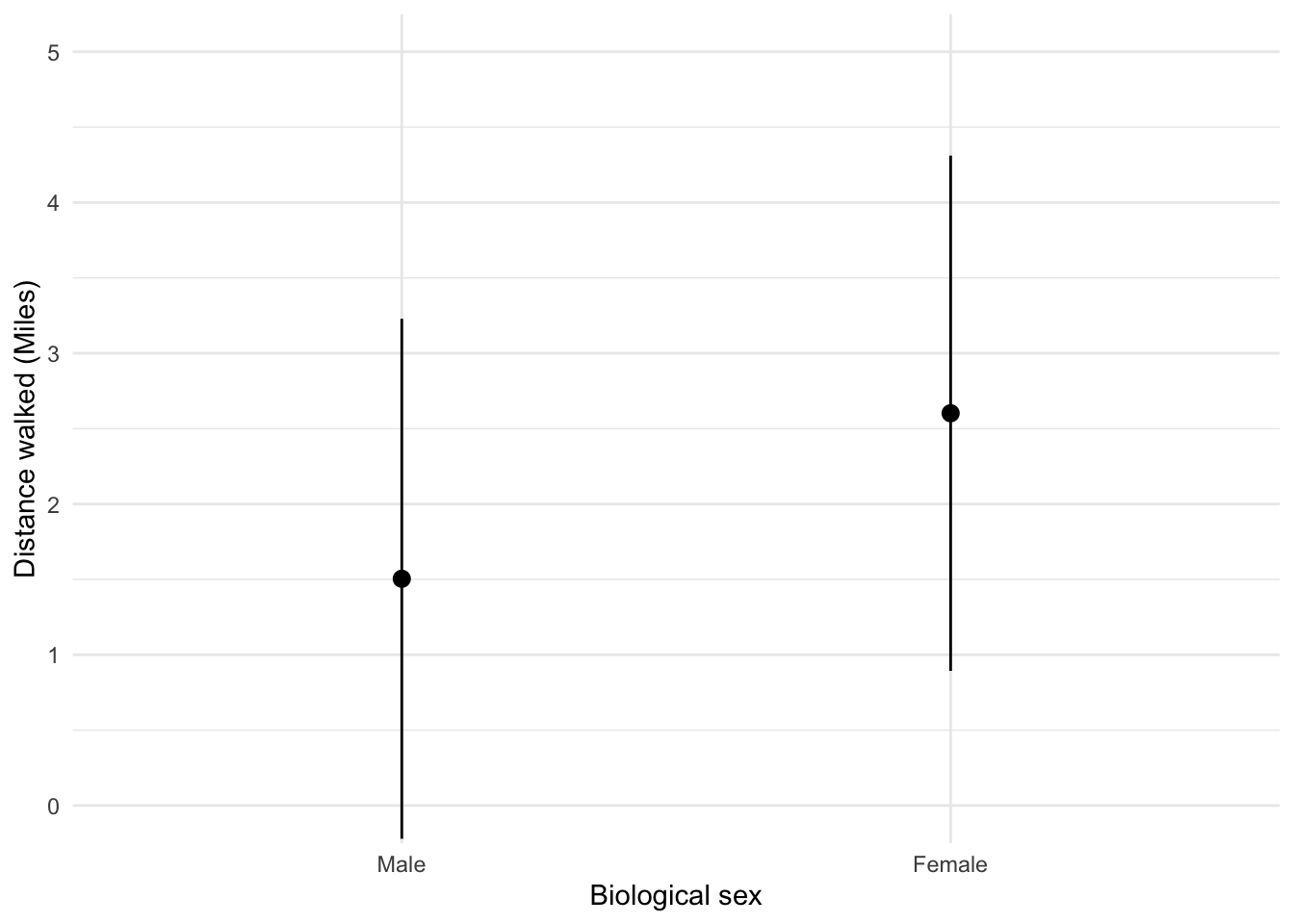

Task 5.9

Using the shopping_exercise.csv data from Chapter 3, plot two error bar graphs comparing men and women (x-axis): one for the distance walked, and the other of the time spent shopping.

First, let’s load the data (see Tip 2):

shopping_tib <- discovr::shopping

To find out more about the data execute ?shopping. To get the plot in Figure 9 execute:

ggplot(shopping_tib, aes(sex, distance)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.5)) +

coord_cartesian(ylim = c(0, 5)) +

scale_y_continuous(breaks = seq(0, 5, 1)) +

labs(x = "Biological sex", y = "Distance walked (Miles)") +

theme_minimal()

Looking at the plot in Figure 9, we can see that, on average, females walk longer distances (probably not significantly so) while shopping than males.

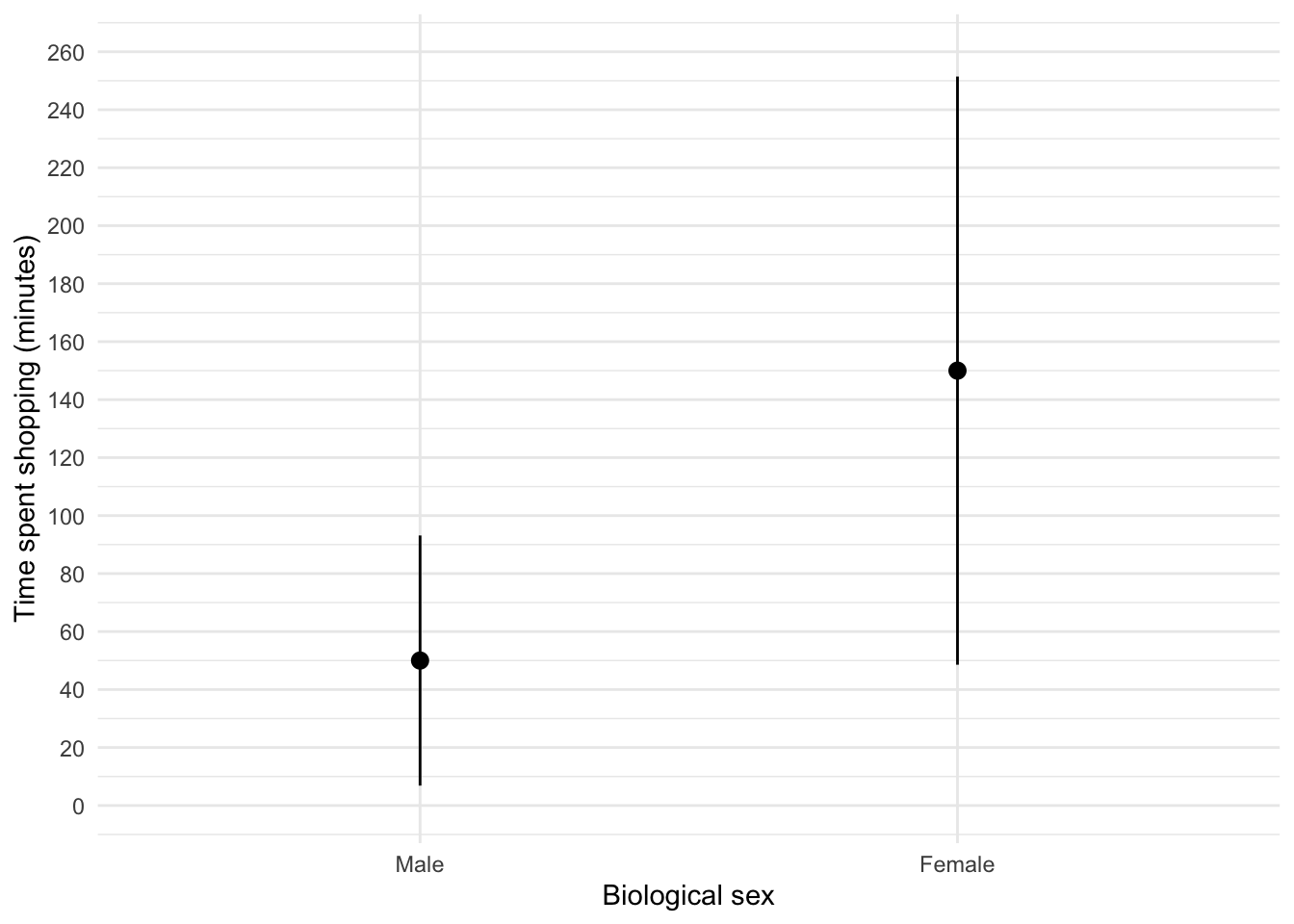

To get the plot in Figure 10 execute:

ggplot(shopping_tib, aes(sex, time)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.5)) +

coord_cartesian(ylim = c(0, 260)) +

scale_y_continuous(breaks = seq(0, 260, 20)) +

labs(x = "Biological sex", y = "Time spent shopping (minutes)") +

theme_minimal()

Figure 10 shows that, on average, females spend more time shopping than males (but again, probably not significantly so). The females’ scores are more variable than the males’ scores (longer error bar).

Task 5.10

Using the pets.csv data from Chapter 4 (Task 6), plot two error bar charts comparing scores when having a fish or cat as a pet (x-axis): one for the animal liking variable, and the other for the life satisfaction.

First, let’s load the data (see Tip 2):

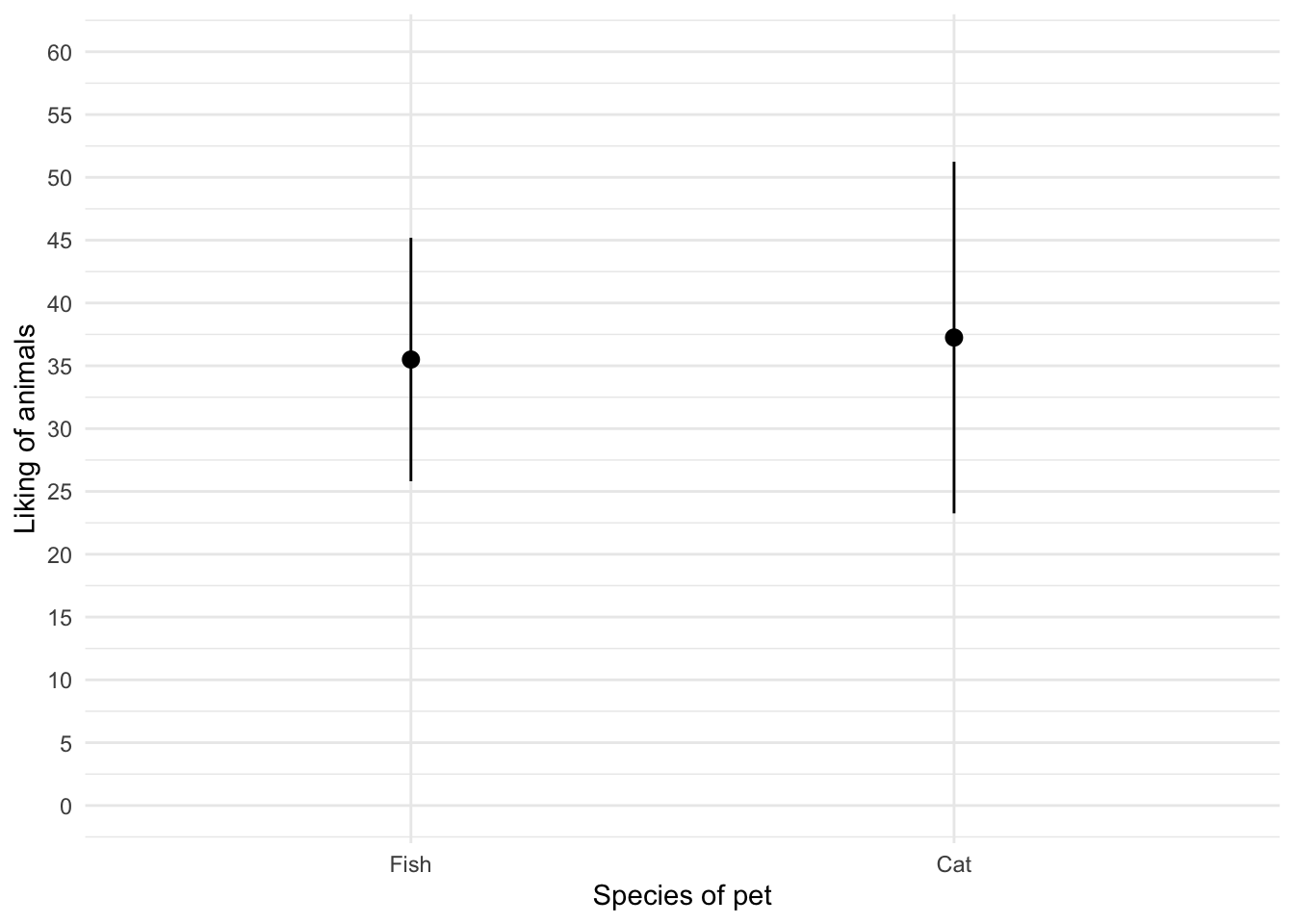

pet_tib <- discovr::pets

To find out more about the data execute ?pets. To get the first plot execute:

ggplot(pet_tib, aes(pet, animal)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.5)) +

coord_cartesian(ylim = c(0, 60)) +

scale_y_continuous(breaks = seq(0, 60, 5)) +

labs(x = "Species of pet", y = "Liking of animals") +

theme_minimal()

Figure 11 shows that the mean love of animals was the same for people with cats and fish as pets. To get the second plot execute:

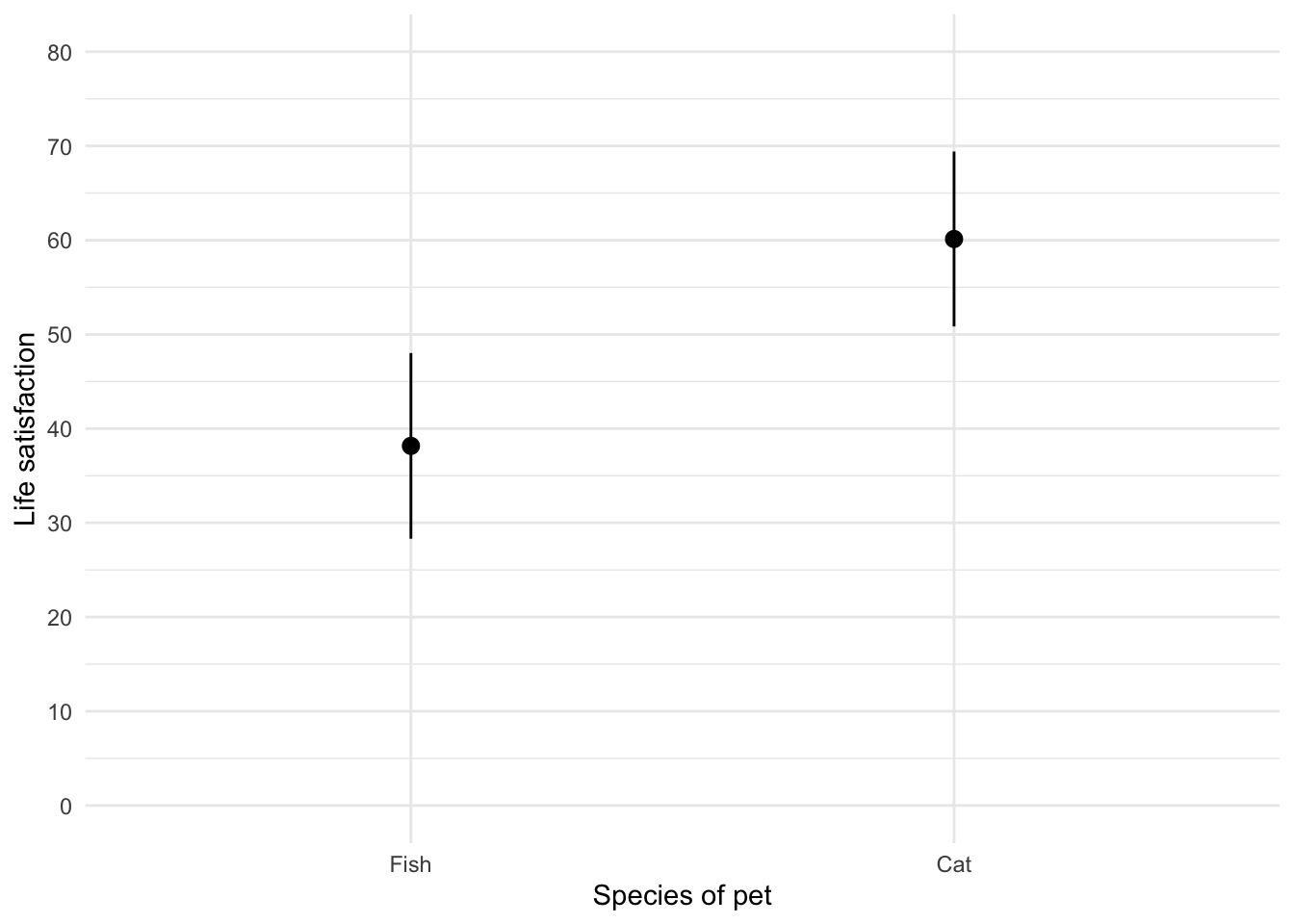

ggplot(pet_tib, aes(pet, life_satisfaction)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.5)) +

coord_cartesian(ylim = c(0, 80)) +

scale_y_continuous(breaks = seq(0, 80, 10)) +

labs(x = "Species of pet", y = "Life satisfaction") +

theme_minimal()

Figure 12 shows that, on average, life satisfaction was higher for people with a cat as a pet than those with a fish.

Task 5.11

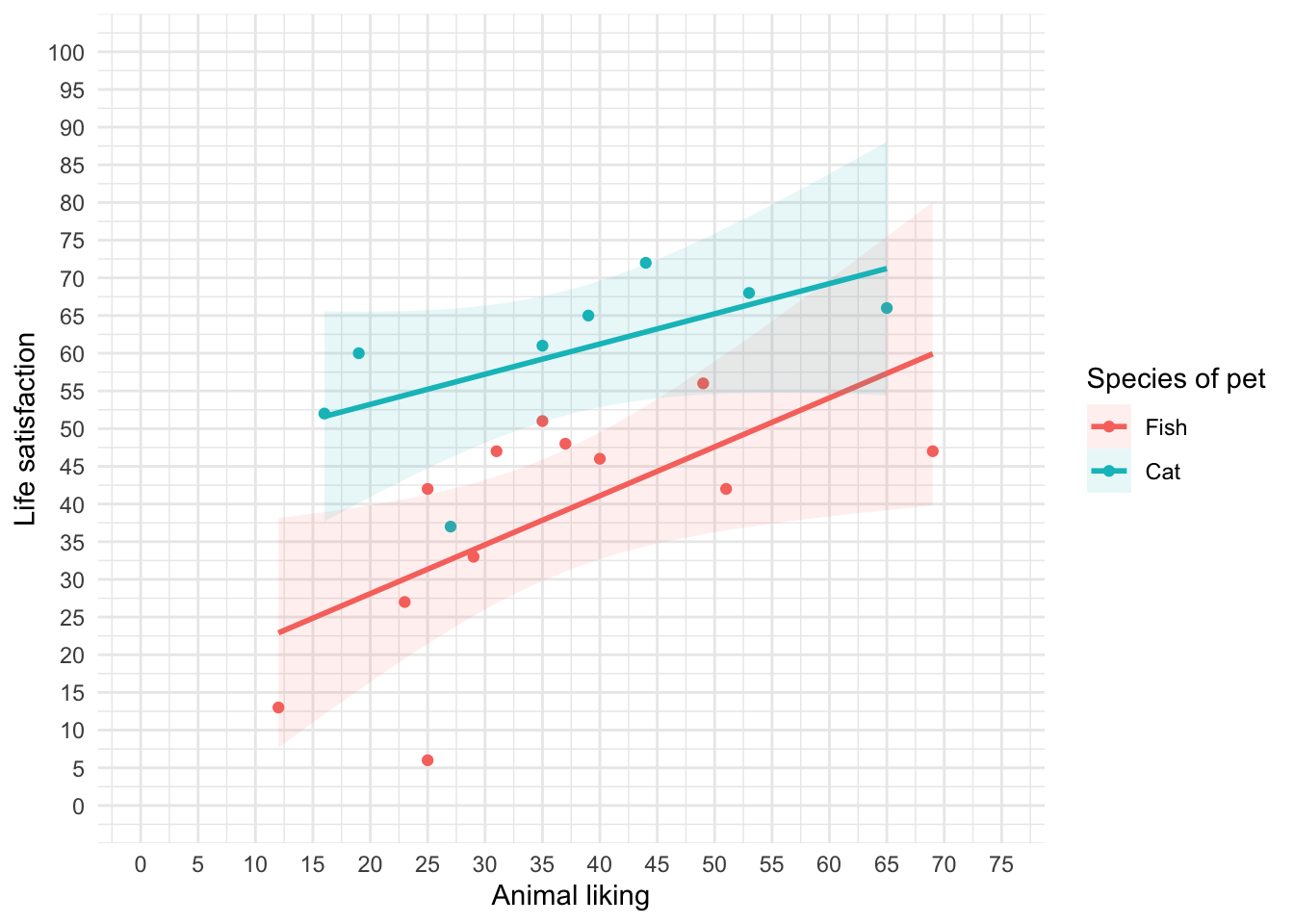

Using the same data as above, plot a scatterplot of animal liking scores against life satisfaction (plot scores for those with fish and cats in different colours).

The code will be something like:

ggplot(pet_tib, aes(animal, life_satisfaction, colour = pet)) +

geom_point() +

geom_smooth(method = "lm", aes(fill = pet), alpha = 0.1) +

coord_cartesian(xlim = c(0, 75), ylim = c(0, 100)) +

scale_x_continuous(breaks = seq(0, 75, 5)) +

scale_y_continuous(breaks = seq(0, 100, 5)) +

labs(x = "Animal liking", y = "Life satisfaction", colour = "Species of pet", fill = "Species of pet") +

theme_minimal()

We can conclude from Figure 13 that, regardless of whether someone had a cat or fish as a pet, as love of animals increases so does life satisfaction (a positive relationship).

Task 5.12

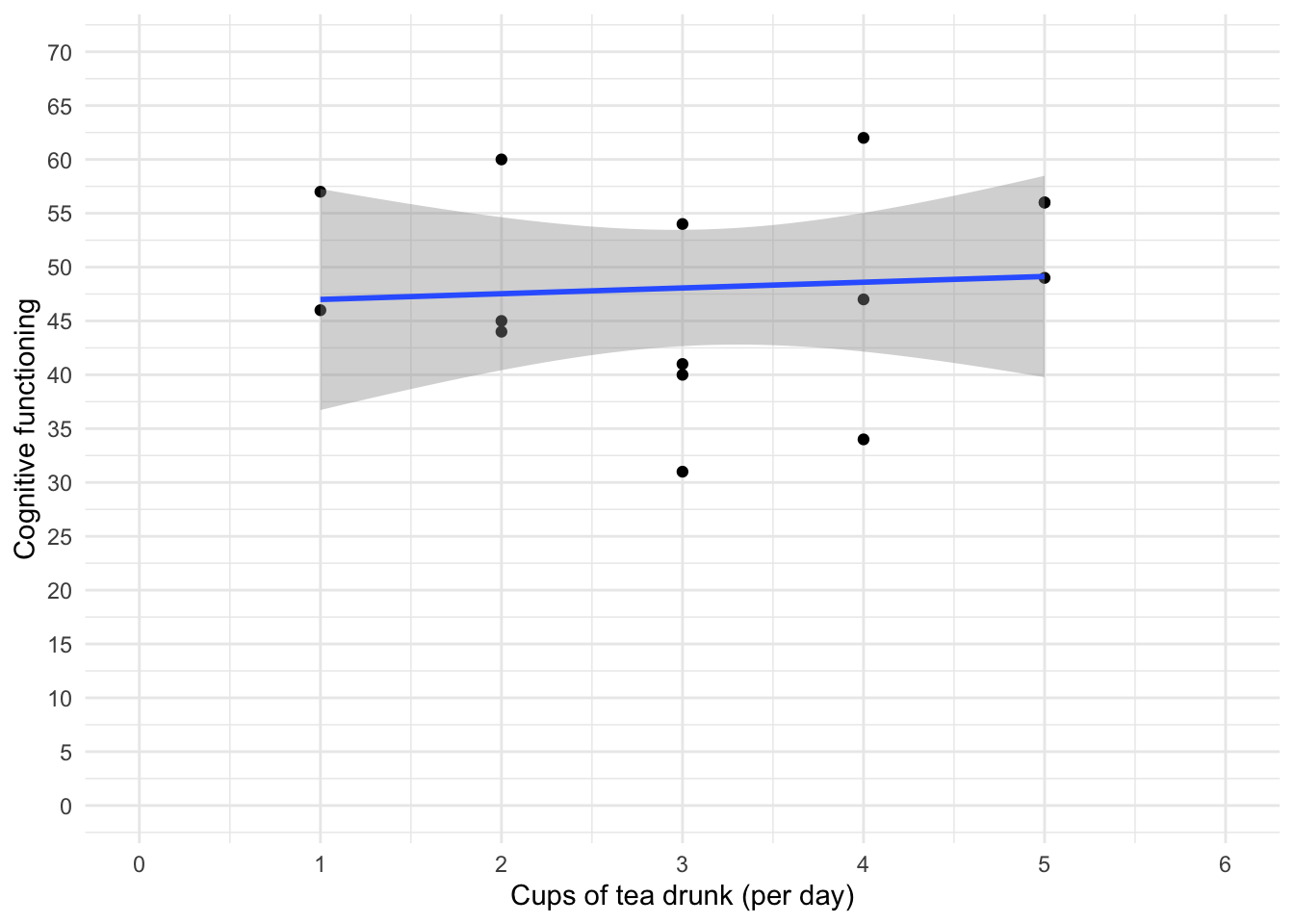

Using the tea_15.csv data from Chapter 4, plot a scatterplot showing the number of cups of tea drunk (tea) on the x-axis against cognitive functioning (cog_fun) on the y-axis.

First, let’s load the data (see Tip 2):

tea15_tib <- discovr::tea_15

To find out more about the data execute ?tea_15. The code will be something like:

ggplot(tea15_tib, aes(tea, cog_fun)) +

geom_point() +

geom_smooth(method = "lm") +

coord_cartesian(xlim = c(0, 6), ylim = c(0, 70)) +

scale_x_continuous(breaks = seq(0, 6, 1)) +

scale_y_continuous(breaks = seq(0, 70, 5)) +

labs(x = "Cups of tea drunk (per day)", y = "Cognitive functioning") +

theme_minimal()

The scatterplot (and near-flat line especially) in Figure 14 tells us that there is a tiny relationship (practically zero) between the number of cups of tea drunk per day and cognitive function.
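The line drawn by geom_smooth(method = "lm") is an ordinary least-squares fit, so the "near-flat" description can be put into numbers by looking at the slope and the correlation. A minimal base R sketch follows; the toy data frame is invented for illustration and does not contain the tea_15 values.

```r
# Hypothetical data (not the tea_15 values): six people, near-flat trend
toy <- data.frame(
  tea     = 1:6,
  cog_fun = c(50, 52, 49, 51, 50, 51)
)

fit   <- lm(cog_fun ~ tea, data = toy)   # straight-line (least-squares) fit
slope <- coef(fit)[["tea"]]              # change in cog_fun per extra cup
r     <- cor(toy$tea, toy$cog_fun)       # Pearson correlation

c(slope = slope, r = r)
```

A slope and correlation this close to zero are what a near-horizontal line on the scatterplot looks like numerically.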

Chapter 6

Task 6.1

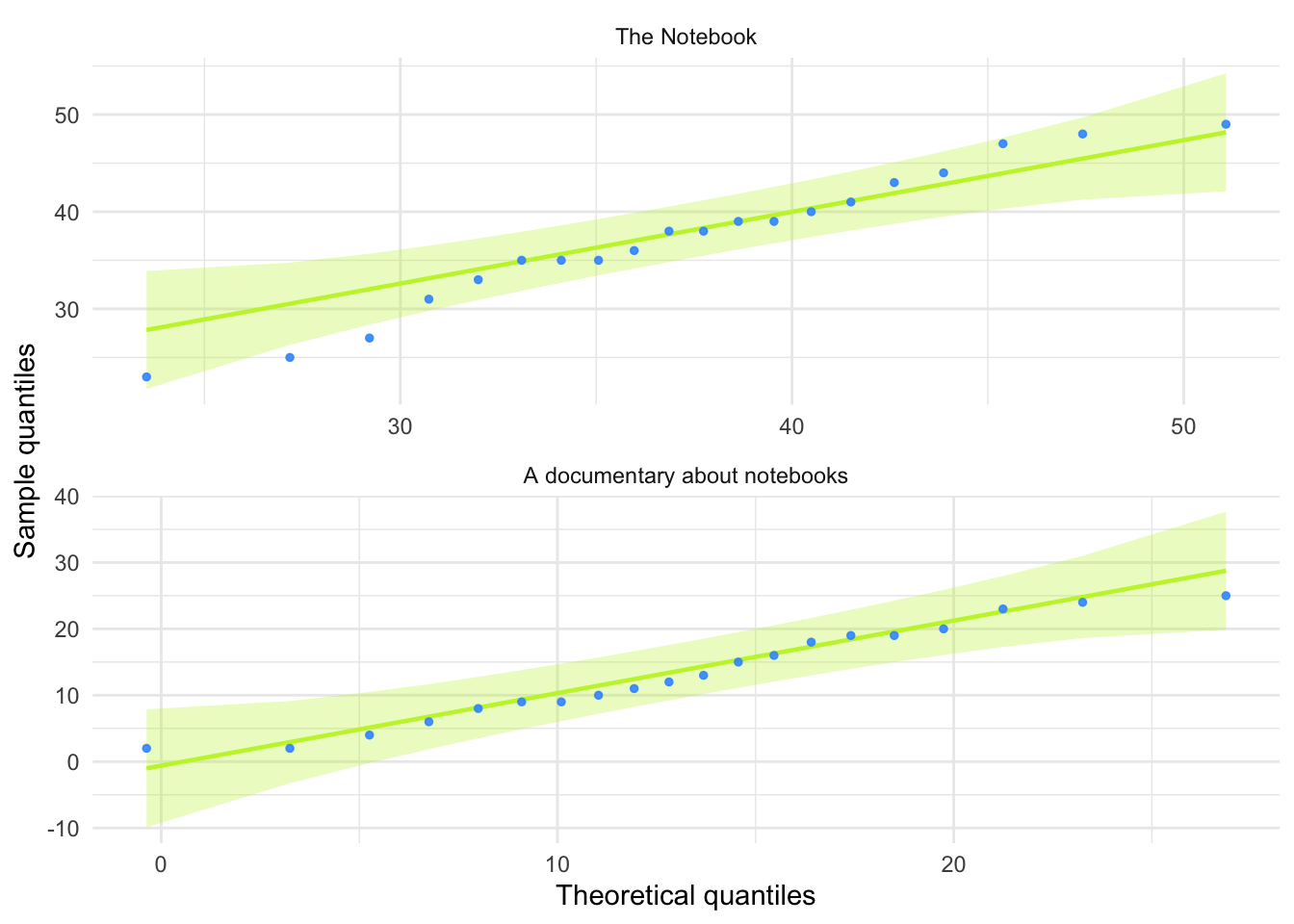

Using the notebook.csv data from Chapter 5, create and interpret a Q-Q plot for the two films (ignore sex).

Load the data directly from the discovr package (see Tip 2).

notebook_tib <- discovr::notebook

Figure 15 shows the plot.

ggplot(data = notebook_tib, aes(sample = arousal)) +

qqplotr::stat_qq_band(fill = "#C4F134FF", alpha = 0.3) +

qqplotr::stat_qq_line(colour = "#C4F134FF") +

qqplotr::stat_qq_point(colour = "#3E9BFEFF", alpha = 0.9, size = 1) +

labs(x = "Theoretical quantiles", y = "Sample quantiles") +

facet_wrap(~film, ncol = 1, scales = "free") +

theme_minimal()

For both films the observed quantiles are close, on the whole, to those that would be expected from a normal distribution (i.e. the dots fall within the confidence band around the line).
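To see what the two axes of a Q-Q plot contain, the sketch below pairs sorted scores with the quantiles a normal distribution would produce at the same positions. It uses base R rather than qqplotr, and the arousal vector is made up, so treat it as an illustration of the idea rather than a reproduction of Figure 15 (qqplotr may differ in detail).

```r
# Hypothetical arousal scores (not values from discovr::notebook)
arousal <- c(12, 15, 18, 20, 21, 23, 25, 27, 30, 34)

n           <- length(arousal)
sample_q    <- sort(arousal)            # observed (sample) quantiles
theoretical <- qnorm(ppoints(n))        # matching standard-normal quantiles

# Base R's qqnorm() returns the same pairs; plot.it = FALSE skips the drawing
qq <- qqnorm(arousal, plot.it = FALSE)

data.frame(theoretical, sample_q)
```

If the scores are roughly normal, plotting sample_q against theoretical gives points that fall close to a straight line.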

Task 6.2

The file r_exam.csv contains data on students’ performance on an SPSS exam. Four variables were measured: exam (first-year SPSS exam scores as a percentage), computer (measure of computer literacy in percent), lectures (percentage of statistics lectures attended) and numeracy (a measure of numerical ability out of 15). There is a variable called uni indicating whether the student attended Sussex University (where I work) or Duncetown University. Compute and interpret summary statistics for exam, computer, lectures and numeracy for the sample as a whole.

Load the data (see Tip 2).

rexam_tib <- discovr::r_exam

Table 9 shows the table of descriptive statistics for the four variables in this example. From this table, we can see that, on average, students attended nearly 60% of lectures, obtained 58% in their exam, scored only 51% on the computer literacy test, and only 5 out of 15 on the numeracy test. In addition, the confidence interval for computer literacy was relatively narrow compared to that of the percentage of lectures attended and exam scores.

| Variable | Mean | 95% CI (Mean) | SD | IQR | Range | Skewness | Kurtosis | n | n_Missing |
|---|---|---|---|---|---|---|---|---|---|
| exam | 58.10 | (53.90, 61.24) | 21.32 | 37.00 | (15.00, 99.00) | -0.11 | -1.11 | 100 | 0 |
| computer | 50.71 | (49.17, 52.21) | 8.26 | 10.75 | (27.00, 73.00) | -0.17 | 0.36 | 100 | 0 |
| lectures | 59.77 | (54.93, 63.51) | 21.68 | 28.75 | (8.00, 100.00) | -0.42 | -0.18 | 100 | 0 |
| numeracy | 4.85 | (4.42, 5.31) | 2.71 | 4.00 | (1.00, 14.00) | 0.96 | 0.95 | 100 | 0 |

Task 6.3

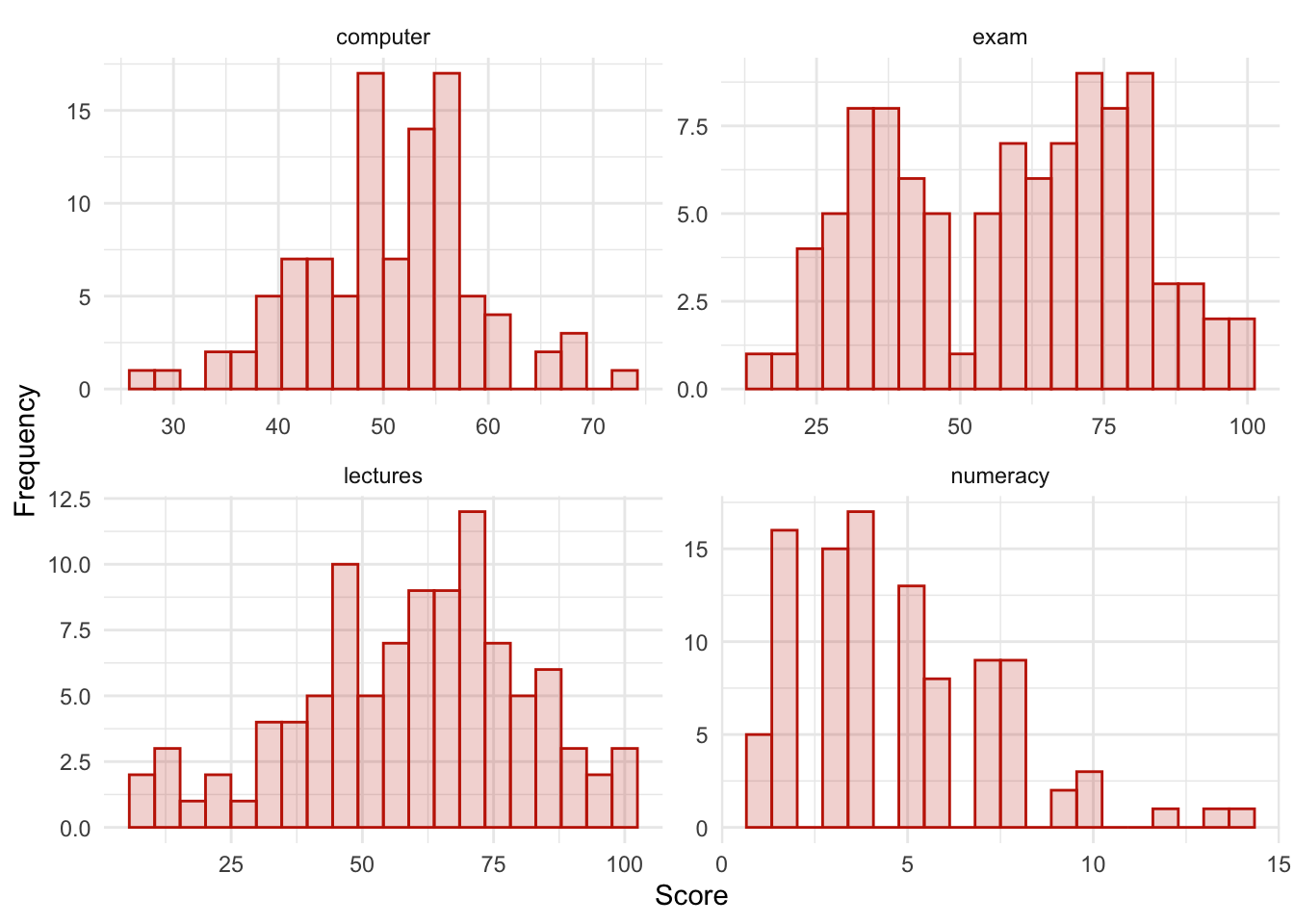

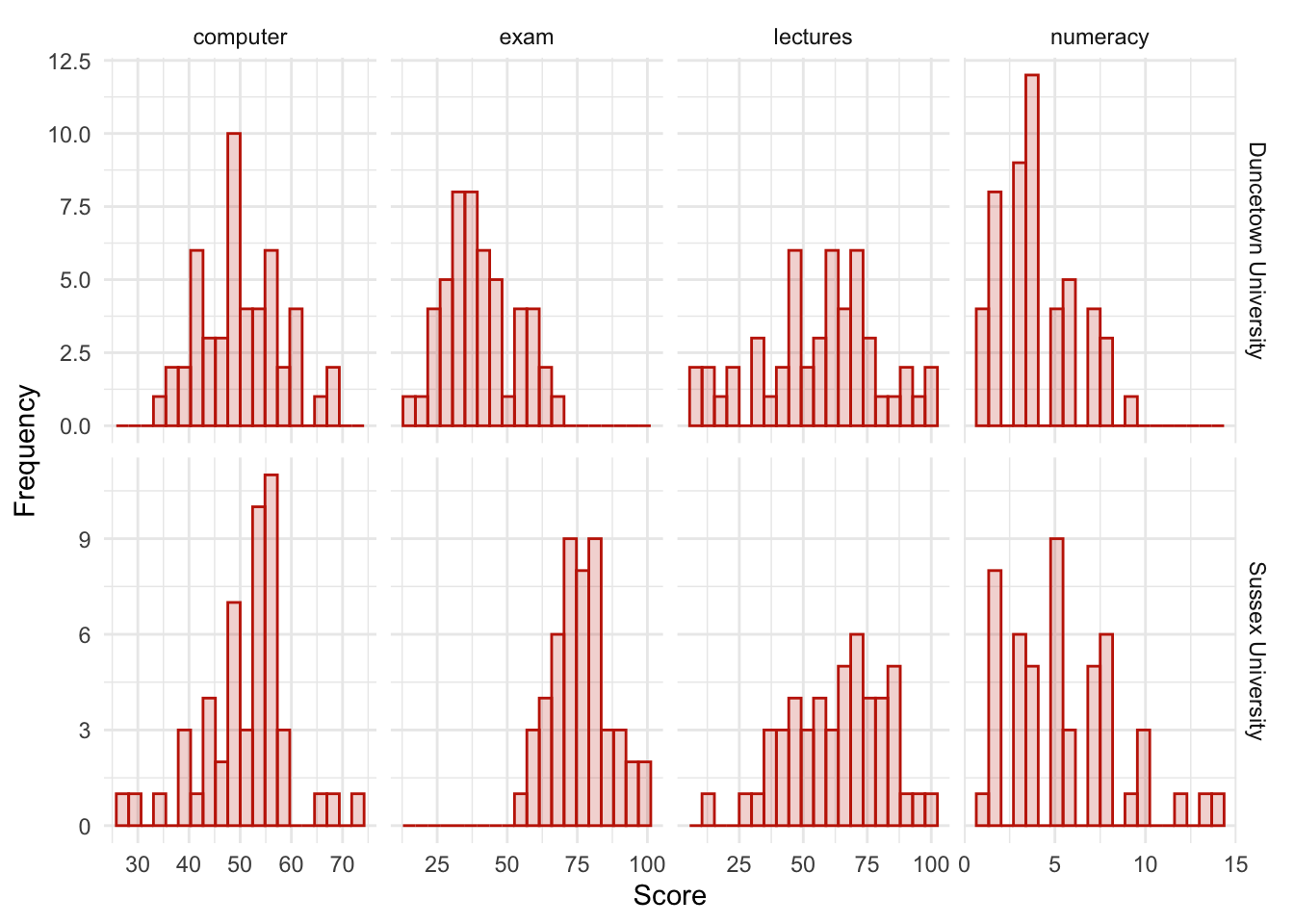

Produce histograms for each of the four measures in the previous task and interpret them

The data are in messy format, so first let’s make the data tidy:

rexam_tidy_tib <- rexam_tib |>

pivot_longer(

cols = exam:numeracy,

values_to = "score",

names_to = "measure"

)

The data now look like Table 10.

DT::datatable(rexam_tidy_tib)

To create the plot in Figure 16 use the code below.

ggplot(rexam_tidy_tib, aes(score)) +

geom_histogram(bins = 20, fill = "#C42503FF", colour = "#C42503FF", alpha = 0.2) +

labs(y = "Frequency", x = "Score") +

facet_wrap(~measure, scales = "free") +

theme_minimal()

The histograms show us several things. The exam scores are very interesting because this distribution is quite clearly not normal; in fact, it looks suspiciously bimodal (there are two peaks, indicative of two modes). It looks as though computer literacy is somewhat normally distributed (a few people are very good with computers and a few are very bad, but the majority of people have a similar degree of knowledge) as is the lecture attendance. Both are a little negatively skewed. Finally, the numeracy test has produced very positively skewed data (the majority of people did very badly on this test and only a few did well).

Descriptive statistics and histograms are a good way of getting an instant picture of the distribution of your data. This snapshot can be very useful: for example, the bimodal distribution of exam scores instantly indicates a trend that students are typically either very good at statistics or struggle with it (there are relatively few who fall in between these extremes). Intuitively, this finding fits with the nature of the subject: statistics is very easy once everything falls into place, but before that enlightenment occurs it all seems hopelessly difficult!

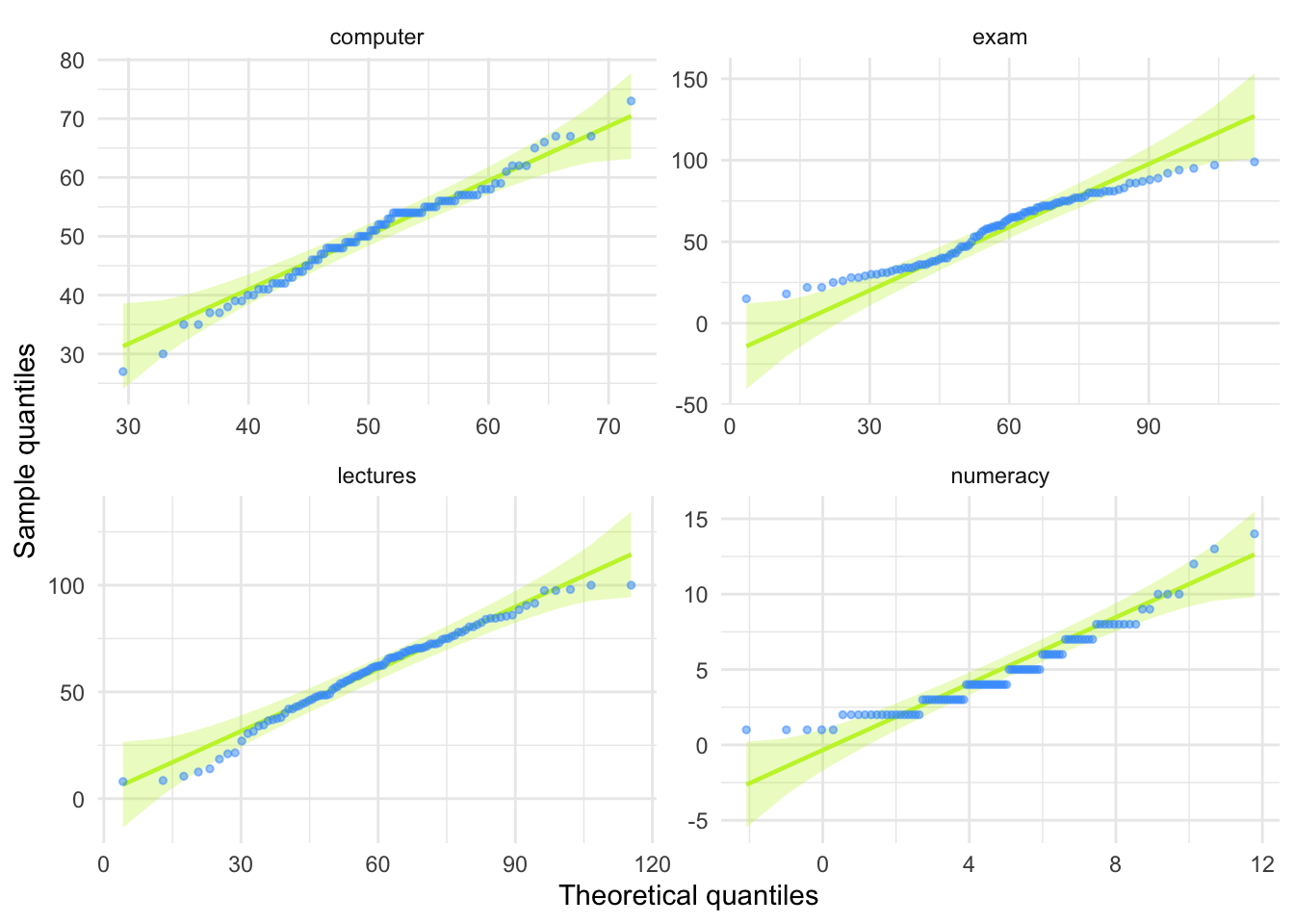

Task 6.4

Produce Q-Q plots for the four measures in Task 2.

Figure 17 was created using the code below.

ggplot(rexam_tidy_tib, aes(sample = score)) +

qqplotr::stat_qq_band(fill = "#C4F134FF", alpha = 0.3) +

qqplotr::stat_qq_line(colour = "#C4F134FF") +

qqplotr::stat_qq_point(colour = "#3E9BFEFF", alpha = 0.5, size = 1) +

labs(x = "Theoretical quantiles", y = "Sample quantiles") +

facet_wrap(~measure, scales = "free") +

theme_minimal()

Task 6.5

Use Task 2 and 4 to interpret skew and kurtosis for each of the four measures.

The skewness values are calculated in the table for Task 2. For most of the measures the value of skewness is fairly close to zero, which is the value expected in a normal distribution. The exception is numeracy scores, for which skew is about 1, although this is within acceptable limits. Looking at the Q-Q plots, none of them show the characteristic upward or downward bend associated with skew.

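For kurtosis, computer literacy and lecture attendance have kurtosis around the expected value of 3. (The Kurtosis column in the table for Task 2 appears to be on the excess-kurtosis scale, on which a normal distribution scores 0, so add 3 to the tabled values to get the figures quoted here.) The Q-Q plots confirm that these measures show the characteristic patterns of normality.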

Exam scores have kurtosis below 2 suggesting lighter tails than you’d expect in a normal distribution, which is also shown in the Q-Q plot with observed quantiles at the low end being higher than expected and observed quantiles at the high end being lower than expected.

Numeracy scores have kurtosis close to 4 indicating heavier tails than expected in a normal distribution, but the Q-Q plot looks relatively normal (although observed quantiles rise above the normal line at the low end).
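The kurtosis values quoted in this answer (below 2, around 3, close to 4) are consistent with the Kurtosis column of the Task 2 table if that column reports excess kurtosis, that is, kurtosis minus 3. That reading is an assumption based on the numbers lining up. A short sketch of the conversion, using the rounded values copied from the table:

```r
# Kurtosis values copied from the Task 2 table (rounded to 2 d.p.),
# treated here as excess kurtosis (an assumption)
excess <- c(exam = -1.11, computer = 0.36, lectures = -0.18, numeracy = 0.95)

raw <- excess + 3   # raw kurtosis: a normal distribution scores 3
raw
```

On this scale exam scores come out just under 2, computer literacy and lecture attendance land near 3, and numeracy is close to 4, matching the interpretation above.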

Task 6.6

Produce summary statistics for the four measures separately for each university.

Table 11 shows the summary statistics split by university. From this table it is clear that Sussex students scored higher on both their exam and the numeracy test than their Duncetown counterparts. In fact, looking at the means reveals that, on average, Sussex students scored an amazing 36 percentage points more on the exam than Duncetown students, and had higher numeracy scores too (what can I say, my students are the best). Skew statistics are all close to the expected value of 0 and most of the kurtosis values are close to the expected value of 3. The notable exception is the computer literacy scores in the Sussex students, which have a kurtosis value above 4 suggesting heavy tails.

| uni | Variable | Mean | 95% CI (Mean) | SD | IQR | Range | Skewness | Kurtosis | n | n_Missing |
|---|---|---|---|---|---|---|---|---|---|---|
| Duncetown University | exam | 40.18 | (36.97, 43.73) | 12.59 | 17.50 | (15.00, 66.00) | 0.31 | -0.57 | 50 | 0 |
| Duncetown University | computer | 50.26 | (48.07, 52.22) | 8.07 | 12.25 | (35.00, 67.00) | 0.23 | -0.51 | 50 | 0 |
| Duncetown University | lectures | 56.26 | (51.95, 61.96) | 23.77 | 28.75 | (8.00, 100.00) | -0.31 | -0.38 | 50 | 0 |
| Duncetown University | numeracy | 4.12 | (3.63, 4.63) | 2.07 | 3.25 | (1.00, 9.00) | 0.51 | -0.48 | 50 | 0 |
| Sussex University | exam | 76.02 | (73.60, 79.34) | 10.21 | 12.25 | (56.00, 99.00) | 0.27 | -0.26 | 50 | 0 |
| Sussex University | computer | 51.16 | (49.19, 53.36) | 8.51 | 9.25 | (27.00, 73.00) | -0.54 | 1.38 | 50 | 0 |
| Sussex University | lectures | 63.27 | (58.20, 67.81) | 18.97 | 30.00 | (12.50, 100.00) | -0.36 | -0.22 | 50 | 0 |
| Sussex University | numeracy | 5.58 | (4.79, 6.30) | 3.07 | 5.00 | (1.00, 14.00) | 0.79 | 0.26 | 50 | 0 |
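The size of the exam gap between the two universities can be checked directly from the means in the table. Because exam scores are percentages, the difference between the means is a gap in percentage points, not a relative increase; the sketch below computes both so the two are not confused.

```r
# Exam means copied from the table above (percent)
exam_mean <- c(duncetown = 40.18, sussex = 76.02)

diff_points <- exam_mean[["sussex"]] - exam_mean[["duncetown"]]   # absolute gap
ratio       <- exam_mean[["sussex"]] / exam_mean[["duncetown"]]   # relative size

round(c(diff_points = diff_points, ratio = ratio), 2)
```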

Task 6.7

Produce and interpret histograms for the four measures split by the two universities.

ggplot(rexam_tidy_tib, aes(score)) +

geom_histogram(bins = 20, fill = "#C42503FF", colour = "#C42503FF", alpha = 0.2) +

labs(y = "Frequency", x = "Score") +

facet_grid(uni~measure, scales = "free") +

theme_minimal()

The histograms of these variables split according to the university attended show numerous things. The first interesting thing to note is that for exam marks, the distributions are both fairly normal. This seems odd because the overall distribution was bimodal. However, it starts to make sense when you consider that for Duncetown the distribution is centred around a mark of about 40%, but for Sussex the distribution is centred around a mark of about 76%. This illustrates how important it is to look at distributions within groups. If we were interested in comparing Duncetown to Sussex it wouldn’t matter that overall the distribution of scores was bimodal; all that’s important is that each group comes from a normal population, and in this case it appears to be true. When the two samples are combined, these two normal distributions create a bimodal one (one of the modes being around the centre of the Duncetown distribution, and the other being around the centre of the Sussex data!).
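One way to see how two fairly tight within-university distributions turn into one wide, two-peaked overall distribution is to rebuild the overall spread from the group summaries. The sketch below uses only the rounded means, standard deviations and sample sizes from the earlier tables, so the result is approximate.

```r
# Summary values for exam scores, copied from the earlier tables (rounded)
n <- c(duncetown = 50, sussex = 50)
m <- c(duncetown = 40.18, sussex = 76.02)
s <- c(duncetown = 12.59, sussex = 10.21)

grand_mean <- sum(n * m) / sum(n)

ss_within  <- sum((n - 1) * s^2)              # spread inside each university
ss_between <- sum(n * (m - grand_mean)^2)     # spread due to the gap in means

overall_sd    <- sqrt((ss_within + ss_between) / (sum(n) - 1))
share_between <- ss_between / (ss_within + ss_between)

round(c(grand_mean = grand_mean, overall_sd = overall_sd,
        share_between = share_between), 2)
```

The rebuilt overall mean and standard deviation agree with the whole-sample values for exam reported earlier (58.10 and 21.32), and most of the total variation comes from the gap between the two university means rather than from spread within either group.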

For numeracy scores, the distribution is slightly positively skewed (there is a larger concentration at the lower end of scores) in both the Duncetown and Sussex groups. Therefore, the overall positive skew observed before is due to the mixture of universities.

The remaining distributions look vaguely normal. The only exception is the computer literacy scores for the Sussex students. This is a fairly flat distribution apart from a huge peak between 50 and 60%. It looks potentially slightly heavy-tailed. This suggests positive kurtosis and, in fact, kurtosis is above 4 in this group (see previous task).

Chapter 7

Task 7.1

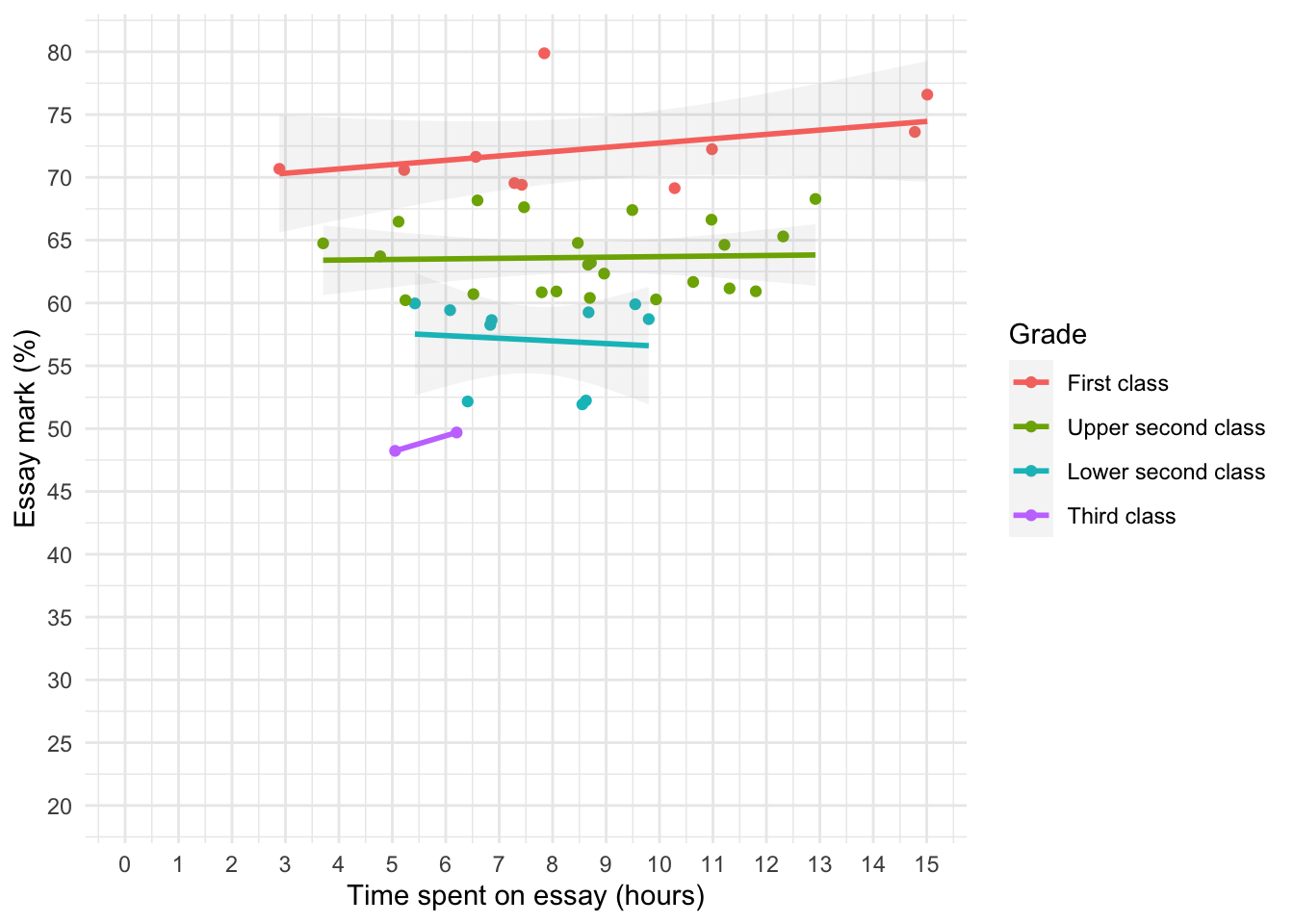

A student was interested in whether there was a positive relationship between the time spent doing an essay and the mark received. He got 45 of his friends and timed how long they spent writing an essay (hours) and the percentage they got in the essay (essay). He also translated these grades into their degree classifications (grade): in the UK, a student can get a first-class mark (the best), an upper-second-class mark, a lower second, a third, a pass or a fail (the worst). Using the data in the file essay_marks.csv find out what the relationship was between the time spent doing an essay and the eventual mark in terms of percentage and degree class (draw a scatterplot too).

Load the data using (see Tip 2):

essay_tib <- discovr::essay_marks

We’re interested in looking at the relationship between hours spent on an essay and the grade obtained. We could create a scatterplot of hours spent on the essay (x-axis) and essay mark (y-axis). In Figure 19 I’ve chosen to highlight the degree classification grades using different colours.

ggplot(essay_tib, aes(hours, essay, colour = grade)) +

geom_point() +

geom_smooth(method = "lm", alpha = 0.1) +

coord_cartesian(xlim = c(0, 15), ylim = c(20, 80)) +

scale_x_continuous(breaks = seq(0, 15, 1)) +

scale_y_continuous(breaks = seq(20, 80, 5)) +

labs(x = "Time spent on essay (hours)", y = "Essay mark (%)", colour = "Grade", fill = "Grade") +

theme_minimal()

To get the correlation:

essay_r <- correlation(data = essay_tib, select = c("hours", "essay"))

Table 13 indicates that the relationship between time spent writing an essay and grade awarded was not significant, \(r\) = 0.27 (-0.03, 0.52), t(43) = 1.81, p = 0.077.

display(essay_r)

| Parameter1 | Parameter2 | r | 95% CI | t(43) | p |
|---|---|---|---|---|---|
| hours | essay | 0.27 | (-0.03, 0.52) | 1.81 | 0.077 |

p-value adjustment method: Holm (1979) Observations: 45
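The test statistic and confidence interval in this output can be approximately reconstructed from r and the sample size alone. The sketch below uses the rounded r = 0.27, so its t and p differ slightly from the reported values. The interval is built with Fisher's z transformation, which is an assumed method here: it is a standard approach, but not necessarily the exact calculation that correlation() performs.

```r
# Values copied from the output above (r is rounded to 2 d.p.)
r <- 0.27
n <- 45

df     <- n - 2
t_stat <- r * sqrt(df) / sqrt(1 - r^2)     # t statistic for H0: rho = 0
p      <- 2 * pt(-abs(t_stat), df)         # two-sided p-value

# Approximate 95% CI via Fisher's z transformation (an assumed method)
z  <- atanh(r)
se <- 1 / sqrt(n - 3)
ci <- tanh(z + c(-1, 1) * qnorm(0.975) * se)

round(c(t = t_stat, p = p, lower = ci[1], upper = ci[2]), 3)
```

The interval spans zero and p is above .05, which is why the relationship is described as not significant.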

The second part of the question asks us to do the same analysis but when the percentages are recoded into degree classifications. The degree classifications are ordinal data (not interval): they are ordered categories. So we shouldn’t use Pearson’s test statistic, but Spearman’s and Kendall’s ones instead. grade is a factor:

essay_tib$grade

[1] Upper second class First class Third class First class

[5] Lower second class Upper second class Upper second class Upper second class

[9] Upper second class Third class Upper second class First class

[13] First class Lower second class Lower second class Upper second class

[17] Upper second class Upper second class Upper second class Lower second class

[21] Lower second class First class Upper second class Upper second class

[25] First class Upper second class Lower second class Lower second class