Labcoat Leni

Leni is a budding young scientist and he’s fascinated by real research. He says, ‘Andy, I like an example about using an eel as a cure for constipation as much as the next guy, but all of your data are made up. We need some real examples, buddy!’ Leni walked the globe, a lone data warrior in a thankless quest for real data. When Leni appears in Discovering Statistics Using R and RStudio (2nd edition) he brings with him real data, from a real research study, to analyse. These examples let you practice your

Keep it real.

If a chapter isn’t listed it’s because it doesn’t contain a Labcoat Leni box

These solutions assume you have a setup code chunk at the start of your document that loads the easystats and tidyverse packages:

This document contains abridged sections from Discovering Statistics Using R and RStudio by Andy Field so there are some copyright considerations. You can use this material for teaching and non-profit activities but please do not meddle with it or claim it as your own work. See the full license terms at the bottom of the page.

Many tasks will require you to load data. You can do this in one of two ways. The easiest is to grab the data directly from the discovr package using the general code:

my_tib <- discovr::name_of_datain which you replace my_tib with the name you wish to give the data once loaded and name_of_data is the data you wish to load. In RStudio if you type discovr:: it will list the datasets available. For example, to load the data called students into a tibble called students_tib we’d execute:

students_tib <- discovr::studentsAlternatively, download the CSV file from https://www.discovr.rocks/csv/name_of_file replacing name_of_file with the name of the csv file. For example, to download students.csv use this url: https://www.discovr.rocks/csv/students.csv

Set up an RStudio project (exactly as described in Chapter 4), place the csv file in your data folder and use this general code:

in which you replace my_tib with the name you wish to give the data once loaded and name_of_file.csv is the name of the file you wish to read into here to locate the file within the project and read_csv() to read it into students.csv you would execute

Chapter 1

Is Friday 13th unlucky?

Let’s begin with accidents and poisoning on Friday the 6th. First, arrange the scores in ascending order: 1, 1, 4, 6, 9, 9.

The median will be the (n + 1)/2th score. There are 6 scores, so this will be the 7/2 = 3.5th. The 3.5th score in our ordered list is half way between the 3rd and 4th scores which is (4+6)/2= 5 accidents.

The mean is 5 accidents:

\[ \begin{align} \bar{X} &= \frac{\sum_{i = 1}^{n}x_i}{n} \\ &= \frac{1 + 1 + 4 + 6 + 9 + 9}{6} \\ &= \frac{30}{6} \\ &= 5 \end{align} \]

The lower quartile is the median of the lower half of scores. If we split the data in half, there will be 3 scores in the bottom half (lowest scores) and 3 in the top half (highest scores). The median of the bottom half will be the (3+1)/2 = 2nd score below the mean. Therefore, the lower quartile is 1 accident.

The upper quartile is the median of the upper half of scores. If we again split the data in half and take the highest 3 scores, the median will be the (3+1)/2 = 2nd score above the mean. Therefore, the upper quartile is 9 accidents.

The interquartile range is the difference between the upper and lower quartiles: 9 − 1 = 8 accidents.

To calculate the sum of squares, first take the mean from each score, then square this difference, and finally, add up these squared values:

| Score | Error (Score − Mean) |

Error Squared |

|---|---|---|

| 1 | –4 | 16 |

| 1 | –4 | 16 |

| 4 | –1 | 1 |

| 6 | 1 | 1 |

| 9 | 4 | 16 |

| 9 | 4 | 16 |

So, the sum of squared errors is: 16 + 16 + 1 + 1 + 16 + 16 = 66.

The variance is the sum of squared errors divided by the degrees of freedom (N − 1):

\[ s^{2} = \frac{\text{sum of squares}}{N- 1} = \frac{66}{5} = 13.20 \]

The standard deviation is the square root of the variance:

\[ s = \sqrt{\text{variance}} = \sqrt{13.20} = 3.63 \]

Next let’s look at accidents and poisoning on Friday the 13th. First, arrange the scores in ascending order: 5, 5, 6, 6, 7, 7.

The median will be the (n + 1)/2th score. There are 6 scores, so this will be the 7/2 = 3.5th. The 3.5th score in our ordered list is half way between the 3rd and 4th scores which is (6+6)/2 = 6 accidents.

The mean is 6 accidents:

\[ \begin{align} \bar{X} &= \frac{\sum_{i = 1}^{n}x_{i}}{n} \\ &= \frac{5 + 5 + 6 + 6 + 7 + 7}{6} \\ &= \frac{36}{6} \\ &= 6 \\ \end{align} \]

The lower quartile is the median of the lower half of scores. If we split the data in half, there will be 3 scores in the bottom half (lowest scores) and 3 in the top half (highest scores). The median of the bottom half will be the (3+1)/2 = 2nd score below the mean. Therefore, the lower quartile is 5 accidents.

The upper quartile is the median of the upper half of scores. If we again split the data in half and take the highest 3 scores, the median will be the (3+1)/2 = 2nd score above the mean. Therefore, the upper quartile is 7 accidents.

The interquartile range is the difference between the upper and lower quartiles: 7 − 5 = 2 accidents.

To calculate the sum of squares, first take the mean from each score, then square this difference, finally, add up these squared values:

| Score | Error (Score − Mean) |

Error Squared |

|---|---|---|

| 7 | 1 | 1 |

| 6 | 0 | 0 |

| 5 | –1 | 1 |

| 5 | –1 | 1 |

| 7 | 1 | 1 |

| 6 | 0 | 0 |

So, the sum of squared errors is: 1 + 0 + 1 + 1 + 1 + 0 = 4.

The variance is the sum of squared errors divided by the degrees of freedom (N − 1):

\[ s^{2} = \frac{\text{sum of squares}}{N - 1} = \frac{4}{5} = 0.8 \]

The standard deviation is the square root of the variance:

\[ s = \sqrt{\text{variance}} = \sqrt{0.8} = 0.894 \]

Next, let’s look at traffic accidents on Friday the 6th. First, arrange the scores in ascending order: 3, 5, 6, 9, 11, 11.

The median will be the (n + 1)/2th score. There are 6 scores, so this will be the 7/2 = 3.5th. The 3.5th score in our ordered list is half way between the 3rd and 4th scores. The 3rd score is 6 and the 4th score is 9. Therefore the 3.5th score is (6+9)/2 = 7.5 accidents.

The mean is 7.5 accidents:

\[ \begin{align} \bar{X} &= \frac{\sum_{i = 1}^{n}x_{i}}{n} \\ &= \frac{3 + 5 + 6 + 9 + 11 + 11}{6} \\ &= \frac{45}{6} \\ &= 7.5 \end{align} \]

The lower quartile is the median of the lower half of scores. If we split the data in half, there will be 3 scores in the bottom half (lowest scores) and 3 in the top half (highest scores). The median of the bottom half will be the (3+1)/2 = 2nd score below the mean. Therefore, the lower quartile is 5 accidents.

The upper quartile is the median of the upper half of scores. If we again split the data in half and take the highest 3 scores, the median will be the (3+1)/2 = 2nd score above the mean. Therefore, the upper quartile is 11 accidents.

The interquartile range is the difference between the upper and lower quartiles: 11 − 5 = 6 accidents.

To calculate the sum of squares, first take the mean from each score, then square this difference, finally, add up these squared values:

| Score | Error (Score − Mean) |

Error Squared |

|---|---|---|

| 9 | 1.5 | 2.25 |

| 6 | –1.5 | 2.25 |

| 11 | 3.5 | 12.25 |

| 11 | 3.5 | 12.25 |

| 3 | –4.5 | 20.25 |

| 5 | –2.5 | 6.25 |

So, the sum of squared errors is: 2.25 + 2.25 + 12.25 + 12.25 + 20.25 + 6.25 = 55.5.

The variance is the sum of squared errors divided by the degrees of freedom (N − 1):

\[ s^{2} = \frac{\text{sum of squares}}{N - 1} = \frac{55.5}{5} = 11.10 \]

The standard deviation is the square root of the variance:

\[ s = \sqrt{\text{variance}} = \sqrt{11.10} = 3.33 \]

Finally, let’s look at traffic accidents on Friday the 13th. First, arrange the scores in ascending order: 4, 10, 12, 12, 13, 14.

The median will be the (n + 1)/2th score. There are 6 scores, so this will be the 7/2 = 3.5th. The 3.5th score in our ordered list is half way between the 3rd and 4th scores. The 3rd score is 12 and the 4th score is 12. Therefore the 3.5th score is (12+12)/2= 12 accidents.

The mean is 10.83 accidents:

\[ \begin{align} \bar{X} &= \frac{\sum_{i = 1}^{n}x_{i}}{n} \\ &= \frac{4 + 10 + 12 + 12 + 13 + 14}{6} \\ &= \frac{65}{6} \\ &= 10.83 \end{align} \]

The lower quartile is the median of the lower half of scores. If we split the data in half, there will be 3 scores in the bottom half (lowest scores) and 3 in the top half (highest scores). The median of the bottom half will be the (3+1)/2 = 2nd score below the mean. Therefore, the lower quartile is 10 accidents.

The upper quartile is the median of the upper half of scores. If we again split the data in half and take the highest 3 scores, the median will be the (3+1)/2 = 2nd score above the mean. Therefore, the upper quartile is 13 accidents.

The interquartile range is the difference between the upper and lower quartile: 13 − 10 = 3 accidents.

To calculate the sum of squares, first take the mean from each score, then square this difference, finally, add up these squared values:

| Score | Error (Score − Mean) |

Error Squared |

|---|---|---|

| 4 | –6.83 | 46.65 |

| 10 | –0.83 | 0.69 |

| 12 | 1.17 | 1.37 |

| 12 | 1.17 | 1.37 |

| 13 | 2.17 | 4.71 |

| 14 | 3.17 | 10.05 |

So, the sum of squared errors is: 46.65 + 0.69 + 1.37 + 1.37 + 4.71 + 10.05 = 64.84.

The variance is the sum of squared errors divided by the degrees of freedom (N − 1):

\[ s^{2} = \frac{\text{sum of squares}}{N- 1} = \frac{64.84}{5} = 12.97 \]

The standard deviation is the square root of the variance:

\[ s = \sqrt{\text{variance}} = \sqrt{12.97} = 3.6 \]

Chapter 3

Researcher degrees of freedom: a sting in the tale

No solution required.

Chapter 4

Gonna be a rock ‘n’ roll singer

Data from Oxoby (2008).

Using a task from experimental economics called the ultimatum game, individuals are assigned the role of either proposer or responder and paired randomly. Proposers were allocated $10 from which they had to make a financial offer to the responder (i.e., $2). The responder can accept or reject this offer. If the offer is rejected neither party gets any money, but if the offer is accepted the responder keeps the offered amount (e.g., $2), and the proposer keeps the original amount minus what they offered (e.g., $8). For half of the participants the song ‘It’s a long way to the top’ sung by Bon Scott was playing in the background; for the remainder ‘Shoot to thrill’ sung by Brian Johnson was playing. Oxoby measured the offers made by proposers, and the minimum accepted by responders (called the minimum acceptable offer). He reasoned that people would accept lower offers and propose higher offers when listening to something they like (because of the ‘feel-good factor’ the music creates). Therefore, by comparing the value of offers made and the minimum acceptable offers in the two groups he could see whether people have more of a feel-good factor when listening to Bon or Brian. These data are estimated from Figures 1 and 2 in the paper because I couldn’t get hold of the author to get the original data files. The offers made (in dollars) are as follows (there were 18 people per group):

- Bon Scott group: 1, 2, 2, 2, 2, 3, 3, 3, 3, 3, 4, 4, 4, 4, 4, 5, 5, 5

- Brian Johnson group: 2, 3, 3, 3, 3, 3, 4, 4, 4, 4, 4, 5, 5, 5, 5, 5, 5, 5

Create a tibble called

oxoby_tiband enter these data into it. (Hint: There should be two variables, singer which is a factor and offer which is a double).

There are several ways to enter the Oxoby data, here’s one of them (the resulting data are in Table 1):

DT::datatable(oxoby_tib)Chapter 5

Gonna be a rock ‘n’ roll singer (again!)

Data from Oxoby (2008).

In Labcoat Leni’s Real Research 4.1 we came across a study that compared economic behaviour while different music by AC/DC played in the background. Specifically, Oxoby manipulated whether the background song was sung by AC/DC’s original singer (Bonn Scott) or his replacement (Brian Johnson). He measured how many offers participants accepted and the minimum offer they would accept (

acdc.csv). See Labcoat Leni’s Real Research 4.1 for more detail on the study. We entered the data for this study in the previous chapter; now let’s plot it. Produce separate histograms for the number of offers and the minumum acceptable offer and in both cases split the data by which singer was singing in the background music. Compare these plots with Figures 1 and 2 in the original article.

Load the data using (see Tip 1):

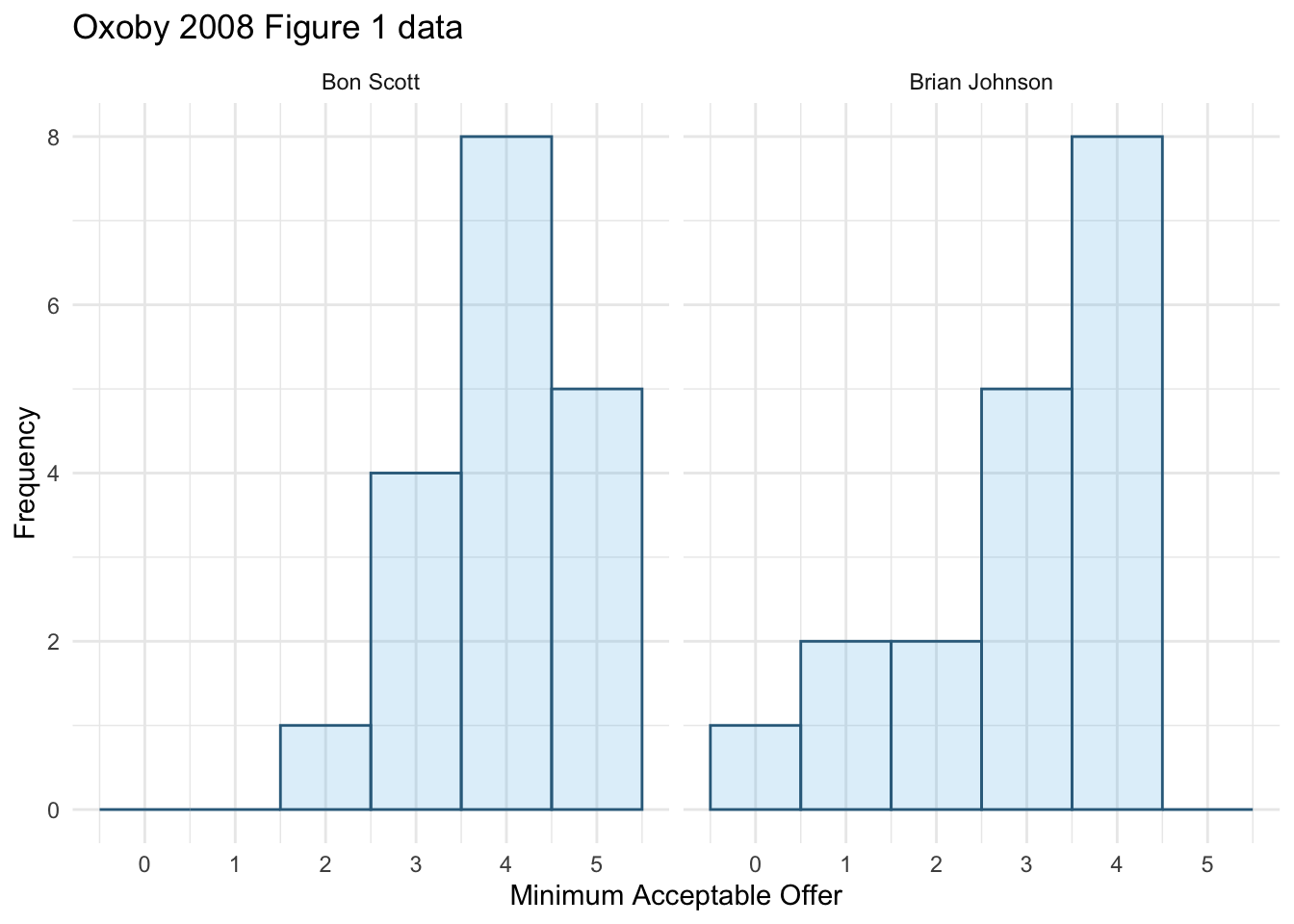

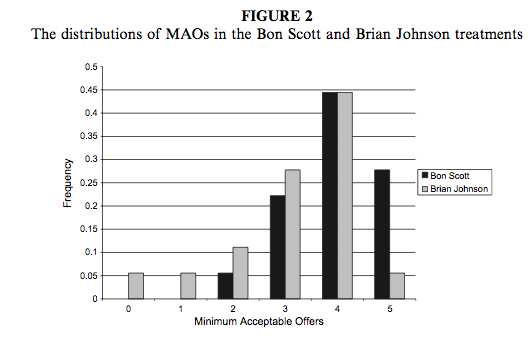

oxoby_tib <- discovr::acdcWe can produce a plot of mao as in Figure 1.

ggplot(oxoby_tib, aes(mao)) +

geom_histogram(binwidth = 1, fill = "#56B4E9", colour = "#336c8b", alpha = 0.2) +

labs(y = "Frequency", x = "Minimum Acceptable Offer", title = "Oxoby 2008 Figure 1 data") +

scale_x_continuous(breaks = seq(0, 5, 1)) +

facet_wrap(~singer) +

theme_minimal()

We can compare the resulting histogram with Figure 2 from the original article (reproduced in Figure 2). Both plots show that MAOs were higher when participants heard the music of Bon Scott. This suggests that more offers would be rejected when listening to Bon Scott than when listening to Brian Johnson.

Next we want to produce a histogram for number of offers made. To do this, use the same code except edit the name of the x-variable in the first line to be offer and also change the label for the x-axis.

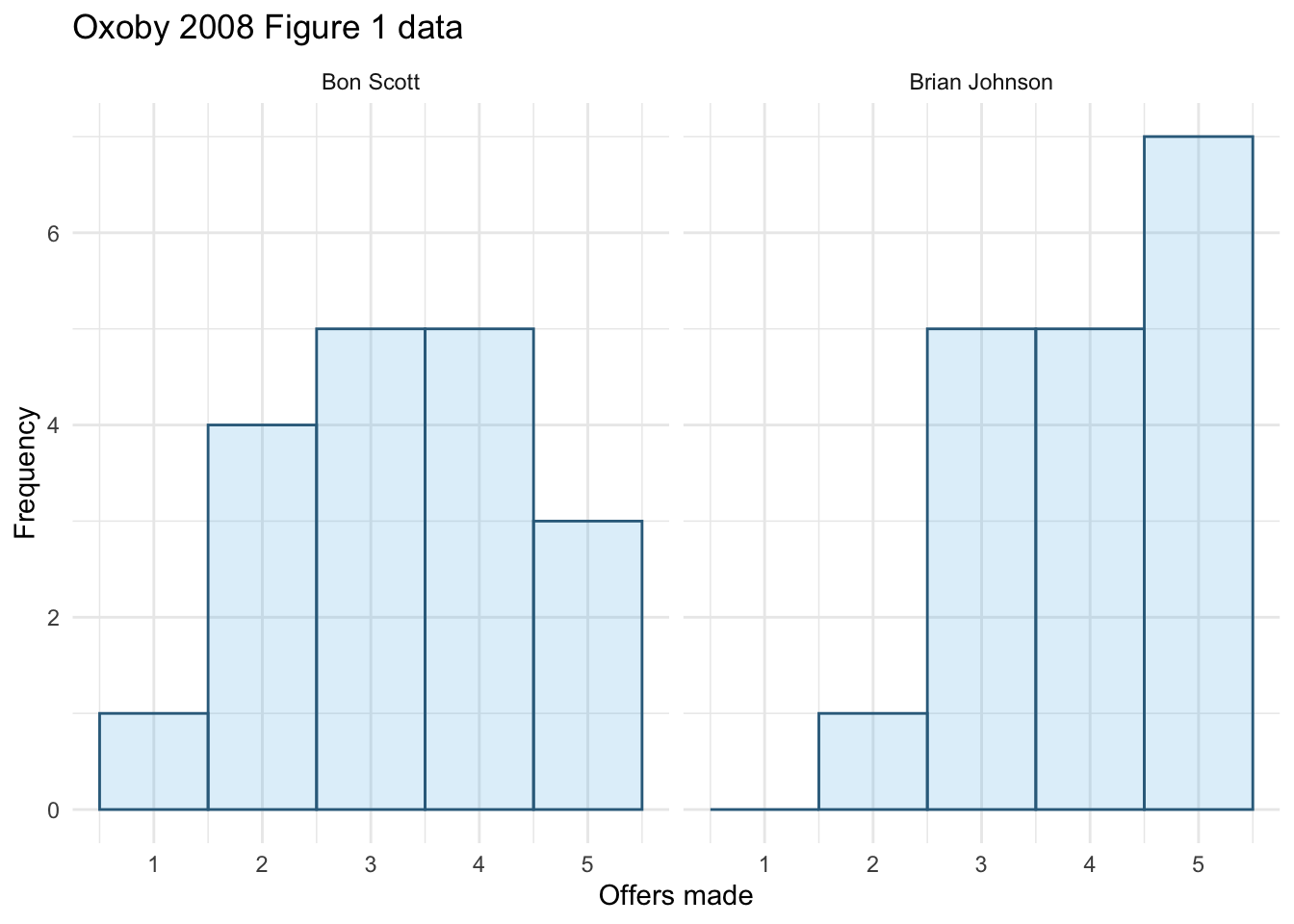

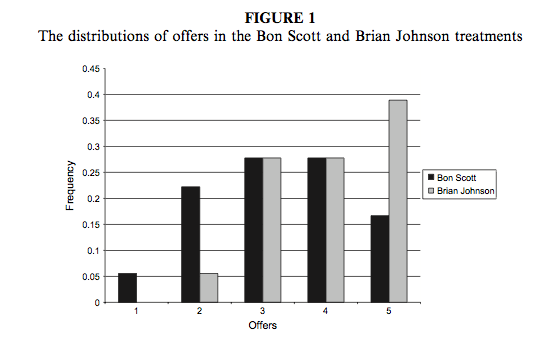

We can produce a plot of offer as in Figure 3.

ggplot(oxoby_tib, aes(offer)) +

geom_histogram(binwidth = 1, fill = "#56B4E9", colour = "#336c8b", alpha = 0.2) +

labs(y = "Frequency", x = "Offers made", title = "Oxoby 2008 Figure 1 data") +

scale_x_continuous(breaks = seq(0, 5, 1)) +

facet_wrap(~singer) +

theme_minimal()

We can compare the resulting population pyramid above with Figure 1 from the original article (reproduced in Figure 4). Both graphs show that offers made were lower when participants heard the music of Bon Scott.

Seeing red

The data are from Johns et al. (2012).

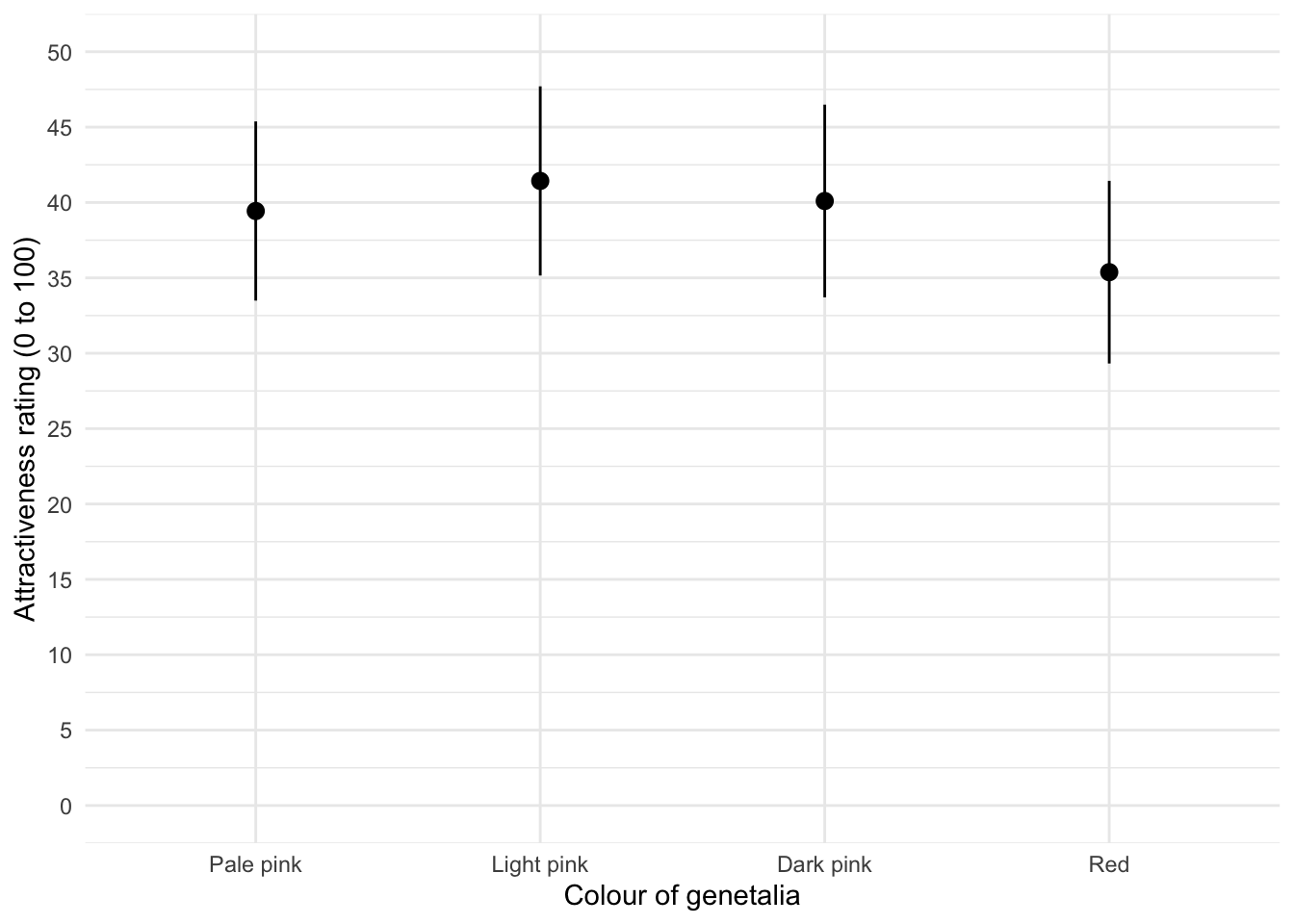

Certain theories suggest that heterosexual males have evolved a predisposition towards the colour red because it is sexually salient. The theory also suggests that women use the colour red as a proxy signal for genital colour to indicate ovulation and sexual proceptivity. Decide for yourself how plausible this theory seems, but a hypothesis to test it is that using the colour red in this way ought to attract men (otherwise it’s a pointless strategy). In a novel study, Johns et al. (2012) tested this idea by manipulating the colour of four pictures of female genitalia to make them increasing shades of red (pale pink, light pink, dark pink, red) relative to the surrounding skin colour. Heterosexual males rated the resulting 16 pictures from 0 (unattractive) to 100 (attractive). The data are in the file

johns_2012.csv. Draw an error bar chart of the mean ratings for the four different colours. Do you think men preferred red genitals? (Remember, if the theory is correct then red should be rated highest.) (We analyse these data at the end of Chapter 16.)

Load the data using (see Tip 1):

johns_tib <- discovr::johns_2012The plot (Figure 5) can be made using the following code:

ggplot(johns_tib, aes(colour, attractiveness)) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange") +

labs(x = "Colour of genetalia", y = "Attractiveness rating (0 to 100)") +

coord_cartesian(ylim = c(0, 50)) +

scale_y_continuous(breaks = seq(0, 50, 5)) +

theme_minimal()

The mean ratings for all colours are fairly similar, suggesting that men don’t prefer the colour red. In fact, the colour red has the lowest mean rating, suggesting that men liked the red genitalia the least. The light pink genital colour had the highest mean rating, but don’t read anything into that: the means are all very similar.

Chapter 7

Why do you like your lecturers?

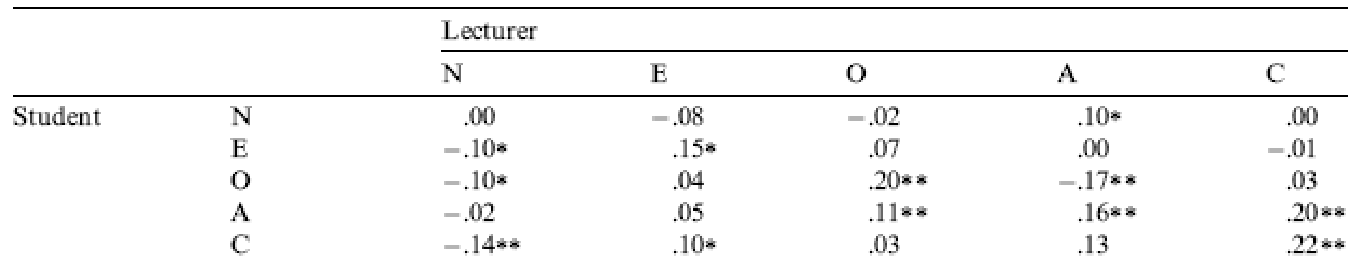

Data from Chamorro-Premuzic et al. (2008).

As students you probably have to rate your lecturers at the end of the course. There will be some lecturers you like and others you don’t. As a lecturer I find this process horribly depressing (although this has a lot to do with the fact that I tend to focus on negative feedback and ignore the good stuff). There is some evidence that students tend to pick courses of lecturers they perceive to be enthusastic and good communicators. In a fascinating study, Tomas Chamorro-Premuzic and his colleagues (Chamorro-Premuzic et al., 2008) tested the hypothesis that students tend to like lecturers who are like themselves. (This hypothesis will have the students on my course who like my lectures screaming in horror.)

The authors measured students’ own personalities using a very well established measure (the NEO-FFI) which measures five fundamental personality traits: neuroticism, extroversion, openness to experience, agreeableness and conscientiousness. Students also completed a questionnaire in which they were given descriptions (e.g., ‘warm: friendly, warm: sociable, cheerful, affectionate, outgoing’) and asked to rate how much they wanted to see this in a lecturer from −5 (I don’t want this characteristic in my lecturer at all) through 0 (the characteristic is not important) to +5 (I really want this characteristic in my lecturer). The characteristics were the same as those measured by the NEO-FFI.

As such, the authors had a measure of how much a student had each of the five core personality characteristics but also a measure of how much they wanted to see those same characteristics in their lecturer. Tomas and his colleagues could then test whether, for instance, extroverted students want extroverted lecturers. The data from this study are in the file

chamorro_premuzic.csv. Run Pearson correlations on these variables to see if students with certain personality characteristics want to see those characteristics in their lecturers. What conclusions can you draw

Load the data using (see Tip 1):

chamorro_tib <- discovr::chamorro_premuzicTo get the correlations use correlation() (note I have set p_adjust = "none" because they didn’t correct p-values for multiple tests in the paper). We’re interested only in the correlations between students’ personality and what they want in lecturers. We’re not interested in how their own five personality traits correlate with each other (i.e. if a student is neurotic are they conscientious too?). To focus in on these correlations we can use a trick not discussed in the book. In the book we saw that correlation() has a select argument that enables you to select the variables in the tibble that you want to include in the correlation matrix. By default the function computes that correlation between all pairs of those variables. So, if we specified:

chamorro_r <- chamorro_tib |>

correlation(data = chamorro_tib,

select = c("stu_neurotic", "stu_extro", "stu_open", "stu_agree", "stu_consc", "lec_neurotic", "lec_extro", "lec_open", "lec_agree", "lec_consc"))We would get, for example, stu_neurotic correlated with all 9 other variables. However, only care about 5 of those (the ones with the variables that relate to lecturers and begin lec_). In total, we’d get 45 correlations but we only care about 25 of them (each of the 5 student variables correlated with each of the 5 lecturer variables). In fact, correlation() has an argument select2 that allows you to select a second list of variables. When both select and select2 arguments are used, rather than correlating every variable with every other variable, the variables in the one list are correlated with all of the variables in the other list but not with the variables within the same list. Therefore we’ll use the code below to generate Table 2. (Note that every variable beginning with stu_ is correlated with every variable beginning with lec_ but not with other variables beginning with stu_].

| Parameter1 | Parameter2 | r | 95% CI | t | df | p |

|---|---|---|---|---|---|---|

| stu_neurotic | lec_neurotic | 6.54e-03 | (-0.09, 0.10) | 0.13 | 411 | 0.895 |

| stu_neurotic | lec_extro | -0.08 | (-0.20, 0.04) | -1.36 | 279 | 0.176 |

| stu_neurotic | lec_open | -0.02 | (-0.11, 0.08) | -0.37 | 414 | 0.712 |

| stu_neurotic | lec_agree | 0.10 | (4.16e-03, 0.20) | 2.05 | 411 | 0.041* |

| stu_neurotic | lec_consc | 2.72e-03 | (-0.09, 0.10) | 0.06 | 411 | 0.956 |

| stu_extro | lec_neurotic | -0.10 | (-0.19, -2.31e-03) | -2.01 | 409 | 0.045* |

| stu_extro | lec_extro | 0.15 | (0.04, 0.27) | 2.59 | 279 | 0.010* |

| stu_extro | lec_open | 0.07 | (-0.03, 0.16) | 1.39 | 412 | 0.165 |

| stu_extro | lec_agree | 4.25e-03 | (-0.09, 0.10) | 0.09 | 409 | 0.932 |

| stu_extro | lec_consc | -9.73e-03 | (-0.11, 0.09) | -0.20 | 409 | 0.844 |

| stu_open | lec_neurotic | -0.10 | (-0.20, -4.35e-03) | -2.05 | 409 | 0.041* |

| stu_open | lec_extro | 0.04 | (-0.08, 0.16) | 0.68 | 279 | 0.499 |

| stu_open | lec_open | 0.20 | (0.11, 0.29) | 4.16 | 412 | < .001*** |

| stu_open | lec_agree | -0.16 | (-0.26, -0.07) | -3.34 | 409 | < .001*** |

| stu_open | lec_consc | -0.03 | (-0.13, 0.06) | -0.68 | 409 | 0.494 |

| stu_agree | lec_neurotic | -0.02 | (-0.12, 0.08) | -0.43 | 404 | 0.667 |

| stu_agree | lec_extro | 0.05 | (-0.07, 0.17) | 0.82 | 274 | 0.412 |

| stu_agree | lec_open | 0.11 | (9.52e-03, 0.20) | 2.16 | 406 | 0.031* |

| stu_agree | lec_agree | 0.16 | (0.07, 0.26) | 3.33 | 403 | < .001*** |

| stu_agree | lec_consc | 0.20 | (0.10, 0.29) | 4.05 | 403 | < .001*** |

| stu_consc | lec_neurotic | -0.14 | (-0.23, -0.04) | -2.85 | 407 | 0.005** |

| stu_consc | lec_extro | 0.10 | (-0.02, 0.22) | 1.70 | 278 | 0.090 |

| stu_consc | lec_open | 0.03 | (-0.07, 0.12) | 0.55 | 410 | 0.582 |

| stu_consc | lec_agree | 0.13 | (0.04, 0.23) | 2.70 | 407 | 0.007** |

| stu_consc | lec_consc | 0.22 | (0.12, 0.31) | 4.47 | 407 | < .001*** |

p-value adjustment method: none Observations: 276-416

These values replicate the values reported in the original research paper (part of the authors’ table is reproduced in Figure 6 so you can see how they reported these values – match these values to the values in your output). In fact, we can display our results in a similar for using summary() as in Table 3.

| Parameter | lec_neurotic | lec_extro | lec_open | lec_agree | lec_consc |

|---|---|---|---|---|---|

| stu_neurotic | 0.01 | -0.08 | -0.02 | 0.10* | 0.00 |

| stu_extro | -0.10* | 0.15* | 0.07 | 0.00 | -0.01 |

| stu_open | -0.10* | 0.04 | 0.20*** | -0.16*** | -0.03 |

| stu_agree | -0.02 | 0.05 | 0.11* | 0.16*** | 0.20*** |

| stu_consc | -0.14** | 0.10 | 0.03 | 0.13** | 0.22*** |

p-value adjustment method: none

As for what we can conclude, well, neurotic students tend to want agreeable lecturers, \(r = .10, p = .041\); extroverted students tend to want extroverted lecturers, $r = .15, p = .010 $; students who are open to experience tend to want lecturers who are open to experience, \(r = .20, p < .001\), and don’t want agreeable lecturers, \(r = -.16, p < .001\); agreeable students want every sort of lecturer apart from neurotic. Finally, conscientious students tend to want conscientious lecturers, \(r = .22, p < .001\), and extroverted ones, \(r = .10, p = .09\) (note that the authors report the one-tailed p-value), but don’t want neurotic ones, \(r = -.14, p = .005\).

Chapter 8

I want to be loved (on Facebook)

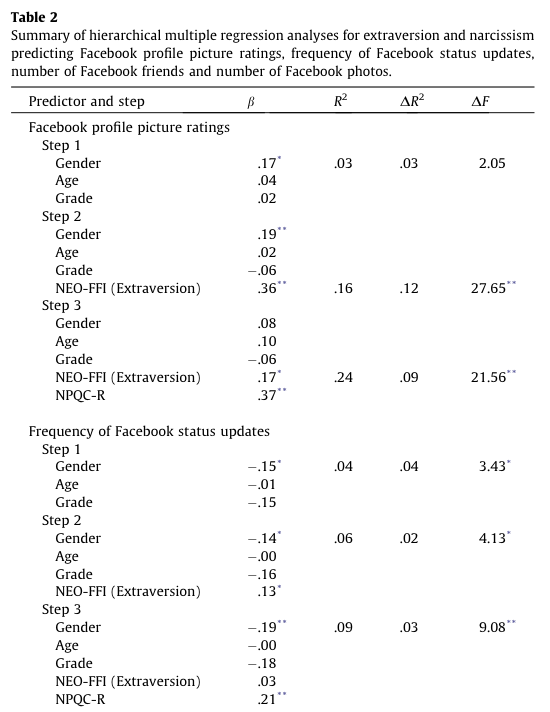

Data from Ong et al. (2011).

Social media websites such as Facebook offer an unusual opportunity to carefully manage your self-presentation to others (i.e., you can appear rad when in fact you write statistics books and wear 1980s heavy metal band T-shirts). Ong et al. (2011) examined the relationship between narcissism and behaviour on Facebook in 275 adolescents. They measured the age, sex and grade (at school), as well as extroversion and narcissism. They also measured how often (per week) these people updated their Facebook status (status), and also how they rated their own profile picture on each of four dimensions: coolness, glamour, fashionableness and attractiveness. These ratings were summed as an indicator of how positively they perceived the profile picture they had selected for their page (profile). Ong et al. hypothesized that narcissism would predict the frequency of status updates, and how positive a profile picture the person chose. To test this, they conducted two hierarchical regressions: one with status as the outcome and one with profile as the outcome. In both models they entered age, sex and grade in the first block, then added extroversion in a second block and finally narcissism in a third block. Using

ong_2011.csv, Labcoat Leni wants you to replicate the two hierarchical regressions and create a table of the results for each.

Load the data using (see Tip 1):

ong_tib <- discovr::ong_2011The first linear model predicts the frequency of status updates from the demographic variables:

status_base_lm <- lm(status ~ sex + age + grade, data = ong_tib, na.action = na.exclude)The second model adds extraversion:

status_ext_lm <- update(status_base_lm, .~. + extraversion)The final model adds narcissism:

status_ncs_lm <- update(status_ext_lm, .~. + narcissism)Table 4 compares the models. Adding extroversion, \(\chi^2\)(1) = 4.02, p = 0.045 and narcissim, \(\chi^2\)(1) = 8.98, p = 0.003, both significantly improve the fit of the model (i.e. they are significant predictors). So basically, Ong et al.’s prediction was supported in that after adjusting for age, grade and gender, narcissism significantly predicted the frequency of Facebook status updates over and above extroversion. Table 5 shows the model parameters. The positive standardized beta value (0.21) indicates a positive relationship between frequency of Facebook updates and narcissism, in that more narcissistic adolescents updated their Facebook status more frequently than their less narcissistic peers did. Compare these results to the results reported in Ong et al. (2011). The Table 2 from their paper is reproduced in Figure 7.

test_lrt(status_base_lm, status_ext_lm, status_ncs_lm) |>

display()| Name | Model | df | df_diff | Chi2 | p |

|---|---|---|---|---|---|

| status_base_lm | lm | 6 | |||

| status_ext_lm | lm | 7 | 1 | 4.02 | 0.045 |

| status_ncs_lm | lm | 8 | 1 | 8.98 | 0.003 |

model_parameters(model = status_ncs_lm, standardize = "refit") |>

display()| Parameter | Coefficient | SE | 95% CI | t(244) | p |

|---|---|---|---|---|---|

| (Intercept) | 0.38 | 0.21 | (-0.03, 0.80) | 1.80 | 0.072 |

| sex (Male) | -0.38 | 0.13 | (-0.64, -0.12) | -2.83 | 0.005 |

| age | -3.35e-03 | 0.13 | (-0.25, 0.24) | -0.03 | 0.979 |

| grade (Sec 2) | -0.17 | 0.21 | (-0.58, 0.25) | -0.80 | 0.422 |

| grade (Sec 3) | -0.42 | 0.31 | (-1.02, 0.19) | -1.36 | 0.175 |

| extraversion | 0.03 | 0.07 | (-0.11, 0.16) | 0.37 | 0.714 |

| narcissism | 0.21 | 0.07 | (0.07, 0.35) | 3.00 | 0.003 |

OK, now let’s fit more models to investigate whether narcissism predicts, above and beyond the other variables, the Facebook profile picture ratings. We use the same code as before but change the outcome from status to profile:

Table 6 compares the models. Adding extroversion, \(\chi^2\)(1) = 29.61, p < 0.001 and narcissim, \(\chi^2\)(1) = 21.60, p < 0.001, both significantly improve the fit of the model (i.e. they are significant predictors). So basically, Ong et al.’s prediction was supported in that after adjusting for age, grade and gender, narcissism significantly predicted the Facebook profile picture ratings over and above extroversion. The positive beta value (0.37) indicates a positive relationship between profile picture ratings and narcissism, in that more narcissistic adolescents rated their Facebook profile pictures more positively than their less narcissistic peers did. Compare these results to the results reported in Table 2 of Ong et al. (2011) reproduced in Figure 7.

test_lrt(prof_base_lm, prof_ext_lm, prof_ncs_lm) |>

display()| Name | Model | df | df_diff | Chi2 | p |

|---|---|---|---|---|---|

| prof_base_lm | lm | 6 | |||

| prof_ext_lm | lm | 7 | 1 | 29.61 | < .001 |

| prof_ncs_lm | lm | 8 | 1 | 21.60 | < .001 |

model_parameters(model = prof_ncs_lm, standardize = "refit") |>

display()| Parameter | Coefficient | SE | 95% CI | t(186) | p |

|---|---|---|---|---|---|

| (Intercept) | 0.05 | 0.21 | (-0.36, 0.47) | 0.26 | 0.798 |

| sex (Male) | 0.16 | 0.15 | (-0.13, 0.45) | 1.10 | 0.271 |

| age | 0.10 | 0.12 | (-0.14, 0.34) | 0.80 | 0.424 |

| grade (Sec 2) | -0.13 | 0.22 | (-0.57, 0.30) | -0.60 | 0.551 |

| grade (Sec 3) | -0.16 | 0.30 | (-0.75, 0.44) | -0.51 | 0.607 |

| extraversion | 0.17 | 0.08 | (0.02, 0.32) | 2.20 | 0.029 |

| narcissism | 0.37 | 0.08 | (0.21, 0.52) | 4.65 | < .001 |

Why do you like your lecturers?

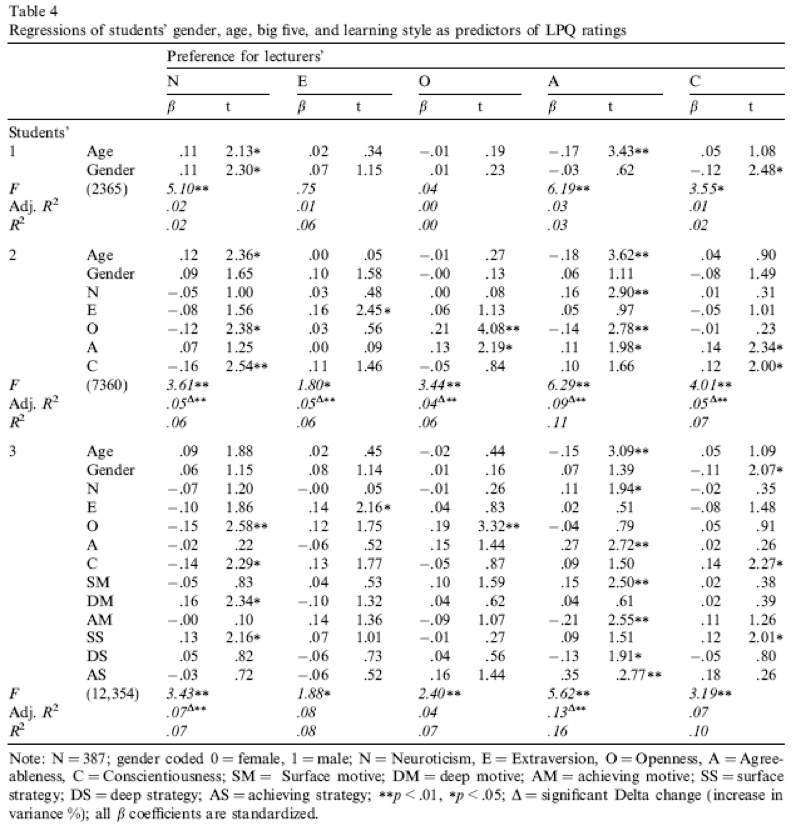

Data from Chamorro-Premuzic et al. (2008).

In the previous chapter we encountered a study by Chamorro-Premuzic et al. that linked students’ personality traits with those they want to see in lecturers (see Labcoat Leni’s Real Research 7.1 for a full description). In that chapter we correlated these scores, but now Labcoat Leni wants you to carry out five multiple regression analyses: the outcome variables across the five models are the ratings of how much students want to see neuroticism, extroversion, openness to experience, agreeableness and conscientiousness. For each of these outcomes, force age and gender into the analysis in the first step of the hierarchy, then in the second block force in the five student personality traits (neuroticism, extroversion, openness to experience, agreeableness and conscientiousness). For each analysis create a table of the results. View the solutions at www.discovr.rocks (or look at Table 4 in the original article). The data are in the file

chamorro_premuzic.csv.

Load the data using (see Tip 1):

chamorro_tib <- discovr::chamorro_premuzicLecturer neuroticism

All of the models follow a similar pattern:

- We fit a baseline model predicting the degree to which students want lecturers to be a trait (in this example neurotic) from

ageandsex - We fit a second model that adds all of the student personality variables (five variables in all)

- Compare the models using

compare_performance()to see whether adding the personality variables improves fit - Interpret the parameter estimates of the second model

We fit the models and compare their fit using the code below. Table 8 shows that adding the personality variables increases the variance explained from 3.1% to 6.4%. Table 8 shows that age, \(\hat{b}\) = 0.30 (0.05, 0.55), t(365) = 2.35, p = 0.019, openness, \(\hat{b}\) = -0.17 (-0.32, -0.03), t(365) = -2.39, p = 0.017, and conscientiousness, \(\hat{b}\) = -0.20 (-0.36, -0.04), t(365) = -2.48, p = 0.013, were significant predictors of wanting a neurotic lecturer. Note that for openness and conscientiousness the relationship is negative; that is, the higher a student scored on these characteristics, the less they wanted a neurotic lecturer.

# Fit the baseline model

neuro_base_lm <- lm(lec_neurotic ~ age + sex,

data = chamorro_tib,

na.action = na.exclude)

# Add personality variables

neuro_full_lm <- lm(lec_neurotic ~ age + sex + stu_neurotic + stu_extro + stu_open + stu_agree + stu_consc,

data = chamorro_tib,

na.action = na.exclude)

compare_performance(neuro_base_lm, neuro_full_lm) |>

display()Warning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWhen comparing models, please note that probably not all models were fit

from same data.| Name | Model | R2 | R2 (adj.) | RMSE | Sigma |

|---|---|---|---|---|---|

| neuro_base_lm | lm | 0.03 | 0.03 | 9.04 | 9.08 |

| neuro_full_lm | lm | 0.06 | 0.05 | 8.58 | 8.67 |

model_parameters(neuro_full_lm) |>

display()| Parameter | Coefficient | SE | 95% CI | t(365) | p |

|---|---|---|---|---|---|

| (Intercept) | -16.77 | 5.30 | (-27.19, -6.36) | -3.17 | 0.002 |

| age | 0.30 | 0.13 | (0.05, 0.55) | 2.35 | 0.019 |

| sex (Male) | 1.90 | 1.08 | (-0.23, 4.04) | 1.75 | 0.080 |

| stu neurotic | -0.06 | 0.06 | (-0.18, 0.06) | -1.02 | 0.307 |

| stu extro | -0.11 | 0.08 | (-0.26, 0.04) | -1.43 | 0.154 |

| stu open | -0.17 | 0.07 | (-0.32, -0.03) | -2.39 | 0.017 |

| stu agree | 0.09 | 0.07 | (-0.05, 0.23) | 1.22 | 0.224 |

| stu consc | -0.20 | 0.08 | (-0.36, -0.04) | -2.48 | 0.013 |

Lecturer extroversion

The second variable we want to predict is lecturer extroversion. You can follow the steps of the first example but with the outcome variable of lec_extro.

We fit the models and compare their fit using the code below. Table 10 shows that adding the personality variables increases the variance explained from 0.4% to 4.6%. Table 10 shows that only extroversion, \(\hat{b}\) = 0.16 (0.03, 0.29), t(264) = 2.34, p = 0.020, was a significant predictor of wanting a extroverted lecturer. The higher a student scored on extroversion, the more they wanted an extroverted lecturer.

# Fit the baseline model

extro_base_lm <- lm(lec_extro ~ age + sex,

data = chamorro_tib,

na.action = na.exclude)

# Add personality variables

extro_full_lm <- lm(lec_extro ~ age + sex + stu_neurotic + stu_extro + stu_open + stu_agree + stu_consc,

data = chamorro_tib,

na.action = na.exclude)

compare_performance(extro_base_lm, extro_full_lm) |>

display()Warning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWhen comparing models, please note that probably not all models were fit

from same data.| Name | Model | R2 | R2 (adj.) | RMSE | Sigma |

|---|---|---|---|---|---|

| extro_base_lm | lm | 3.80e-03 | -3.34e-03 | 6.91 | 6.95 |

| extro_full_lm | lm | 0.05 | 0.02 | 6.70 | 6.80 |

model_parameters(extro_full_lm) |>

display()| Parameter | Coefficient | SE | 95% CI | t(264) | p |

|---|---|---|---|---|---|

| (Intercept) | 2.03 | 4.77 | (-7.37, 11.43) | 0.43 | 0.670 |

| age | 5.46e-03 | 0.11 | (-0.21, 0.22) | 0.05 | 0.960 |

| sex (Male) | 1.58 | 1.01 | (-0.41, 3.57) | 1.56 | 0.119 |

| stu neurotic | 0.02 | 0.06 | (-0.10, 0.13) | 0.30 | 0.761 |

| stu extro | 0.16 | 0.07 | (0.03, 0.29) | 2.34 | 0.020 |

| stu open | 0.05 | 0.07 | (-0.09, 0.18) | 0.66 | 0.507 |

| stu agree | 0.01 | 0.06 | (-0.11, 0.14) | 0.20 | 0.840 |

| stu consc | 0.11 | 0.08 | (-0.04, 0.26) | 1.46 | 0.145 |

Lecturer openness to experience

You can follow the steps of the first example but using lec_open as the outcome.

We fit the models and compare their fit using the code below. Table 12 shows that adding the personality variables increases the variance explained from 0.0% to 6.4%. Table 12 shows that openness to experience, \(\hat{b}\) = 0.28 (0.15, 0.41), t(367) = 4.24, p < 0.001, and agreeableness \(\hat{b}\) = 0.15 (0.02, 0.27), t(367) = 2.23, p = 0.026, were significant predictors of wanting a lecturer who was open to experience. The higher a student scored on these traits, the more they wanted a lecturer open to experience.

# Fit the baseline model

open_base_lm <- lm(lec_open ~ age + sex,

data = chamorro_tib,

na.action = na.exclude)

# Add personality variables

open_full_lm <- lm(lec_open ~ age + sex + stu_neurotic + stu_extro + stu_open + stu_agree + stu_consc,

data = chamorro_tib,

na.action = na.exclude)

compare_performance(open_base_lm, open_full_lm) |>

display()Warning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWhen comparing models, please note that probably not all models were fit

from same data.| Name | Model | R2 | R2 (adj.) | RMSE | Sigma |

|---|---|---|---|---|---|

| open_base_lm | lm | 2.38e-04 | -4.81e-03 | 8.08 | 8.11 |

| open_full_lm | lm | 0.06 | 0.05 | 7.84 | 7.92 |

model_parameters(open_full_lm) |>

display()| Parameter | Coefficient | SE | 95% CI | t(367) | p |

|---|---|---|---|---|---|

| (Intercept) | -5.51 | 4.83 | (-15.01, 3.99) | -1.14 | 0.255 |

| age | -0.04 | 0.12 | (-0.27, 0.18) | -0.37 | 0.712 |

| sex (Male) | -0.22 | 0.99 | (-2.17, 1.73) | -0.22 | 0.824 |

| stu neurotic | 6.17e-03 | 0.05 | (-0.10, 0.11) | 0.12 | 0.908 |

| stu extro | 0.07 | 0.07 | (-0.07, 0.20) | 0.94 | 0.345 |

| stu open | 0.28 | 0.07 | (0.15, 0.41) | 4.24 | < .001 |

| stu agree | 0.15 | 0.07 | (0.02, 0.27) | 2.23 | 0.026 |

| stu consc | -0.06 | 0.07 | (-0.21, 0.09) | -0.81 | 0.417 |

Lecturer agreeableness

The fourth variable we want to predict is lecturer agreeableness. You can follow the steps of the first example but using lec_agree as the outcome.

We fit the models and compare their fit using the code below. Table 14 shows that adding the personality variables increases the variance explained from 3.0% to 10.3%. Table 14 shows that age, \(\hat{b}\) = -0.48 (-0.74, -0.21), t(364) = -3.52, p < 0.001, neuroticism, \(\hat{b}\) = 0.17 (0.05, 0.29), t(364) = 2.74, p = 0.006, openness to experience, \(\hat{b}\) = -0.21 (-0.37, -0.06), t(364) = -2.79, p = 0.006, and agreeableness \(\hat{b}\) = 0.17 (0.02, 0.32), t(364) = 2.25, p = 0.025, were significant predictors of wanting a lecturer who was agreeable. Age and openness to experience had negative estimates suggesting that the older and more open to experienced you are, the less you want an agreeable lecturer, whereas students with higher neuroticism and agreeableness had more desire for an agreeable lecturer (not surprisingly, because neurotics will lack confidence and probably feel more able to ask an agreeable lecturer questions).

# Fit the baseline model

agree_base_lm <- lm(lec_agree ~ age + sex,

data = chamorro_tib,

na.action = na.exclude)

# Add personality variables

agree_full_lm <- lm(lec_agree ~ age + sex + stu_neurotic + stu_extro + stu_open + stu_agree + stu_consc,

data = chamorro_tib,

na.action = na.exclude)

compare_performance(agree_base_lm, agree_full_lm) |>

display()Warning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWhen comparing models, please note that probably not all models were fit

from same data.| Name | Model | R2 | R2 (adj.) | RMSE | Sigma |

|---|---|---|---|---|---|

| agree_base_lm | lm | 0.03 | 0.02 | 9.46 | 9.49 |

| agree_full_lm | lm | 0.10 | 0.09 | 9.08 | 9.18 |

model_parameters(agree_full_lm) |>

display()| Parameter | Coefficient | SE | 95% CI | t(364) | p |

|---|---|---|---|---|---|

| (Intercept) | 7.04 | 5.61 | (-4.00, 18.07) | 1.25 | 0.211 |

| age | -0.48 | 0.14 | (-0.74, -0.21) | -3.52 | < .001 |

| sex (Male) | 1.00 | 1.15 | (-1.27, 3.27) | 0.87 | 0.386 |

| stu neurotic | 0.17 | 0.06 | (0.05, 0.29) | 2.74 | 0.006 |

| stu extro | 0.06 | 0.08 | (-0.09, 0.22) | 0.81 | 0.421 |

| stu open | -0.21 | 0.08 | (-0.37, -0.06) | -2.79 | 0.006 |

| stu agree | 0.17 | 0.08 | (0.02, 0.32) | 2.25 | 0.025 |

| stu consc | 0.10 | 0.09 | (-0.07, 0.27) | 1.16 | 0.246 |

Lecturer conscientiousness

The final variable we want to predict is lecturer conscientiousness. You can follow the steps of the first example but replacing the outcome variable with lec_consc.

We fit the models and compare their fit using the code below. Table 16 shows that adding the personality variables increases the variance explained from 2.9% to 7.4%. Table 16 shows that agreeableness \(\hat{b}\) = 0.15 (0.03, 0.26), t(364) = 2.44, p = 0.015 and conscientiousness \(\hat{b}\) = 0.14 (0.00, 0.27), t(364) = 2.02, p = 0.044 were significant predictors of wanting a lecturer who was conscientious. Students higher on these traits have a greater desire for a conscientious lecturer.

# Fit the baseline model

consc_base_lm <- lm(lec_consc ~ age + sex,

data = chamorro_tib,

na.action = na.exclude)

# Add personality variables

consc_full_lm <- lm(lec_consc ~ age + sex + stu_neurotic + stu_extro + stu_open + stu_agree + stu_consc,

data = chamorro_tib,

na.action = na.exclude)

compare_performance(consc_base_lm, consc_full_lm) |>

display()Warning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWarning in w * res^2: longer object length is not a multiple of shorter object

lengthWarning in log(w) - (log(2 * pi) + log(s2) + (w * res^2)/s2): longer object

length is not a multiple of shorter object lengthWhen comparing models, please note that probably not all models were fit

from same data.| Name | Model | R2 | R2 (adj.) | RMSE | Sigma |

|---|---|---|---|---|---|

| consc_base_lm | lm | 0.03 | 0.02 | 7.52 | 7.54 |

| consc_full_lm | lm | 0.07 | 0.06 | 7.18 | 7.26 |

model_parameters(consc_full_lm) |>

display()| Parameter | Coefficient | SE | 95% CI | t(364) | p |

|---|---|---|---|---|---|

| (Intercept) | 6.36 | 4.43 | (-2.36, 15.07) | 1.43 | 0.152 |

| age | 0.10 | 0.11 | (-0.11, 0.31) | 0.90 | 0.368 |

| sex (Male) | -1.56 | 0.91 | (-3.36, 0.24) | -1.71 | 0.089 |

| stu neurotic | 0.01 | 0.05 | (-0.09, 0.11) | 0.21 | 0.832 |

| stu extro | -0.07 | 0.06 | (-0.19, 0.06) | -1.08 | 0.283 |

| stu open | -0.01 | 0.06 | (-0.13, 0.11) | -0.18 | 0.858 |

| stu agree | 0.15 | 0.06 | (0.03, 0.26) | 2.44 | 0.015 |

| stu consc | 0.14 | 0.07 | (3.55e-03, 0.27) | 2.02 | 0.044 |

Compare all of your results to Table 4 in the actual article (reproduced in Figure 8) - our five analyses are represented by the columns labelled N, E, O, A and C).

Chapter 9

Bladder control

Data from Tuk et al. (2011).

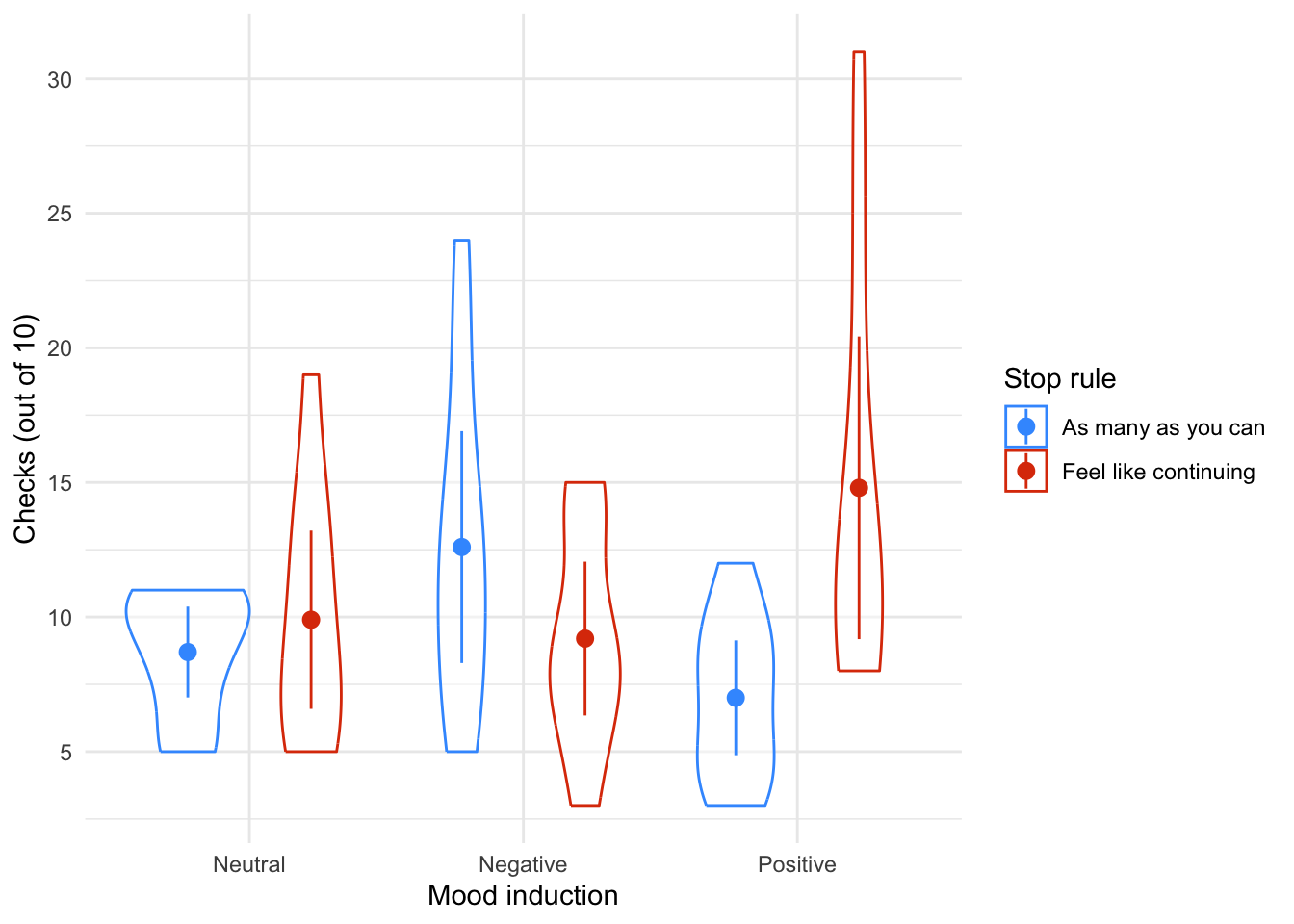

Visceral factors that require us to engage in self control (such as a filling bladder) can affect our inhibtory abilities in unrelated domains. In a fascinating study by Tuk et al. (2011), participants were given five cups of water: one group was asked to drink them all, whereas another was asked to take a sip from each. This manipulation led one group to have full bladders and the other group to have relatively empty ones (

urgency). Later on, these participants were given eight trials in which they had to choose between a small financial reward that they would receive soon (SS) and a large financial reward for which they would wait longer (LL). They counted the number of trials in which participants chose the LL reward as an indicator of inhibitory control (ll_sum). Do a t-test to see whether people with full bladders were inhibited more than those without (tuk_2011.csv).

Load the data using (see Tip 1):

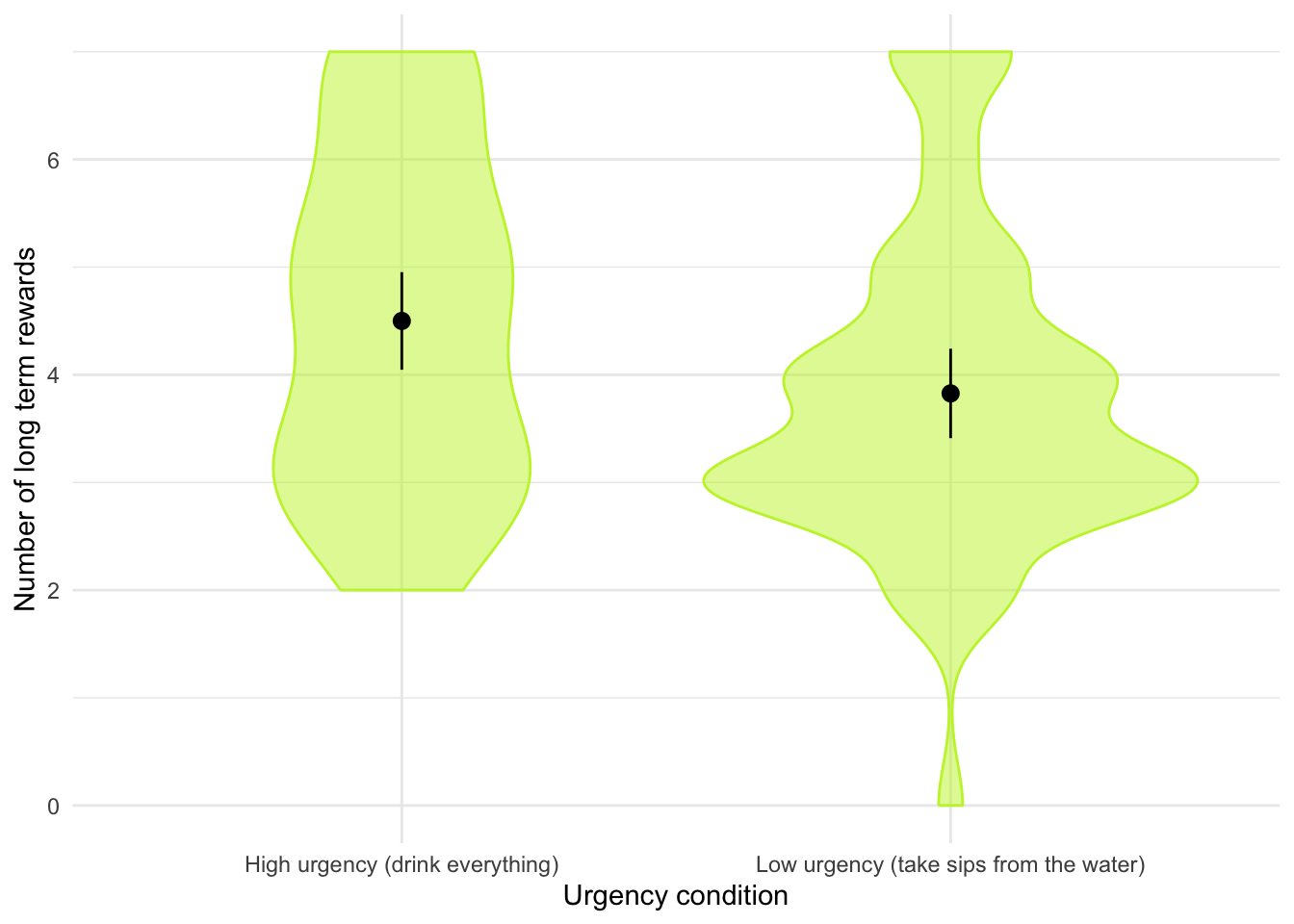

tuk_tib <- discovr::tuk_2011Let’s get the summary statistics in Table 18 and the plot in Figure 9. The results of the t-test itself are in Table 19. Note that I have used es_type to estimate Cohen’s \(d\).

| urgency | Variable | Mean | SD | IQR | Range | Skewness | Kurtosis | n | n_Missing |

|---|---|---|---|---|---|---|---|---|---|

| High urgency (drink everything) | ll_sum | 4.50 | 1.59 | 3.00 | (2.00, 7.00) | 0.19 | -1.15 | 50 | 0 |

| Low urgency (take sips from the water) | ll_sum | 3.83 | 1.49 | 1.75 | (0.00, 7.00) | 0.57 | 0.57 | 52 | 0 |

ggplot(tuk_tib, aes(x = urgency, y = ll_sum)) +

geom_violin(colour = "#C4F134FF", fill = "#C4F134FF", alpha = 0.5) +

stat_summary(fun.data = "mean_cl_normal") +

labs(x = "Urgency condition", y = "Number of long term rewards") +

theme_minimal()

Warning: Unable to retrieve data from htest object.

Returning an approximate effect size using t_to_d().| Parameter | Group | Mean_Group1 | Mean_Group2 | Difference | 95% CI | d | d 95% CI | t(98.89) | p |

|---|---|---|---|---|---|---|---|---|---|

| ll_sum | urgency | 4.50 | 3.83 | 0.67 | (0.07, 1.28) | 0.44 | (0.04, 0.84) | 2.20 | 0.030 |

Alternative hypothesis: true difference in means between group High urgency (drink everything) and group Low urgency (take sips from the water) is not equal to 0

The beautiful people

Data from Gelman & Weakliem (2009).

Apparently there are more beautiful women in the world than there are handsome men. Satoshi Kanazawa explains this finding in terms of good-looking parents being more likely to have a baby daughter as their first child than a baby son. Perhaps more controversially, he suggests that, from an evolutionarily perspective, beauty is a more valuable trait for women than for men (Kanazawa, 2007). In a playful and very informative paper, Andrew Gelman and David Weakliem discuss various statistical errors and misunderstandings, some of which have implications for Kanazawa’s claims. The ‘playful’ part of the paper is that to illustrate their point they collected data on the 50 most beautiful celebrities (as listed by People magazine) of 1995–2000. They counted how many male and female children they had as of 2007. If Kanazawa is correct, these beautiful people would have produced more girls than boys. Do a t-test to find out whether they did. The data are in

gelman_2009.csv.

We need to run a paired samples t-test on these data because the researchers recorded the number of daughters and sons for each participant (repeated-measures design).

Load the data using (see Tip 1). We also need to convert it to messy format. The restructured data is in Table 20, note that we have two new variables sons and daughters containing the number of sons and daughters respectively.

# Load data

gelman_tib <- discovr::gelman_2009

# Convert to messy

gelman_mess <- gelman_tib |>

pivot_wider(

id_cols = person,

names_from = child,

values_from = number

)

# Display using datatable

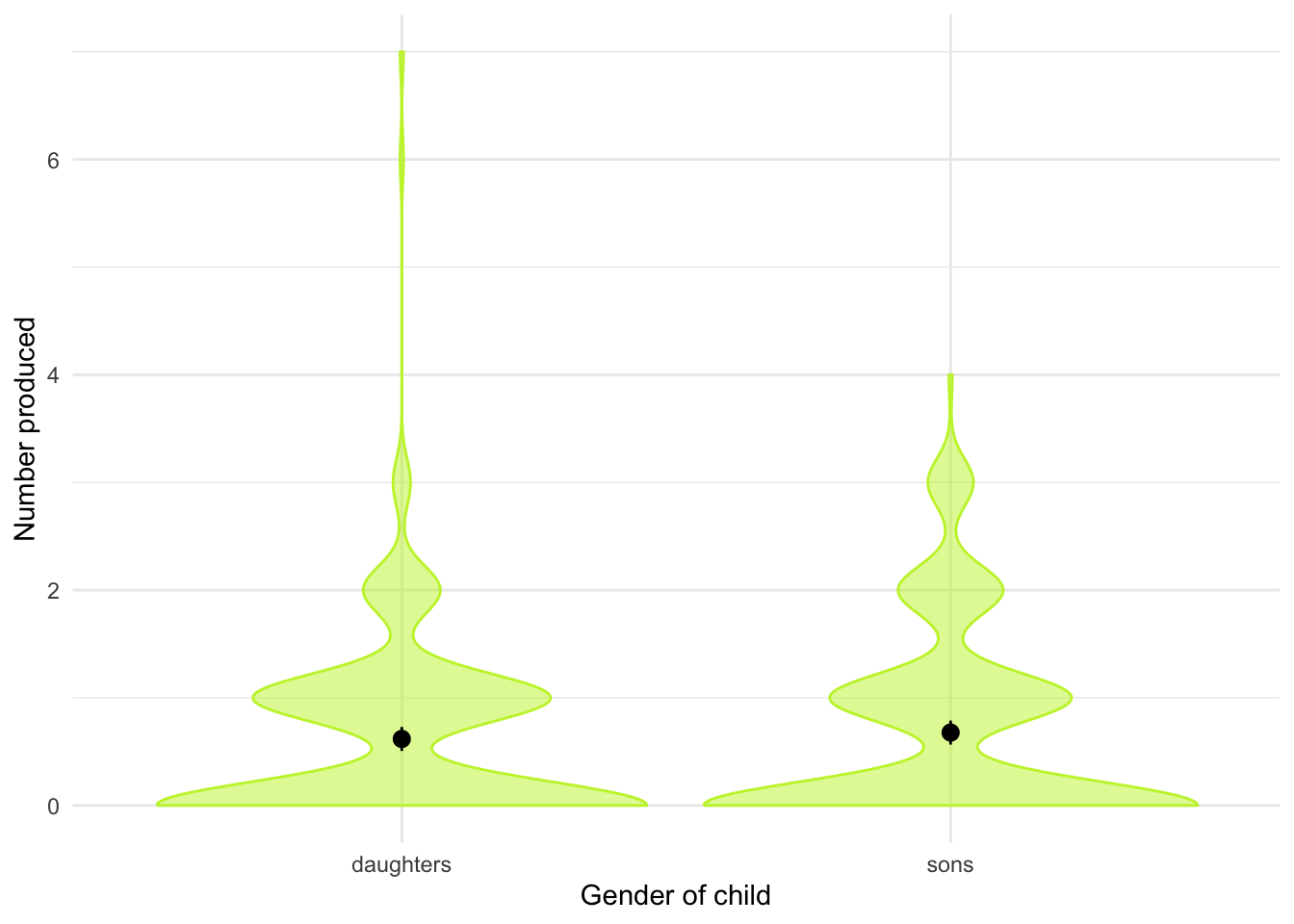

DT::datatable(gelman_mess)Let’s get the summary statistics in Table 21 and the plot in Figure 10. The results of the t-test itself are in Table 22 and the effect size is shown in Table 23.

| child | Variable | Mean | SD | IQR | Range | Skewness | Kurtosis | n | n_Missing |

|---|---|---|---|---|---|---|---|---|---|

| daughters | number | 0.62 | 0.90 | 1 | (0.00, 7.00) | 2.78 | 13.83 | 254 | 20 |

| sons | number | 0.68 | 0.90 | 1 | (0.00, 4.00) | 1.24 | 0.78 | 254 | 20 |

ggplot(gelman_tib, aes(x = child, y = number)) +

geom_violin(colour = "#C4F134FF", fill = "#C4F134FF", alpha = 0.5) +

stat_summary(fun.data = "mean_cl_normal") +

labs(x = "Gender of child", y = "Number produced") +

theme_minimal()Warning: Removed 40 rows containing non-finite outside the scale range

(`stat_ydensity()`).Warning: Removed 40 rows containing non-finite outside the scale range

(`stat_summary()`).

| Parameter | Difference | t(253) | p | 95% CI |

|---|---|---|---|---|

| Pair(sons, daughters) | 0.06 | 0.81 | 0.420 | (-0.09, 0.20) |

Alternative hypothesis: true mean difference is not equal to 0

Chapter 10

I heard that Jane has a boil and kissed a tramp

Data from Massar et al. (2012).

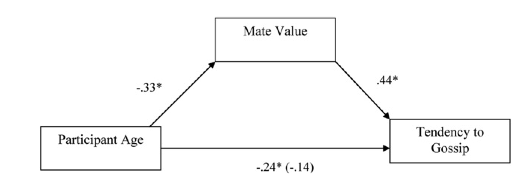

Everyone likes a good gossip from time to time, but apparently it has an evolutionary function. One school of thought is that gossip is used as a way to derogate sexual competitors – especially by questioning their appearance and sexual behaviour. For example, if you’ve got your eyes on a guy, but he has his eyes on Jane, then a good strategy is to spread gossip that Jane has something unappealing about her or kissed someone unpopular. Apparently men rate gossiped-about women as less attractive, and they are more influenced by the gossip if it came from a woman with a high mate value (i.e., attractive and sexually desirable). Karlijn Massar and her colleagues hypothesized that if this theory is true, then (1) younger women will gossip more because there is more mate competation at younger ages and (2) this relationship will be mediated by the mate value of the person (because for those with high mate value, gossiping for the purpose of sexual competition will be more effective). Eighty-three women aged from 20 to 50 (

age) completed questionnaire measures of their tendency to gossip (gossip) and their sexual desirability (mate_value). Test Massar et al.’s mediation model using Baron and Kenny’s method (as they did) but also using PROCESS to estimate the indirect effect (massar_2012.csv). View the solutions at www.discovr.rocks (or look at Figure 1 in the original article, which shows the parameters for the various regressions).

Load the data using (see Tip 1):

massar_tib <- discovr::massar_2012Solution using Baron and Kenny’s method

Baron and Kenny suggested that mediation is tested through three linear models:

- A linear model predicting the outcome (

gossip) from the predictor variable (age). - A linear model predicting the mediator (

mate_value) from the predictor variable (age). - A linear model predicting the outcome (

gossip) from both the predictor variable (age) and the mediator (mate_value).

These models test the four conditions of mediation: (1) the predictor variable (age) must significantly predict the outcome variable (gossip) in model 1; (2) the predictor variable (age) must significantly predict the mediator (mate_value) in model 2; (3) the mediator (mate_value) must significantly predict the outcome (gossip) variable in model 3; and (4) the predictor variable (age) must predict the outcome variable (gossip) less strongly in model 3 than in model 1.

The paper reports standardized betas so I’ve used the standardize = "refit" within model_parameters() to get these coefficients.

Model 1 (Table 24) indicates that the first condition of mediation was met, in that participant age was a significant predictor of the tendency to gossip, \(\hat{\beta}\) = -0.28 (-0.49, -0.06), t(8) = -2.59, p = 0.011. The parameter estimate approximately matches the value of -0.24 for the path between age and gossip in Figure 11.

Model 2 (Table 25) shows that the second condition of mediation was met: participant age was a significant predictor of mate value, \(\hat{\beta}\) = -0.38 (-0.59, -0.17), t(79) = -3.67, p < 0.001. The parameter estimate approximately matches the value of -0.33 for the path between age and gossip in Figure 11.

Model 3 (Table 26) shows that the third condition of mediation has been met: mate value significantly predicted the tendency to gossip while adjusting for participant age, \(\hat{\beta}\) = 0.39 (0.17, 0.61), t(78) = 3.59, p < 0.001. The parameter estimate approximately matches the value of 0.44 for the path between mate value and gossip in Figure 11.

The fourth condition of mediation has also been met: the parameter estimate between participant age and tendency to gossip decreased substantially when adjusting for mate value, in fact it is no longer significant, \(\hat{\beta}\) = -0.14 (-0.35, 0.08), t(78) = -1.28, p = 0.206. The parameter estimate matches the value (in parenthesis) of -0.14 for the path between age and gossip in Figure 11.

If you buy into the Baron and Kenny method, the author’s prediction is supported, and the relationship between participant age and tendency to gossip is mediated by mate value. However, these days, we’d fit all paths simultaneously, which brings us to the next part of the task.

| Parameter | Coefficient | SE | 95% CI | t(80) | p |

|---|---|---|---|---|---|

| (Intercept) | -2.39e-17 | 0.11 | (-0.21, 0.21) | -2.24e-16 | > .999 |

| age | -0.28 | 0.11 | (-0.49, -0.06) | -2.59 | 0.011 |

| Parameter | Coefficient | SE | 95% CI | t(79) | p |

|---|---|---|---|---|---|

| (Intercept) | 2.47e-16 | 0.10 | (-0.21, 0.21) | 2.39e-15 | > .999 |

| age | -0.38 | 0.10 | (-0.59, -0.17) | -3.67 | < .001 |

| Parameter | Coefficient | SE | 95% CI | t(78) | p |

|---|---|---|---|---|---|

| (Intercept) | -1.49e-16 | 0.10 | (-0.20, 0.20) | -1.49e-15 | > .999 |

| age | -0.14 | 0.11 | (-0.35, 0.08) | -1.28 | 0.206 |

| mate value | 0.39 | 0.11 | (0.17, 0.61) | 3.59 | < .001 |

Solution using lavaan

Table 27 shows that age significantly predicts mate value, \(\hat{b}\) = -0.03 (-0.04, -0.01), z = -3.64, p < 0.001. We can see that while age does not significantly predict tendency to gossip with mate value in the model, \(\hat{b}\) = -0.01 (-0.03, 0.00), z = -1.36, p = 0.174, mate value does significantly predict tendency to gossip, \(\hat{b}\) = 0.45 (0.18, 0.72), z = 3.26, p = 0.001. The negative b for age tells us that as age increases, tendency to gossip declines (and vice versa), but the positive b for mate value indicates that as mate value increases, tendency to gossip increases also. These relationships are in the predicted direction.

The total effect shows that when mate value is not in the model, age significantly predicts tendency to gossip, \(\hat{b}\) = -0.02 (-0.04, -0.01), z = -2.89, p = 0.004. Finally, the indirect effect of age on gossip is significant, \(\hat{b}\) = -0.01 (-0.02, -0.00), z = -2.21, p = 0.027. Put another way, mate value is a significant mediator of the relationship between age and tendency to gossip.

| Link | Coefficient | SE | 95% CI | z | p |

|---|---|---|---|---|---|

| gossip ~ age (c) | -0.01 | 7.66e-03 | (-0.03, 4.60e-03) | -1.36 | 0.174 |

| gossip ~ mate_value (b) | 0.45 | 0.14 | (0.18, 0.72) | 3.26 | 0.001 |

| mate_value ~ age (a) | -0.03 | 7.24e-03 | (-0.04, -0.01) | -3.64 | < .001 |

| To | Coefficient | SE | 95% CI | z | p |

|---|---|---|---|---|---|

| (indirect_effect) | -0.01 | 5.39e-03 | (-0.02, -1.34e-03) | -2.21 | 0.027 |

| (total_effect) | -0.02 | 7.72e-03 | (-0.04, -7.17e-03) | -2.89 | 0.004 |

Chapter 11

Scraping the barrel?

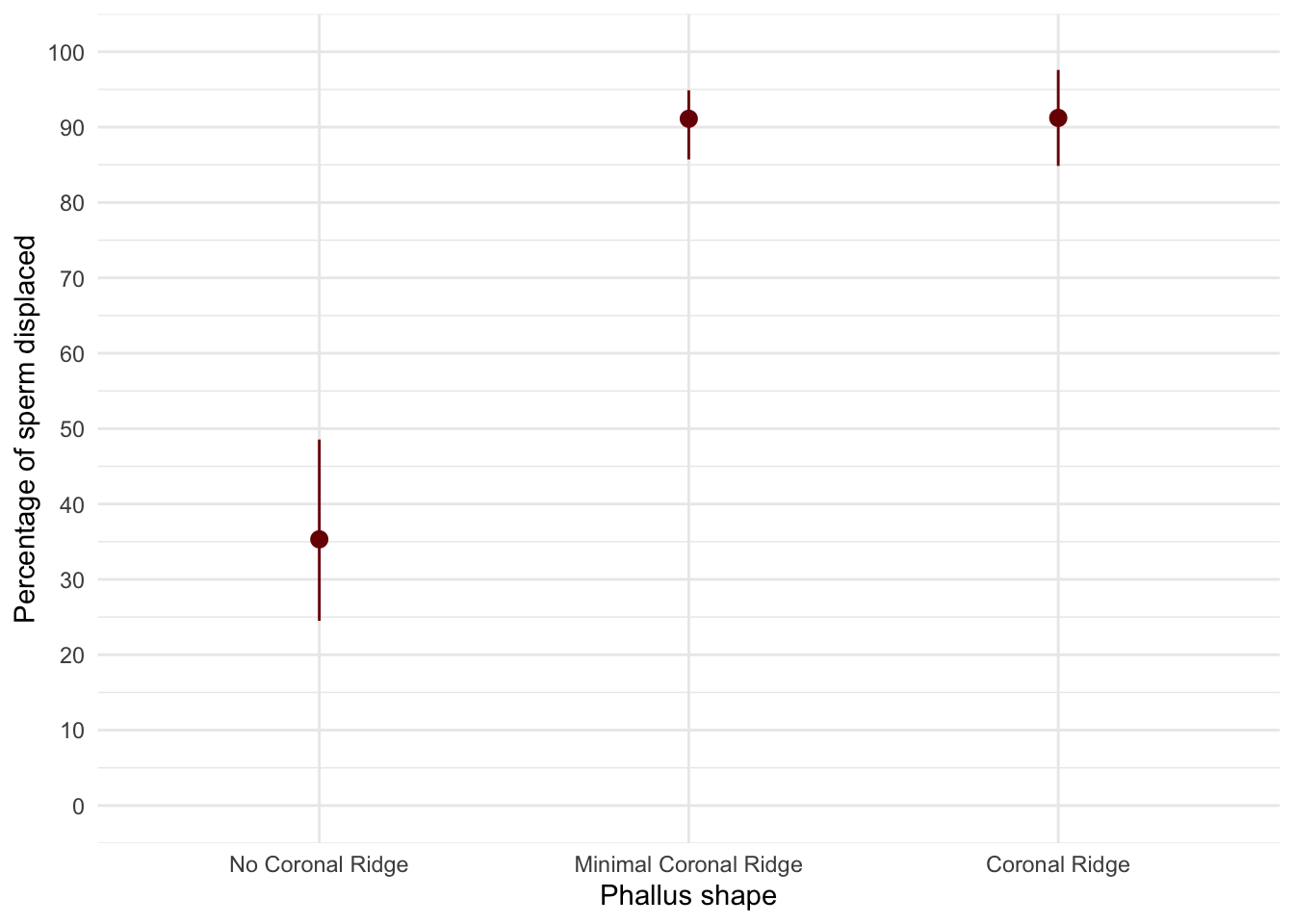

Data from Gallup et al. (2003).

Evolution has endowed us with many beautiful things (cats, dolphins, the Great Barrier Reef, etc.), all selected to fit their ecological niche. Given evolution’s seemingly limitless capacity to produce beauty, it’s something of a wonder how it managed to produce the human penis. One theory is sperm competition: the human penis has an unusually large glans (the ‘bell-end’) compared to other primates, and this may have evolved so that the penis can displace seminal fluid from other males by ‘scooping it out’ during intercourse. Armed with various female masturbatory devices from Hollywood Exotic Novelties, an artificial vagina from California Exotic Novelties and some water and cornstarch to make fake sperm, Gallup and colleagues put this theory to the test. They loaded an artificial vagina with 2.6 ml of fake sperm and inserted one of three female sex toys into it before withdrawing it: a control phallus that had no coronal ridge (i.e., no bell-end), a phallus with a minimal coronal ridge (small bellend) and a phallus with a coronal ridge.

They measured sperm displacement as a percentage using the following expression (included here because it is more interesting than all of the other equations in this book): \[ \frac{\text{Weight of vagina with semen - weight of vagina following insertion and removal of phallus}}{\text{Weight of vagina with semen - weight of empty vagina}} \times 100 \]

A value of 100% means that all the sperm was displaced, and 0% means that none of the sperm was displaced. If the human penis evolved as a sperm displacement device, then Gallup et al. predicted (1) that having a bell-end would displace more sperm than not and (2) that the phallus with the larger coronal ridge would displace more sperm than the phallus with the minimal coronal ridge. The conditions are ordered (no ridge, minimal ridge, normal ridge) so we might also predict a linear trend. The data are in the file

gallup_2003.csv. Plot error bars of the means of the three conditions. Fit a model with planned contrasts to test the two hypotheses above. What did Gallup et al. find? View the solutions at www.discovr.rocks (or look at pages 280–281 in the original article).

Load the data using (see Tip 1):

gallup_tib <- discovr::gallup_2003Let’s do the plot first. There are two variables: phallus (the predictor variable that has three levels: no ridge, minimal ridge and normal ridge) and displace (the outcome variable, the percentage of sperm displaced). The plot should therefore plot phallus on the x-axis and displace on the y-axis as in Figure 12. The plot shows that having a coronal ridge results in more sperm displacement than not having one. The size of ridge made very little difference.

ggplot(gallup_tib, aes(x = phallus, y = displace)) +

stat_summary(fun.data = "mean_cl_boot", colour = "#7A0403FF") +

coord_cartesian(ylim = c(0, 100)) +

scale_y_continuous(breaks = seq(0, 100, 10)) +

labs(x = "Phallus shape", y = "Percentage of sperm displaced") +

theme_minimal()

To test the hypotheses we need to first enter the codes in Table 28 for the contrasts. Contrast 1 tests hypothesis 1: that having a bell-end will displace more sperm than not. To test this we compare the two conditions with a ridge against the control condition (no ridge). So we compare chunk 1 (no ridge) to chunk 2 (minimal ridge, coronal ridge). The numbers assigned to the groups are the number of groups in the opposite chunk divided by the number of groups that have non-zero codes, and we randomly assigned one chunk to be a negative value (the codes 2/3, −1/3, −1/3 would work fine as well).

Contrast 2 tests hypothesis 2: the phallus with the larger coronal ridge will displace more sperm than the phallus with the minimal coronal ridge. First we get rid of the control phallus by assigning a code of 0; next we compare chunk 1 (minimal ridge) to chunk 2 (coronal ridge). The numbers assigned to the groups are the number of groups in the opposite chunk divided by the number of groups that have non-zero codes, and then we randomly assigned one chunk to be a negative value (the codes 0, 1/2, −1/2 would work fine as well).

| Group | No ridge vs. ridge | Minimal vs. coronal |

|---|---|---|

| No Ridge | -2/3 | 0 |

| Minimal ridge | 1/3 | -1/2 |

| Coronal ridge | 1/3 | 1/2 |

We set these contrasts for the variable phallus as follows:

ridge_vs_none minimal_vs_coronal

No Coronal Ridge -0.6666667 0.0

Minimal Coronal Ridge 0.3333333 -0.5

Coronal Ridge 0.3333333 0.5The results of fitting the model are shown in Table 30 and the parameter estimates in Table 31. Table 30 tells us that there was a significant effect of the type of phallus, F(2, 12) = 41.56, p < 0.001. (This is exactly the same result as reported in the paper on page 280.). Table 31 shows that hypothesis 1 is supported (phallus [ridge_vs_none]): having some kind of ridge led to greater sperm displacement than not having a ridge, \(\hat{b}\) = 55.85 (42.50, 69.20), t(12) = 9.12, p < 0.001. Hypothesis 2 is not supported (phallus [minimal_vs_coronal]): the amount of sperm displaced by the normal coronal ridge was not significantly different from the amount displaced by a minimal coronal ridge, \(\hat{b}\) = 0.11 (-15.30, 15.53), t(12) = 0.02, p = 0.987.

| Parameter | Sum_Squares | df | Mean_Square | F | p |

|---|---|---|---|---|---|

| phallus | 10397.66 | 2 | 5198.83 | 41.56 | < .001 |

| Residuals | 1501.13 | 12 | 125.09 |

Anova Table (Type 1 tests)

model_parameters(gallup_lm) |>

display()| Parameter | Coefficient | SE | 95% CI | t(12) | p |

|---|---|---|---|---|---|

| (Intercept) | 72.55 | 2.89 | (66.26, 78.84) | 25.12 | < .001 |

| phallus (ridge_vs_none) | 55.85 | 6.13 | (42.50, 69.20) | 9.12 | < .001 |

| phallus (minimal_vs_coronal) | 0.11 | 7.07 | (-15.30, 15.53) | 0.02 | 0.987 |

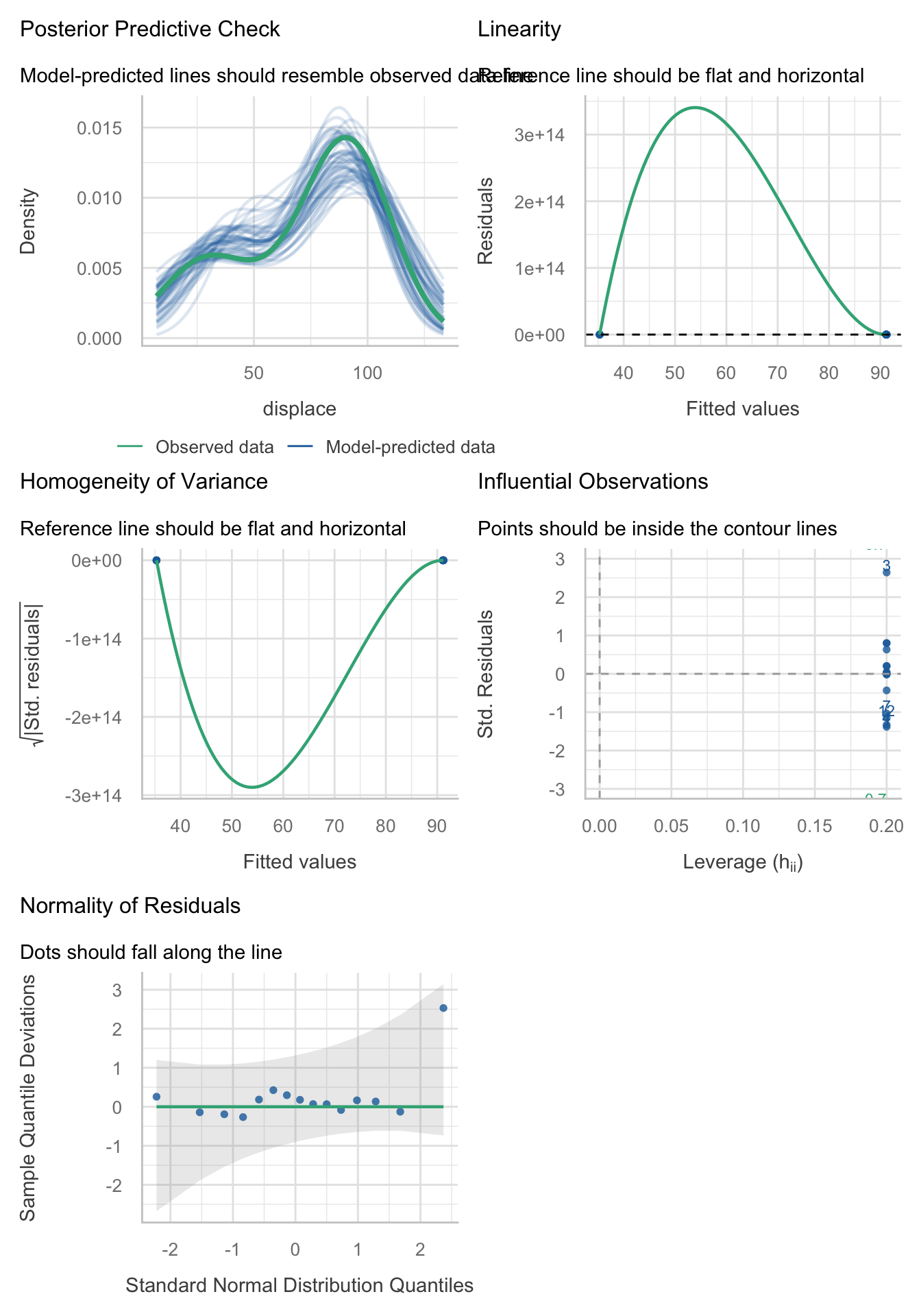

Evaluate the model assumptions using Figure 13. The Q-Q plot suggests non-normal residuals and the residual vs fitted plot and the scale-location plot suggest heterogeneity of variance. Let’s fit a robust model as a sensitivity check. Table 32 shows that the Welch F is highly significant still, F(2, 7.31) = 24.49, p < 0.001. Table 33 shows that the first contrast is still highly significant, \(\hat{b}\) = 55.85 (39.15, 72.55), t(12) = 7.29, p < 0.001, and the second contrast highly non-significant, \(\hat{b}\) = 55.85 (39.15, 72.55), t(12) = 7.29, p < 0.001. As such, our conclusions are unchanged when fitting a model that is robust to heteroscedasticity.

check_model(gallup_lm)

oneway.test(displace ~ phallus, data = gallup_tib) |>

model_parameters() |>

display()| F | df | df (error) | p |

|---|---|---|---|

| 24.49 | 2 | 7.31 | < .001 |

model_parameters(gallup_lm, vcov = "HC4") |>

display()| Parameter | Coefficient | SE | 95% CI | t(12) | p |

|---|---|---|---|---|---|

| (Intercept) | 72.55 | 2.89 | (66.26, 78.84) | 25.12 | < .001 |

| phallus (ridge_vs_none) | 55.85 | 7.67 | (39.15, 72.55) | 7.29 | < .001 |

| phallus (minimal_vs_coronal) | 0.11 | 4.66 | (-10.04, 10.27) | 0.02 | 0.981 |

Eggs-traordinary

Data from Çetinkaya & Domjan (2006).

Science has bestowed upon us the knowledge that quail develop fetishes. Really. In studies where a terrycloth object acts as a sign that a mate will shortly become available, some quail start to direct their sexual behaviour towards the terrycloth object. (I will regret this anology, but in human terms if everytime you were going to have sex with your boyfriend you gave him a green towel a few moments before seducing him, then after enough seductions he would start getting really excited by green towels. If you’re planning to dump your boyfriend, a towel fetish could be an entertaining parting gift). In evolutionary terms, this fetishistic behaviour seems counterproductive because sexual behaviour becomes directed towards something that cannot provide reproductive success. However, perhaps this behaviour serves to prepare the organism for the ‘real’ mating behaviour.

Hakan Çetinkaya and Mike Domjan sexually conditioned male quail (Çetinkaya & Domjan, 2006). All quail experienced the terrycloth stimulus and an opportunity to mate, but for some the terrycloth stimulus immediately preceded the mating opportunity (paired group) whereas others experienced a 2-hour delay (this acted as a control group because the terrycloth stimulus did not predict a mating opportunity). In the paired group, quail were classified as fetishistic or not depending on whether they engaged in sexual behaviour with the terrycloth object.

During a test trial the quail mated with a female and the researchers measured the percentage of eggs fertilized, the time spent near the terrycloth object, the latency to initiate copulation and copulatory efficiency. If this fetishistic behaviour provides an evolutionary advantage, then we would expect the fetishistic quail to fertilize more eggs, initiate copulation faster and be more efficient in their copulations.

The data from this study are in the file

cetinkaya_2006.csv. Labcoat Leni wants you to carry out a robust model to see whether fetishistic quail (groups) produced a higher percentage of fertilized eggs (egg_percent) and initiated sex more quickly (latency). You can view the solutions at www.discovr.rocks.

Load the data using (see Tip 1):

cetinkaya_tib <- discovr::cetinkaya_2006The authors conducted a Kruskal-Wallis test (a test not covered in the book because of our focus on robust methods). For the percentage of eggs, they report (p. 429):

Kruskal–Wallis analysis of variance (ANOVA) confirmed that female quail partnered with the different types of male quail produced different percentages of fertilized eggs, $ ^{2} $(2, N = 59) =11.95, p < .05, $ ^{2} $ = 0.20. Subsequent pairwise comparisons with the Mann–Whitney U test (with the Bonferroni correction) indicated that fetishistic male quail yielded higher rates of fertilization than both the nonfetishistic male quail (U = 56.00, N1 = 17, N2 = 15, effect size = 8.98, p < .05) and the control male quail (U= 100.00, N1 = 17, N2 = 27, effect size = 12.42, p < .05). However, the nonfetishistic group was not significantly different from the control group (U = 176.50, N1 = 15, N2 = 27, effect size = 2.69, p > .05).

For the latency data they reported as follows:

A Kruskal–Wallis analysis indicated significant group differences,$ ^{2} $(2, N = 59) = 32.24, p < .05, $ ^{2} $ = 0.56. Pairwise comparisons with the Mann–Whitney U test (with the Bonferroni correction) showed that the nonfetishistic males had significantly shorter copulatory latencies than both the fetishistic male quail (U = 0.00, N1 = 17, N2 = 15, effect size = 16.00, p < .05) and the control male quail (U = 12.00, N1 = 15, N2 = 27, effect size = 19.76, p < .05). However, the fetishistic group was not significantly different from the control group (U = 161.00, N1 = 17, N2 = 27, effect size = 6.57, p > .05). (p. 430)

These results support the authors’ theory that fetishist behaviour may have evolved because it offers some adaptive function (such as preparing for the real thing).

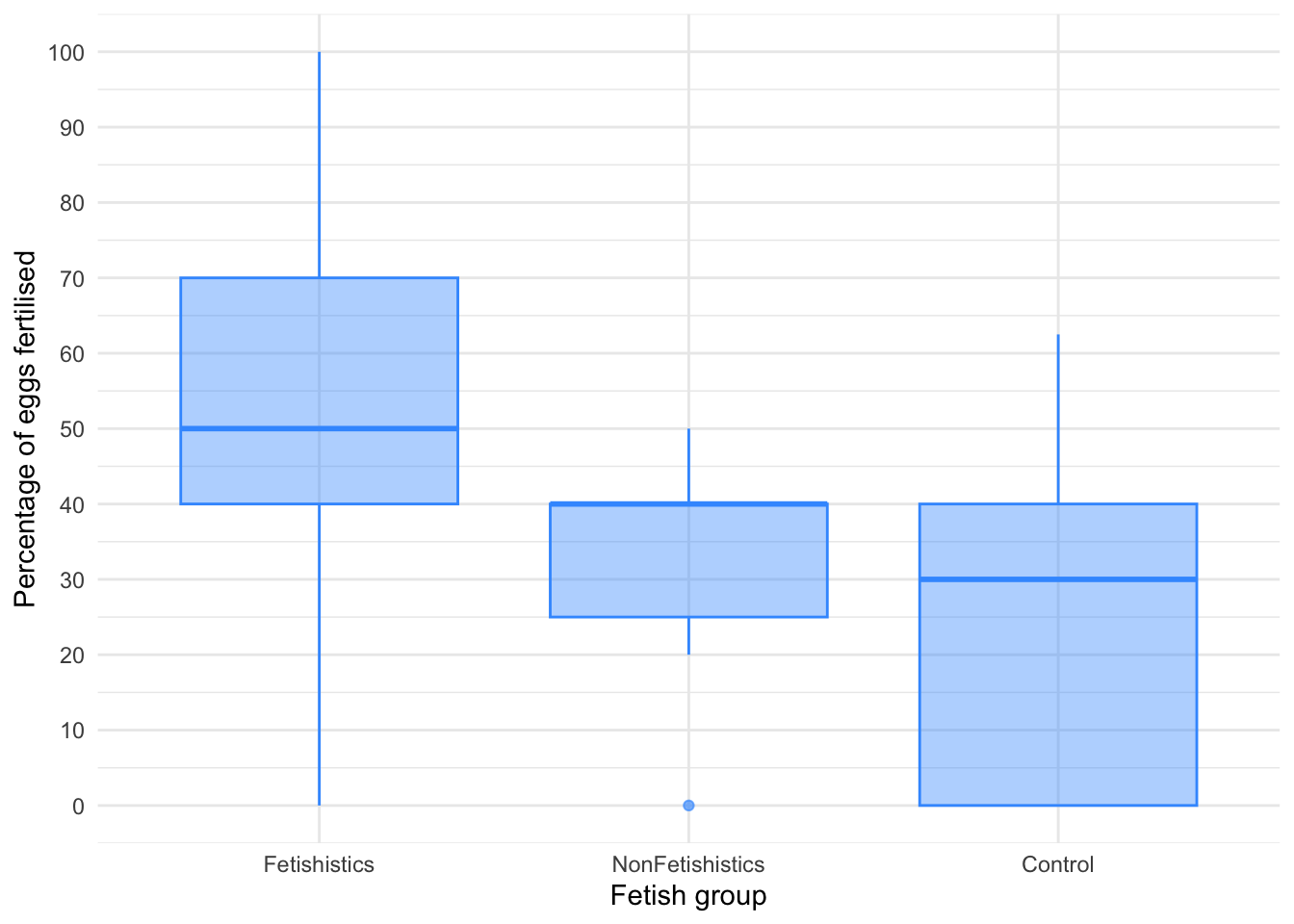

Percentage of eggs

Figure 14 shows that the percentage of eggs fertilized increases from control group to non-fetishistic to fetishistic group. There is an outlier and skew in the non-fetishistic group and skew in the control group also. The authors were wise to fit a nonparametric test. We’ll use a 20% trimmed mean test with post hoc tests. Table 35 tells us that there was a significant effect, F(2, 22.2) = 5.02, p = 0.016. Table 36 shows the robust post hoc tests, which reveal that there was no significant difference between the control group and the non-fetishistic group, \(\hat{\Psi}\) = 8.45 (-10.63, 27.52), p = 0.268, but significant differences were found between the control group and the fetishistic group, \(\hat{\Psi}\) = 24.34 (3.76, 44.91), p = 0.017, and between the fetishistic group and the non-fetishistic group, \(\hat{\Psi}\) = 15.89 (-0.99, 32.77), p = 0.048. We know by looking at the boxplot (the medians in particular) that the fetishistic males yielded significantly higher rates of fertilization than both the non-fetishistic male quail and the control male quail. These results confirm the findings reported from the nonparametric tests in the paper.

ggplot(cetinkaya_tib, aes(x = groups, y = egg_percent)) +

geom_boxplot(colour = "#3E9BFEFF", fill = "#3E9BFEFF", alpha = 0.4) +

coord_cartesian(ylim = c(0, 100)) +

scale_y_continuous(breaks = seq(0, 100, 10)) +

labs(x = "Fetish group", y = "Percentage of eggs fertilised") +

theme_minimal()

| F | df | df (error) | p | Estimate | 95% CI | Effectsize |

|---|---|---|---|---|---|---|

| 5.02 | 2 | 22.20 | 0.016 | 0.62 | (0.20, 1.21) | Explanatory measure of effect size |

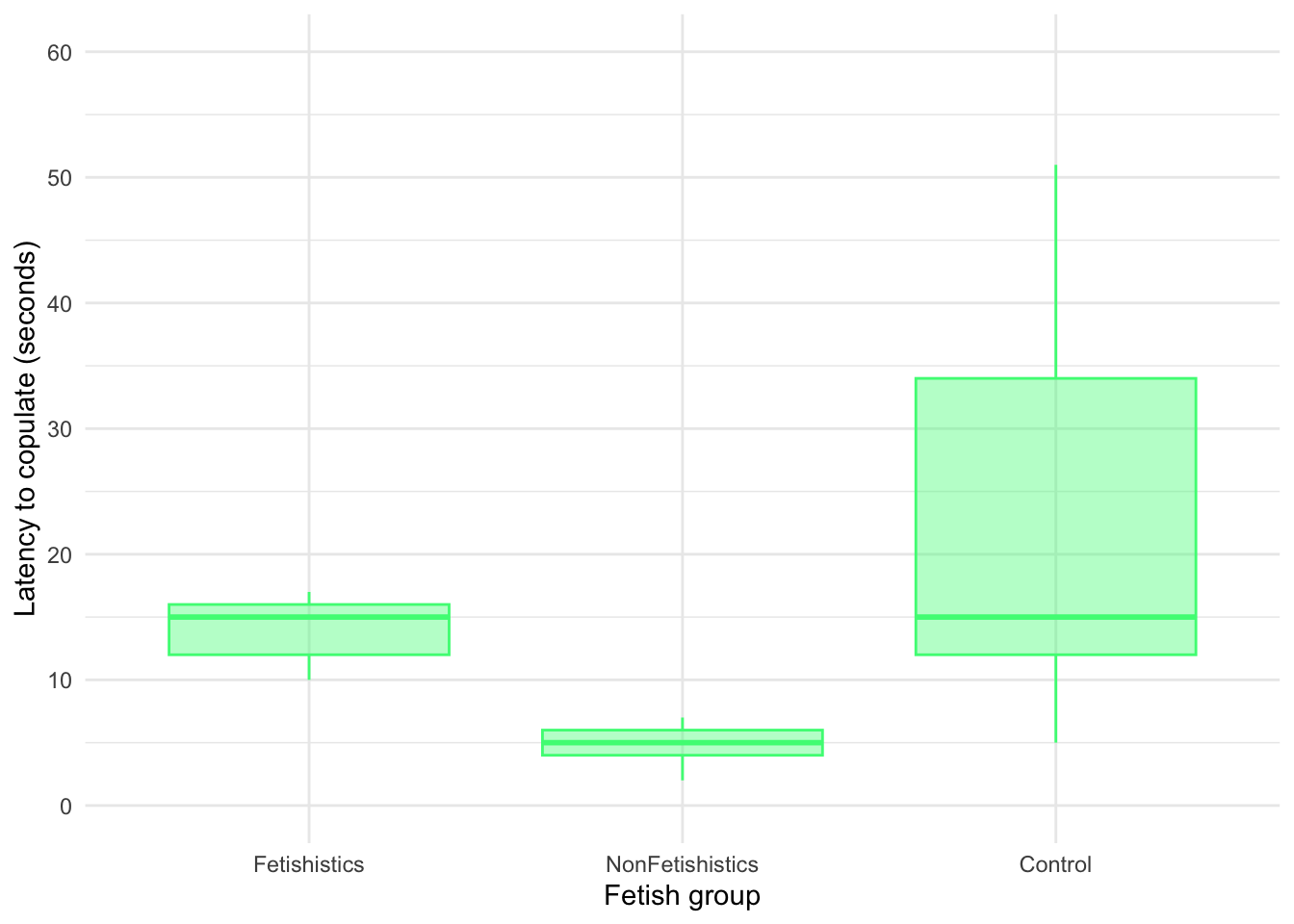

Latency to copulate

Figure 15 shows that the latency to copulate was lowest in the non-fetishistic group and higher but similar in the fetishistic and control groups. These groups have very different variances, which means the residuals will likely have too. As with the percentage of eggs, we’ll use a 20% trimmed mean test with post hoc tests. Table 37 tells us that there was a significant effect, F(2, 21.28) = 68.12, p < 0.001. Although we’ve applied a robust test rather than a nonparametric one the results of the study are confirmed.

Table 38 shows the robust post hoc tests, which reveal that there was no significant difference between the control group and the fetishistic group, \(\hat{\Psi}\) = -6.65 (-16.19, 2.90), p = 0.084, but significant differences were found between the control group and the non-fetishistic group, \(\hat{\Psi}\) = -15.65 (-25.12, -6.17), p < 0.001, and between the fetishistic group and the non-fetishistic group, \(\hat{\Psi}\) = 9.00 (6.88, 11.12), p < 0.001. We know by looking at the boxplot (the medians in particular) that the non-fetishistic males yielded significantly lower rates of fertilization than the fetishistic male quail and the control male quail. Again, these results confirm the findings reported from the nonparametric tests in the paper.

ggplot(cetinkaya_tib, aes(x = groups, y = latency)) +

geom_boxplot(colour = "#46F884FF", fill = "#46F884FF", alpha = 0.4) +

coord_cartesian(ylim = c(0, 60)) +

scale_y_continuous(breaks = seq(0, 60, 10)) +

labs(x = "Fetish group", y = "Latency to copulate (seconds)") +

theme_minimal()

| F | df | df (error) | p | Estimate | 95% CI | Effectsize |

|---|---|---|---|---|---|---|

| 68.12 | 2 | 21.28 | < .001 | 1.12 | (0.78, 1.61) | Explanatory measure of effect size |

Chapter 12

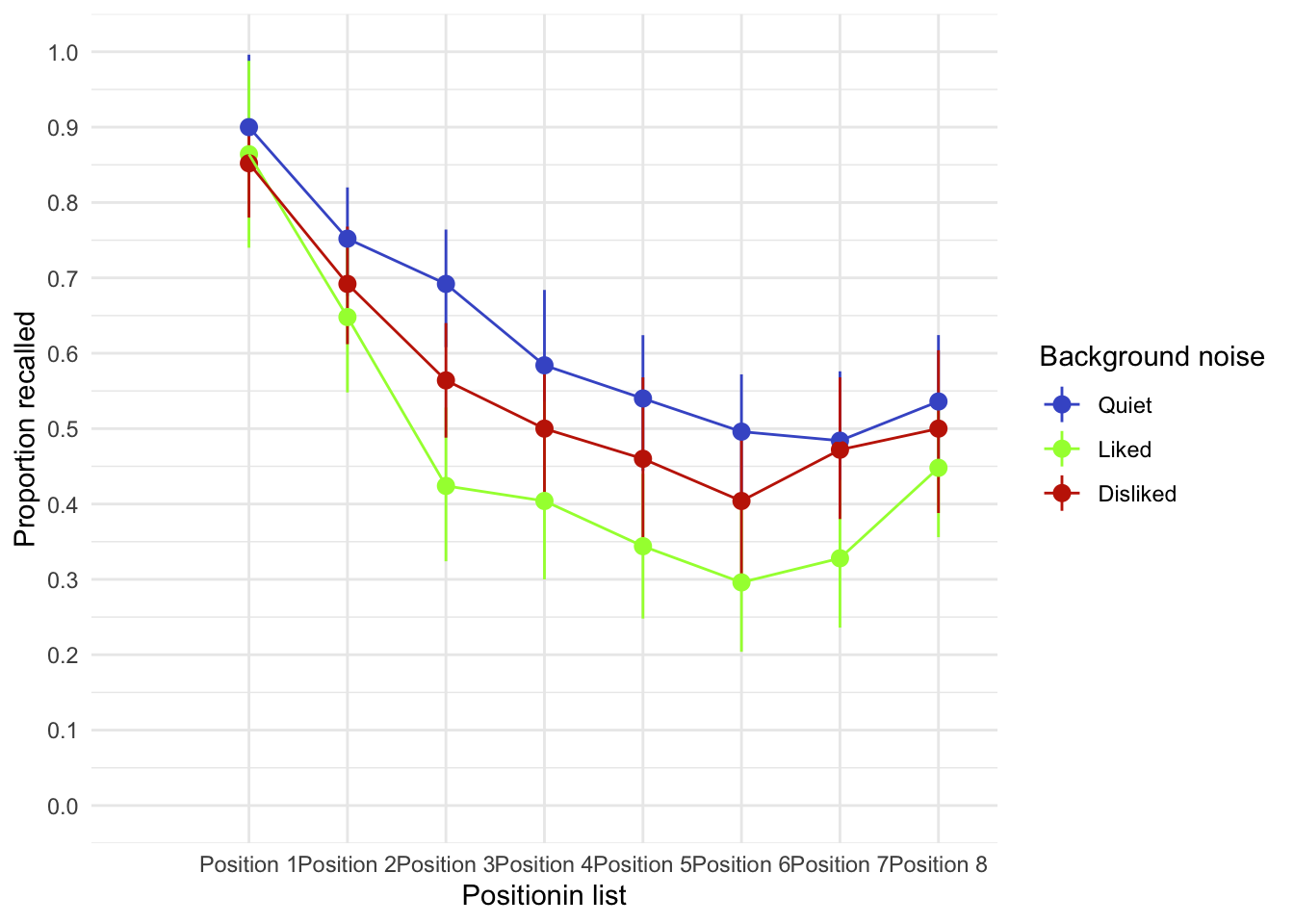

Space invaders

Data from Muris et al. (2008).

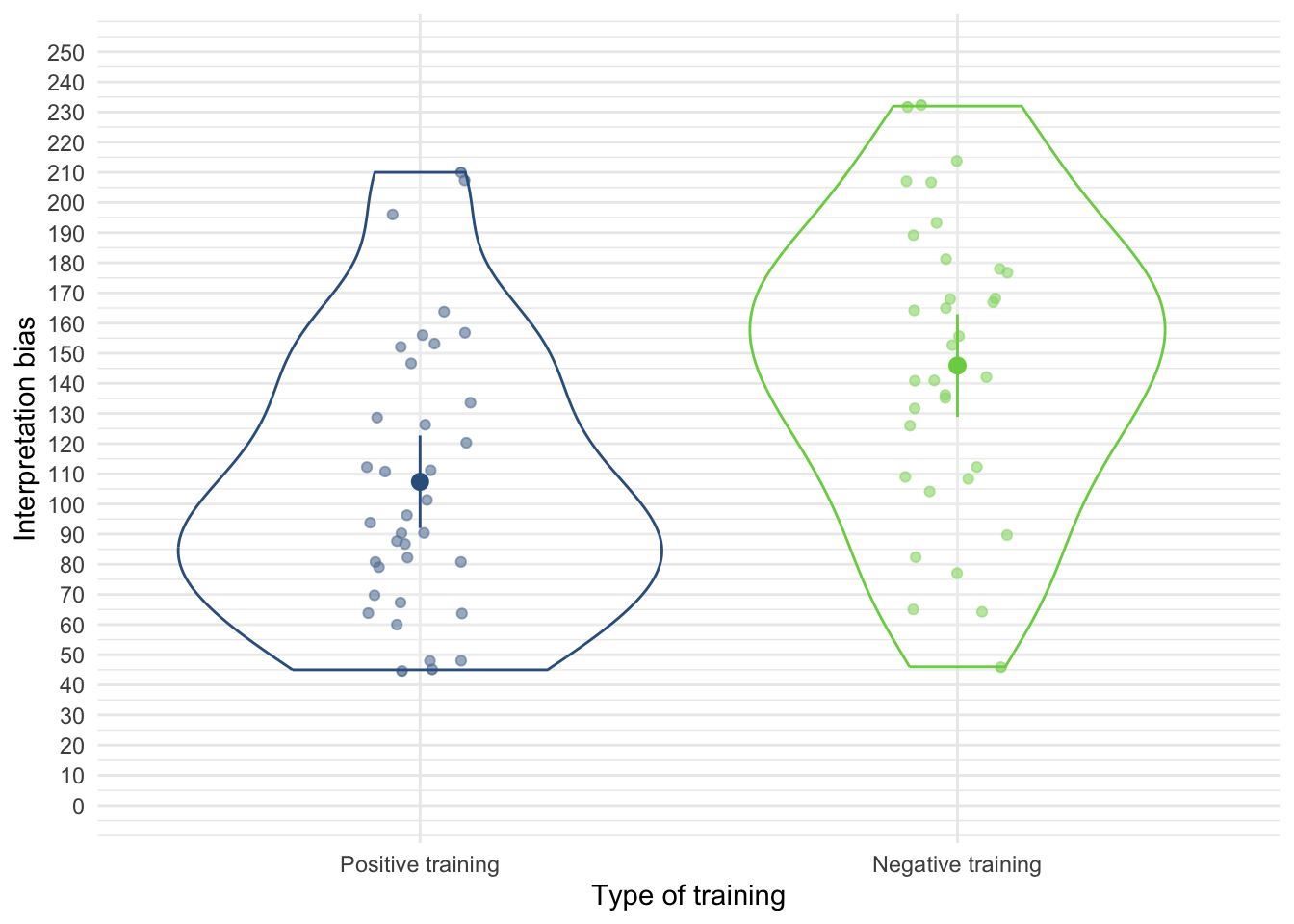

Anxious people tend to interpret ambiguous information in a negative way. For example, being highly anxious myself, if I overheard a student saying ‘Andy Field’s lectures are really different’, I would assume that ‘different’ meant rubbish, but it could also mean ‘refreshing’ or ‘innovative’. Muris et al. (2008) addressed how these interpretational biases develop in children. Children imagined that they were astronauts who had discovered a new planet. They were given scenarios about their time on the planet (e.g., ‘Onthe street, you encounter a spaceman. He has a toy handgun and he fires at you …’) and the child had to decide whether a positive (‘You laugh: it is a water pistol and the weather is fine anyway’) or negative (‘Oops, this hurts! The pistol produces a red beam which burns your skin!’) outcome occurred. After each response the child was told whether their choice was correct. Half of the children were always told that the negative interpretation was correct, and the reminder were told that the positive interpretation was correct.

In over 30 scenarios children were trained to interpret their experiences on the planet as negative or positive. Muris et al. then measured interpretational biases in everyday life to see whether the training had created a bias to interpret things negatively. In doing so, they could ascertain whether children might learn interpretational biases through feedback (e.g., from parents).

The data from this study are in the file

muris_2008.csv. The independent variable istraining(positive or negative) and the outcome is the child’s interpretational bias score (int_bias) – a high score reflects a tendency to interpret situations negatively. It is important to adjust for theageandgenderof the child and also their natural anxiety level (which they measured with a standard questionnaire of child anxiety called the screen for child anxiety related disorders (scared) because these things affect interpretational biases also). Labcoat Leni wants you to fit a model to see whether training significantly affected children’sint_biasusingage,genderandscaredas covariates. What can you conclude? View the solutions at www.discovr.rocks (or look at pages 475–476 in the original article).

Load the data using (see Tip 1):

muris_tib <- discovr::muris_2008In the chapter we looked at how to select contrasts, but because our main predictor variable (the type of training) has only two levels (positive or negative) we don’t need contrasts: the main effect of this variable can only reflect differences between the two types of training. We can, therefore, use the default behaviour of

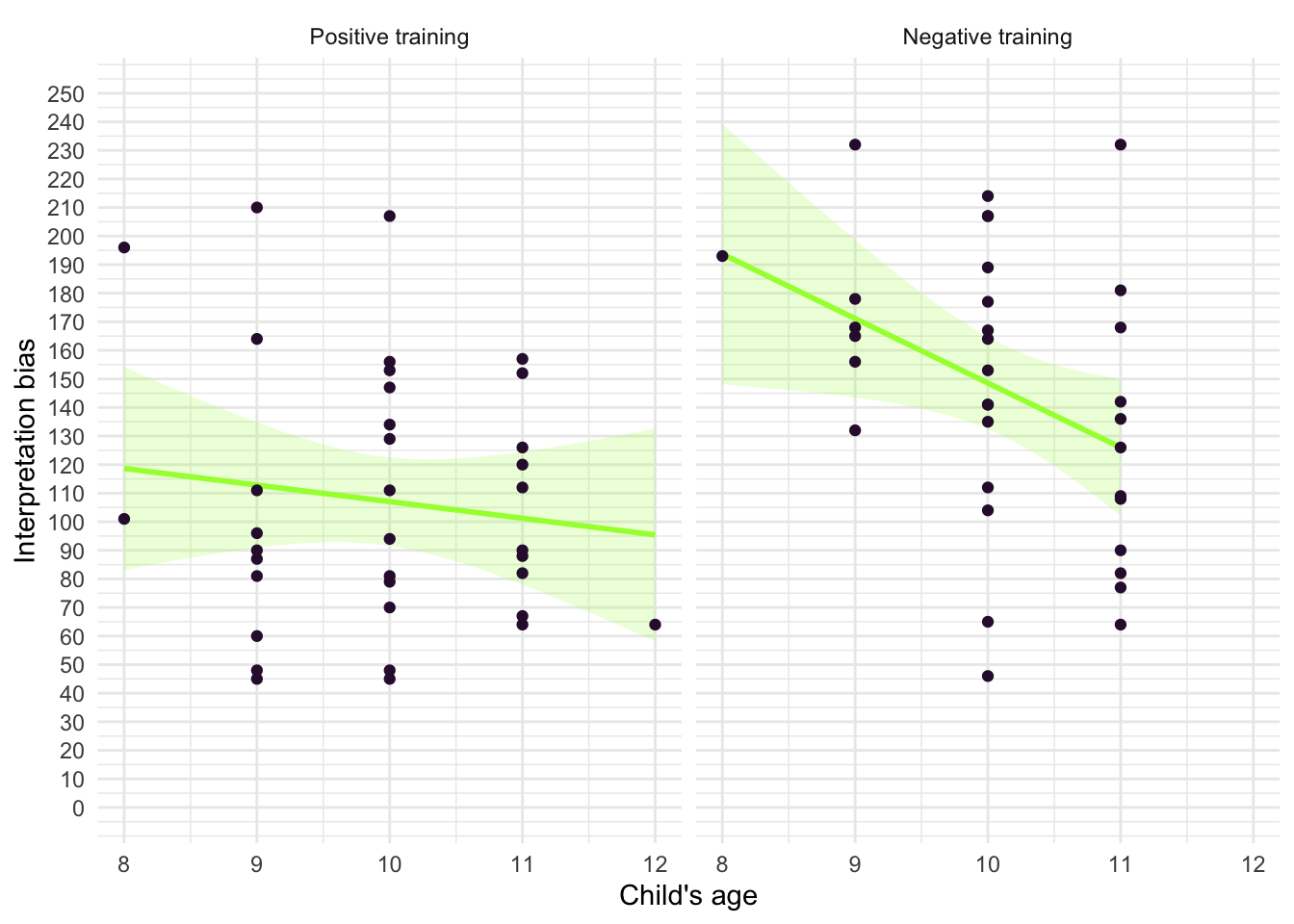

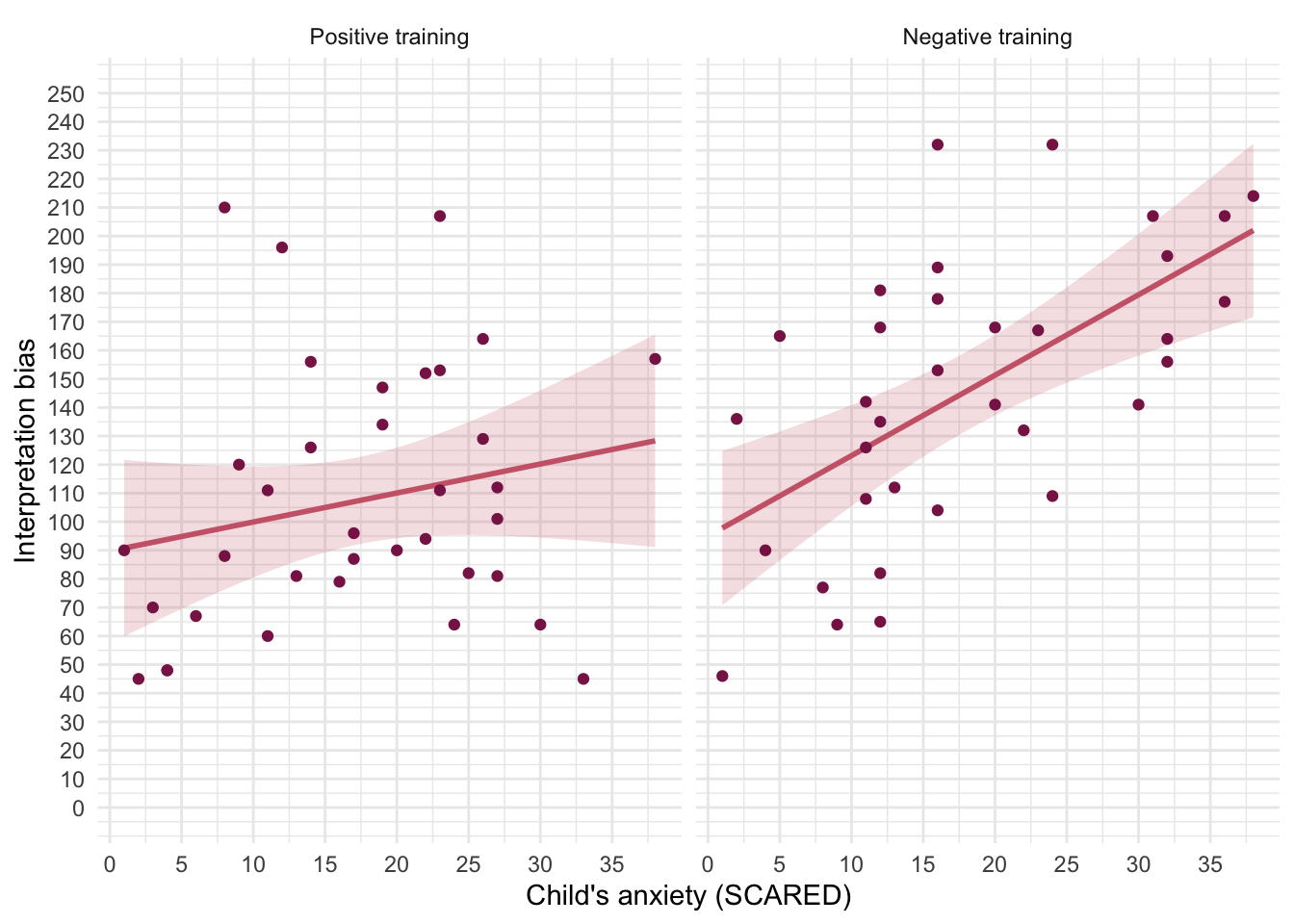

First visualise the data (Figure 16, Figure 17 and Figure 18)

ggplot(muris_tib, aes(x = training, y = int_bias, colour = training)) +

geom_point(position = position_jitter(width = 0.1), alpha = 0.6) +

geom_violin(alpha = 0.2) +

stat_summary(fun.data = "mean_cl_normal", geom = "pointrange", position = position_dodge(width = 0.9)) +

coord_cartesian(ylim = c(0, 250)) +

scale_y_continuous(breaks = seq(0, 250, 10)) +

scale_colour_viridis_d(begin = 0.3, end = 0.8) +

labs(x = "Type of training", y = "Interpretation bias", colour = "Type of training") +

theme_minimal() +

theme(legend.position = "none")

ggplot(muris_tib, aes(x = age, y = int_bias)) +

geom_smooth(method = "lm", colour = "#A2FC3CFF", fill = "#A2FC3CFF", alpha = 0.2) +

geom_point(colour = "#30123BFF") +

coord_cartesian(ylim = c(0, 250)) +

scale_x_continuous(breaks = seq(0, 15, 1)) +

scale_y_continuous(breaks = seq(0, 250, 10)) +

labs(x = "Child's age", y = "Interpretation bias") +

facet_wrap(~training) +